Google Warns Gemini Users The AI Automatically Saves Conversations

Google is advising Gemini (formerly Bard) generative AI chatbot users not to share any sensitive information, as human reviewers routinely read and analyze conversations, and the data may be used to train future iterations of the large language models (LLMs) behind the services. In a new support document, Google revealed it collects and retains Gemini chats for up to three years, along with related data like languages, locations, and devices used. It says the practices help “maintain safety and security” and enhance Gemini through machine learning.

Gemini Data Collection

In the support document, Google cautioned users not to enter “confidential information” or anything they wouldn’t want reviewers or Google to access. Users can limit such data collection by turning off Gemini App Activity in My Activity settings, though conversations are still saved for up to three days in case the company needs to respond to any feedback in that time. If the setting is left on, the information could be read and analyzed by humans before being incorporated into future models. While Gemini also uses context and past conversations to come up with responses, the privacy concerns center on how that data is used across the company’s network of products.

“Google collects your Gemini Apps conversations, related product usage information, info about your location, and your feedback. Google uses this data, consistent with our Privacy Policy, to provide, improve, and develop Google products and services and machine learning technologies, including Google’s enterprise products such as Google Cloud,” the support page explains. “To help with quality and improve our products (such as generative machine-learning models that power Gemini Apps), human reviewers read, annotate, and process your Gemini Apps conversations. We take steps to protect your privacy as part of this process. This includes disconnecting your conversations with Gemini Apps from your Google Account before reviewers see or annotate them. Please don’t enter confidential information in your conversations or any data you wouldn’t want a reviewer to see or Google to use to improve our products, services, and machine-learning technologies.”

The warning comes amid growing scrutiny of AI chatbots’ data collection and privacy practices. Rivals like OpenAI also retain ChatGPT conversations to train models, drawing concern from regulators. As generative conversational AI tools spread, firms must balance user privacy against using data to improve capabilities. Google’s policy shows the challenges of this balance for consumers versus enterprises.

Gemini Privacy

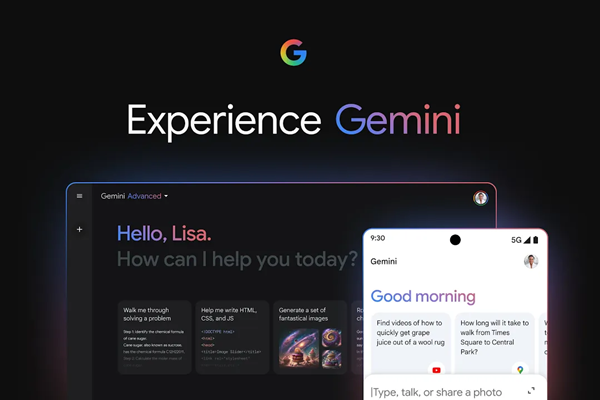

The disclosure arrived in the wake of the rebrand of Bard to Gemini and the release of Gemini Advanced, a subscription service utilizing Gemini Ultra, the most powerful version of the Gemini LLM group. Gemini Advanced is integrated into Google One and comes with access to that service. Google has also released a Gemini app for Android, with an iOS version on the way, supplanting Google Assistant on mobile devices, though not smart speakers as of yet.

If the privacy issues seem familiar, that’s because they are very much the same issues that faced voice assistants starting four years ago. At that point, the concern was contractors listening to audio recordings made by voice assistants, including accidental recordings made without the wake word being invoked. Consumers and regulators questioned why private information people didn’t know was recorded could be heard by an unknown contractor. Almost every voice assistant developer, including Google, paused or revised its contractor program. Apple apologized for its program and changed it to an opt-in system, removing contractors from the equation entirely. Google may hope to head off that kind of outrage with this warning and the option to turn off data collection by Gemini. However, whether that will prevent more regulation than the tech giant wants remains to be seen.

