Report: Apple Contractors Listen to Siri Recordings

Apple’s Siri voice assistant recordings are heard by contractors, according to a new report from the Guardian. The recordings include plenty of “conversations” or other noises that people probably did not realize were being recorded. This is only the latest in a recent spate of reports that some recordings of consumer interactions with popular voice assistants are heard by company contractors.

Standard Terms

A portion of Siri recordings is shared with Apple contractors for quality control and other tests. Apple’s consumer licensing terms hint at this, although not in quite those words. The idea is to improve Siri’s responses and its general ability to know when and how to respond to people.

Identifying details are supposed to be removed from the recordings before contractors listen to them. According to Apple, the recordings are just a few seconds long, and fewer than one percent of Siri’s activations are selected for testing each day. The report’s source, however, told the Guardian that personal information is still picked up and heard by the contractors. Medical information, business meetings, intimate relations, and explicitly criminal plans are all picked up by Siri when it supposedly hears the wake word.

False Alarms

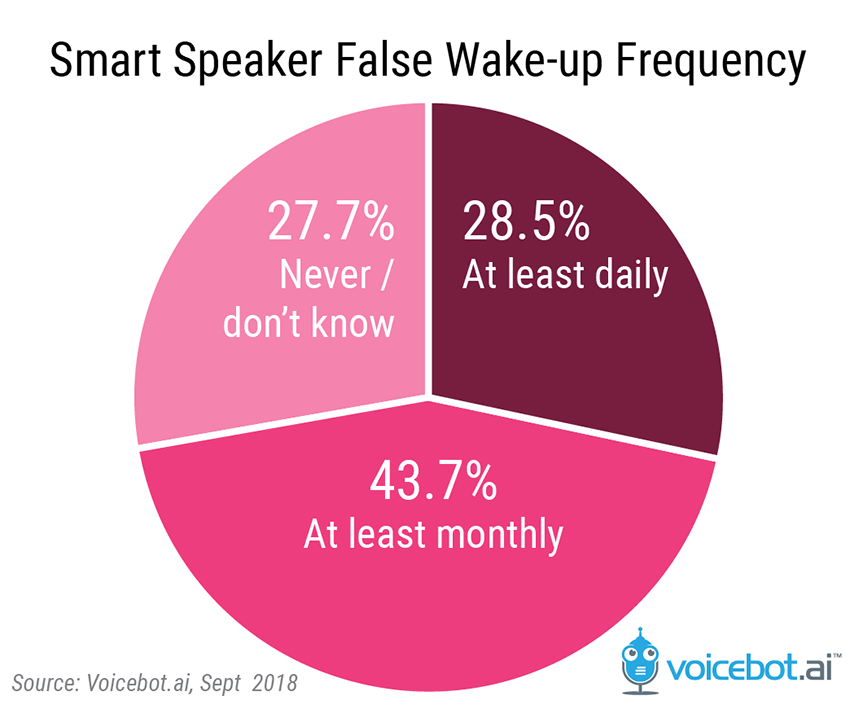

Voice assistants in smart speakers, and increasingly on smartphones, are designed to listen for a wake word or phrase that tells the AI system the user wishes to activate it. For Apple’s voice assistant, the common wake phrase is “Hey Siri.” The assistant is not supposed to wake up and start listening unless it hears this phrase. However, there are false activations, where the assistant believes the user has called it when the user has not. A September 2018 Voicebot survey of 375 voice assistant users found that over 70% of device users have noticed one of these “wake up” errors. Nearly 29% say these false wakeups occur daily.

The false wakeup phenomenon is not limited to Siri. Alexa and Google Assistant users report monthly and daily false wakeups at a higher rate than HomePod owners do. Either way, false wakeups are a characteristic of how these systems are designed.

To spare users from shouting over and over to wake up the device, voice assistants are deliberately permissive and activate on similar-sounding phrases as a usability feature. Typically, they shut down after the AI concludes that the user is not attempting to interact. The voice assistant providers then audit a portion of these false wakeups, which show up as errors in their logs. Further evaluation by human auditors enables the companies to confirm whether these errors were the result of false wakeups or of the assistant mishandling a genuine user request. This information is then fed back into the systems, in theory to improve performance. The net result is that a human sometimes hears information from a private conversation.
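The trade-off described above can be illustrated with a toy model. This is only a sketch under stated assumptions: the confidence scores, the sample phrases, and the two thresholds are invented for illustration, and nothing here reflects how Apple, Amazon, or Google actually implement wake word detection. It shows only that lowering the activation threshold reduces missed wakeups at the cost of more false ones.

```python
from dataclasses import dataclass


@dataclass
class Utterance:
    text: str
    score: float        # hypothetical detector confidence in [0, 1]
    is_wake_word: bool  # ground truth, as a human auditor would label it


def count_errors(utterances, threshold):
    """Return (false_wakeups, missed_wakeups) at a given activation threshold."""
    false_wakeups = sum(
        1 for u in utterances if u.score >= threshold and not u.is_wake_word
    )
    missed_wakeups = sum(
        1 for u in utterances if u.score < threshold and u.is_wake_word
    )
    return false_wakeups, missed_wakeups


# Invented sample data: scores are illustrative, not measured.
SAMPLES = [
    Utterance("hey siri", 0.95, True),
    Utterance("hey siri (mumbled)", 0.62, True),
    Utterance("hey siri (noisy room)", 0.55, True),
    Utterance("a city", 0.58, False),
    Utterance("seriously", 0.66, False),
    Utterance("good morning", 0.10, False),
]

for threshold in (0.5, 0.7):
    false_wakeups, missed_wakeups = count_errors(SAMPLES, threshold)
    print(
        f"threshold={threshold}: "
        f"false wakeups={false_wakeups}, missed wakeups={missed_wakeups}"
    )
```

With this made-up data, the permissive threshold of 0.5 catches every genuine wake phrase but also activates on two unrelated phrases, while the strict threshold of 0.7 eliminates the false wakeups and misses two genuine requests.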

Not So New

This revelation is not a surprise, based on other reports this summer. The fact that Google Assistant recordings, including accidental ones, are listened to by contractors came out just a few weeks ago. Reports on Amazon’s Alexa recording for the same purpose came out even earlier.

People worry about digital assistants and privacy, even as it becomes clear the two are only tangentially connected. A study by Microsoft showed 41 percent of voice assistant users are concerned about privacy invasion by devices that employ passive listening. For some, the worry about privacy has led to ingenious anti-passive-listening ideas like Project Alias, ClappervsAlexa, and the Mycroft Kickstarter.

While the companies say that all personally identifiable information is stripped from the recordings, that is not a foolproof mechanism. In 2018, an Amazon customer service representative responding to a GDPR request inadvertently sent one user the recordings of another user. The recipient then passed the recordings to a journalist with c’t magazine in Germany. After listening to 1,700 recordings, that journalist was able to positively identify the user in the recordings and make contact with them.

Still, some of the companies are taking steps to reassure users. Amazon recently created an Alexa privacy center website and implemented a voice command to delete Alexa recordings. Apple lacks that option, though that may change if there’s enough outcry over this news. Smart speaker ownership is rising, up 40 percent in the last year according to a Voicebot survey. That means voice privacy will remain an issue for the foreseeable future, and stories like this will continue to come out until some sort of solution is found.

