Amazon Alexa Unveils Command to Delete Voice Recordings

Alexa devices will delete voice recordings upon request thanks to a new voice command debuted by Amazon today, as first reported by VentureBeat. Asking Alexa to “delete everything I said today” will erase everything heard and recorded by the voice assistant that day. Amazon also plans to debut a more limited command, “Alexa, delete what I just said,” soon.

Deleting Alexa History by Voice Requires a Settings Change

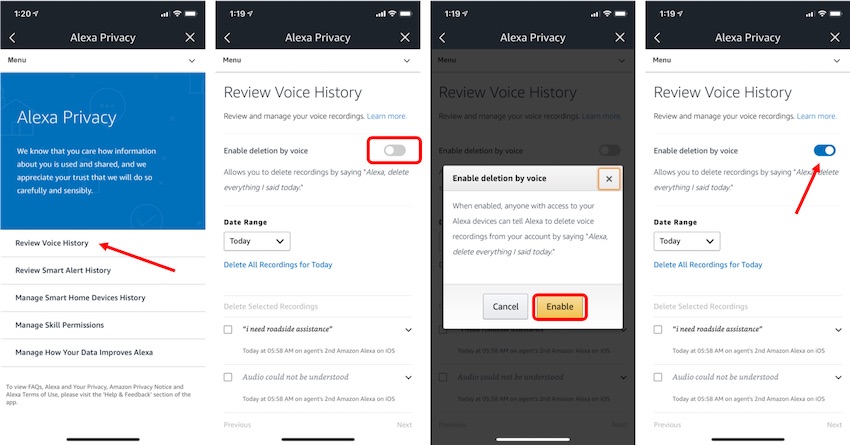

To enable voice deletion, you must first open the Alexa app and go to Settings > Alexa Account > Alexa Privacy > Review Voice History. You must then select the toggle next to “Enable deletion by voice” and confirm that you want to enable it. The confirmation step comes with a warning that, once the feature is enabled, anyone with access to your Alexa device can delete your voice recordings, and therefore your activity history, for that day.

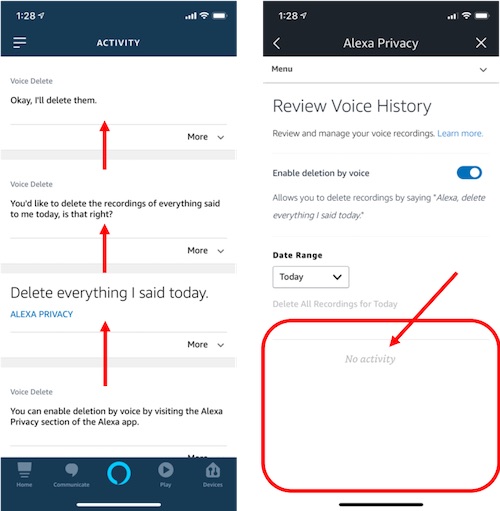

After you activate the voice deletion feature, you still need to request that the voice recordings be deleted. You can trigger the deletion manually in the Alexa app on the “Review Voice History” screen, or you can ask Alexa by voice to delete what you said that day. If you make the request by voice, that request will appear in your activity history, while other requests from that day will be removed. In testing by one of my colleagues today, the feature was available immediately after activation and the deletion was seemingly instantaneous.

A Shift Toward Privacy Protection

In addition to the new command, Amazon has created the Alexa Privacy Hub specifically to educate people on how they can customize Alexa and Echo devices for privacy. For now, there isn’t a way to disable voice recording storage entirely or to have recordings deleted automatically, but users can delete their entire voice history in one fell swoop at the privacy hub.

The new voice commands and privacy site, revealed in an announcement about Amazon’s new Echo Show 5 device, arrive at a critical moment in the larger debate over privacy and voice assistants. Although the recordings are made to improve how well Alexa understands voice commands, and to provide users with a record of their device activity, many people are apprehensive about how the information might be used. A recent study by Microsoft found that 41 percent of voice assistant users are concerned about their privacy due to passive listening by their devices. On the consumer side, privacy worries have spurred the development of anti-passive listening devices like Project Alias and the Mycroft Kickstarter.

Regulation Reasoning

Amazon, Google and other voice assistant developers are keeping an eye on more than just assuaging users who want to limit how much personal information is stored by their devices. Lawmakers and regulators are starting to write legislation specifically to deal with ambient listening devices.

California’s State Assembly just voted for a law that would require makers of passive listening devices to get consent before recording voices, and other states are considering similar laws. Meanwhile, a complaint filed by advocates with the Federal Trade Commission earlier this month claims that Amazon is violating a federal law known as the Children’s Online Privacy Protection Act (COPPA) by recording data from children younger than 13 on devices like the Echo Dot Kids, and that the company doesn’t do enough to help parents figure out how to delete that information. Amazon implemented what it views as a COPPA-compliant approach to using Alexa devices with children in September 2017.

Amazon and other voice assistant makers will likely continue to develop privacy solutions for their users at a rapid clip. Reassuring lawmakers and consumers that their data is protected will be crucial not only to comply with evolving regulations, but also to compete with each other and claim to produce the most secure, privacy-conscious voice assistant available.
