Google & Apple Hit Pause on Sharing Voice Assistant Recordings with Contractors

Google and Apple employees and contractors won’t be listening to recordings made by their respective voice assistants for the next few months. The temporary pause on listening to and transcribing audio recorded by their platforms comes after a spate of stories revealed that private conversations and intimate moments have been inadvertently recorded by voice assistants and reviewed by the companies as part of ongoing quality assurance. More notably, each of these articles has revealed that third-party contractors, and not just employees of the large tech companies, are tasked with reviewing these recordings.

Voluntary Recording Halt

Both Google and Apple claim that they are voluntarily pausing their listening programs to reevaluate and potentially change how they work.

German officials issued an order to Google prohibiting the practice of letting people listen to customer recordings for at least three months to allow for an investigation. Google agreed to extend the pause across the European Union. According to Google, the halt is voluntary, and one that it started right after leaks about how the program collects sensitive information came out in several media reports.

Apple declared in its own statement that the decision to stop people reviewing Siri recordings for what it calls “grading” will apply globally. The company made it clear that it plans to restart the program, but as an opt-in system in which people will have to affirm that they want to take part. While the German regulator’s ruling was not mentioned, it’s hard to believe it wasn’t a factor in the decision.

Amazon Takes the Opt-Out Approach

Amazon has taken a different approach with Alexa. Like Google and Apple, it has human reviewers listen to some recordings, but the company stresses that it also offers users the ability to opt out of having their recordings heard by any humans. Given that opt-out option, Amazon appears not to think it necessary to halt or radically change its current procedures.

An Amazon spokesperson commented in an email to Voicebot, “We take customer privacy seriously and continuously review our practices and procedures. For Alexa, we already offer customers the ability to opt-out of having their voice recordings used to help develop new Alexa features. The voice recordings from customers who use this opt-out are also excluded from our supervised learning workflows that involve manual review of an extremely small sample of Alexa requests. We’ll also be updating information we provide to customers to make our practices more clear.”

Voice Assistants Record More Than They Should

Voice assistant recordings are transcribed and analyzed by employees and contractors in order to correct and improve the assistants. It is part of a process called supervised learning, which is common in machine learning systems. Humans annotate a subset of interactions to ensure the assistant is responding appropriately, and some say the approach is critical to improving the assistants and making them better at recognizing uncommon requests and accents. The quality control and testing are part of the standard licensing terms people agree to when they buy a phone or smart speaker. According to the iOS license agreement:

“By using Siri or Dictation, you agree and consent to Apple’s and its subsidiaries’ and agents’ transmission, collection, maintenance, processing, and use of this information, including your voice input and User Data, to provide and improve Siri, Dictation, and dictation functionality in other Apple products and services.”

The privacy concerns arise when smart speakers and other devices record and share information that was not intended for the voice assistant. These unintended recordings are the result of wake-up errors, which occur when a voice assistant inadvertently activates in response to noise or a spoken phrase that resembles its wake word.

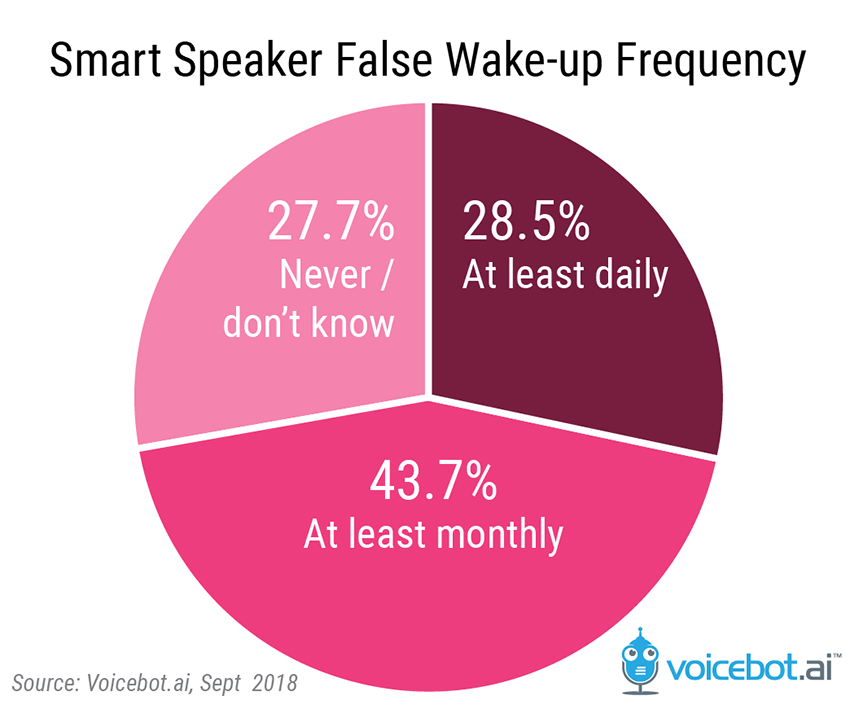

A Voicebot survey in 2018 found that 28.5 percent of smart speaker users experienced these false wake-ups at least daily, with another 43.7 percent reporting that the incidents happened at least monthly. Whether you notice the false wake-up or not, the voice assistant records the noise nearby for a few seconds, and a portion of those recordings is listened to later by a human to determine whether the activation was a false wake-up or a missed user command. That information is then used to determine how and whether the issue can be avoided in the future.

Uncomfortable and Illegal Conversations

Apple and Google contractors have heard everything from medical discussions to criminal planning to intimate moments on recordings that Siri and Google Assistant made without being deliberately called. The same goes for Amazon Alexa recordings. And, while the contractors are specifically not told where or whom the recordings come from, the voice assistants frequently capture more than enough personal information to reveal who is talking. This was also true in the memorable case of an Amazon customer service representative receiving a GDPR request and sharing the wrong person’s files.

Privacy is a big deal for people when it comes to technology, including smart speakers. That Apple and Google feel the need to reassure their customers is part of a larger industry effort, which also includes Amazon’s new Alexa privacy center website and a voice command to delete Alexa recordings. The pause on sharing recordings might give Apple and Google some breathing space to develop a more permanent solution that customers will be happy with, but it’s unlikely the voice privacy fight will be fully resolved any time soon.

Editor’s Note: This story was updated at 11:00 pm EDT on August 2, 2019 to include a response provided by Amazon.
