Microsoft Copilot Adds Generative AI Music Engine

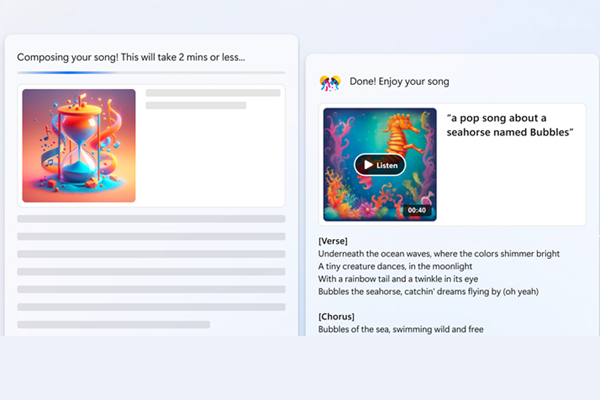

Through a collaboration with generative AI music company Suno, Microsoft has added a synthetic music engine capable of composing songs to its Copilot AI assistant. The new feature allows users to produce a tune and lyrics by prompting Copilot with a description of the kind of music and lyrics they want to hear.

Generative Music

Suno’s technology can generate complete musical compositions, including lyrics, instruments, and vocals, from basic text prompts with no musical ability required. Microsoft is making the AI model for music creation accessible through a Copilot plugin. After enabling the Suno extension, Copilot users can ask the AI for a “romantic ballad about puppies” or anything else they want to hear.

“You don’t have to know how to sing, play an instrument, or read music to bring your musical ideas to life. Microsoft Copilot and Suno will do all the hard work for you, matching the song to cues in your prompt,” Microsoft explained in its announcement. “We believe that this partnership will open new horizons for creativity and fun, making music creation accessible to everyone.”

Suno is one of several generative AI music projects. Riffusion, for instance, went from an experiment in turning text into music to a startup that raised $4 million. Riffusion uses the Stable Diffusion synthetic image generator, fine-tuned on music, to create a spectrogram, a visual representation of sound as a graph with time on the horizontal axis and sound frequency on the vertical axis. Riffusion then uses Torchaudio to convert the time and frequency information in that image back into playable audio. There’s also Voicemod’s synthetic song generator, which matches submitted lyrics to a selection of popular songs and AI voices, and the text-centered LyricStudio, which claims its AI has assisted in writing more than a million songs.
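The time-versus-frequency picture described above, commonly called a spectrogram, can be illustrated with a minimal sketch. This is not Riffusion's code: Riffusion generates the image with a diffusion model and then converts it to audio, while the example below only goes the other way, from a test tone to its spectrogram, using plain NumPy. The function name `spectrogram` and the frame size, hop, and sample rate are all assumptions chosen for illustration.

```python
import numpy as np

def spectrogram(signal, frame_size=1024, hop=256):
    """Return a magnitude spectrogram shaped (frequency_bins, time_frames)."""
    window = np.hanning(frame_size)
    n_frames = 1 + (len(signal) - frame_size) // hop
    # Slice the signal into overlapping, windowed frames (one per time step).
    frames = np.stack([
        signal[i * hop : i * hop + frame_size] * window
        for i in range(n_frames)
    ])
    # The FFT of each frame gives its frequency content; transpose so that
    # frequency runs along the vertical axis and time along the horizontal.
    return np.abs(np.fft.rfft(frames, axis=1)).T

# Illustrative input: one second of a 440 Hz sine tone at 16 kHz.
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440.0 * t)

spec = spectrogram(tone)
bin_hz = sample_rate / 1024                      # width of one frequency bin
peak_hz = spec.mean(axis=1).argmax() * bin_hz    # strongest frequency row
```

Each column of `spec` is one moment in time and each row is one frequency band, so a steady tone shows up as a single bright horizontal line near its pitch. Turning such an image back into sound requires estimating the phase information that the magnitude image discards, which is the harder direction.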

Meta has its own generative AI music and sound model named AudioCraft, which can transform a text prompt into music or sound effects by combining Meta’s text-to-music model MusicGen and text-to-natural-sound tool AudioGen. Both are supported by EnCodec, a neural audio codec that compresses audio into a compact form the AI models can work with. Meanwhile, Google’s MusicLM generative AI music composer has only been glimpsed in a few demonstrations, as its output is supposedly good enough that releasing it would raise concerns about copyright infringement.

The last few months have seen a rush toward generative AI music, tempered by plenty of unresolved ethical and legal questions. Deepfake creator Ghostwriter has had multiple hits with synthetic songs, and music producer and artist Timbaland shared a sample of a song featuring a deepfake voice of the Notorious B.I.G. before pausing work on it after backlash from fans of the deceased rapper. Meanwhile, musical artist Grimes has gone so far as to offer 50% of the royalties on any AI-generated song that uses her voice. Holly Herndon offers a free synthetic version of her voice, called Holly+, for anyone to make music with, and the musical group YACHT trained an AI model to write an entire album called “Chain Tripping.”

Some artists have gone to court over whether generative AI music exploits creators without consent or payment. There’s also pending legislation to give artists more control over any AI voice clones or musical style mimics. Streaming service Deezer is even working on building AI tools to detect and remove deepfake singers and synthetically generated songs from its platform. At the same time, Google and Universal Music Group are working on a preliminary deal to license artist voices and music for AI projects and voice clones.
