Google Shows Off New Text-to-Music AI Composer MusicLM

Google scientists have unveiled MusicLM, a generative AI model capable of producing musical compositions from written prompts. The text-to-music generator turns words into music in a range of styles and instrumentations, but it isn’t available to the public due to concerns about potential copyright infringement.

MusicLM

MusicLM applies the same kind of multi-modal generative AI as text-to-image tools like OpenAI’s DALL-E or Stable Diffusion. The researchers trained the AI on 280,000 hours of music tagged with descriptions to teach it how to generate appropriate tunes from text descriptions. The results shared by the researchers do a pretty good job of matching what the text says.

“MusicLM generates high-quality music based on a text description, and thus it further extends the set of tools that assist humans with creative music tasks,” the developers explain in their research paper.

MusicLM’s short descriptive tunes are just one way the AI operates. The researchers showed that the AI could listen to someone singing, humming, or whistling a snippet of music and then extrapolate from it to come up with additional bars of melody. The kinds of instruments, and even the skill level of the AI “performers,” can be adjusted. It can also set up a “story” of sequential musical riffs. For instance, the audio below starts with an “electronic song played in a videogame,” then continues with a “meditation song played next to a river,” “fire,” and “fireworks.”

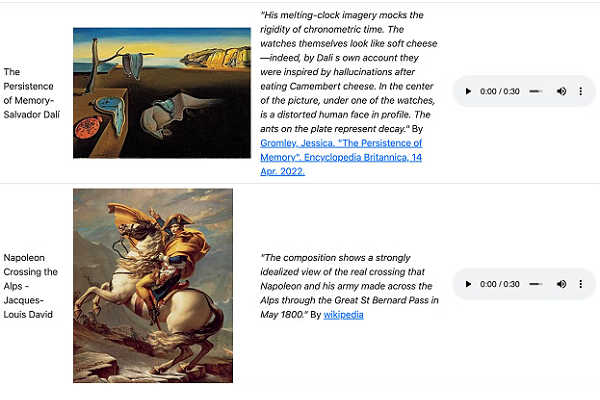

The AI can even produce sounds to fit visual art. The two examples below are what MusicLM created based on descriptions of The Persistence of Memory by Salvador Dalí, followed by Napoleon Crossing the Alps by Jacques-Louis David. The descriptions are in the image at the top of the article.

Musical AI

MusicLM is not the only musical generative AI model around. For instance, the visual and sonic AI project Riffusion uses Stable Diffusion to turn a text prompt into a sonogram, or spectrogram, a visual representation of sound plotted with time on the horizontal axis and frequency on the vertical axis. Riffusion then uses Torchaudio to convert that time and frequency data back into audio. There are also other tools that blend generative AI and music, like Voicemod’s synthetic song generator, which matches submitted lyrics to a selection of popular songs and AI voices, and the text-centered LyricStudio, which claims its AI has assisted in writing more than a million songs.
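To make the time-versus-frequency picture concrete, here is a minimal sketch of how a spectrogram can be computed from raw audio. It is not Riffusion's code and does not use Torchaudio; it relies only on NumPy, and the function name `spectrogram`, the frame size, the hop length, and the 440 Hz test tone are all assumptions chosen for illustration. The 24 kHz sample rate is borrowed from the figure quoted for MusicLM's output.

```python
import numpy as np


def spectrogram(signal, frame_size=1024, hop=256):
    """Return a magnitude spectrogram shaped (frequency_bins, time_frames).

    Hypothetical helper for illustration; frame_size and hop are arbitrary.
    """
    window = np.hanning(frame_size)
    n_frames = 1 + (len(signal) - frame_size) // hop
    # Slice the audio into overlapping, windowed frames (one per time step).
    frames = np.stack([
        signal[i * hop : i * hop + frame_size] * window
        for i in range(n_frames)
    ])
    # The FFT of each frame gives its frequency content. Transposing puts
    # frequency on the rows (vertical axis) and time on the columns
    # (horizontal axis), matching the layout described above.
    return np.abs(np.fft.rfft(frames, axis=1)).T


sample_rate = 24_000                       # assumed, in Hz
t = np.arange(sample_rate) / sample_rate   # one second of timestamps
tone = np.sin(2 * np.pi * 440.0 * t)       # a pure 440 Hz test tone

spec = spectrogram(tone)
freqs = np.fft.rfftfreq(1024, d=1 / sample_rate)
peak_hz = freqs[spec.mean(axis=1).argmax()]  # strongest frequency row
```

For a pure tone, the energy sits in a single horizontal band of the image, so `peak_hz` lands within one frequency bin of 440 Hz. Going the other way, from an image like this back to playable audio, is the harder step that Riffusion hands to Torchaudio.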

MusicLM isn’t going to match human composers, especially when it comes to lyrics. The “words” come out as garbled at best and are often just nonsense. The AI also has the potential to simply replicate existing music. The researchers found that about 1% of what MusicLM generated closely replicated music it was trained on, which means it could infringe on intellectual property rights. That’s why the current version of MusicLM is not accessible to the public.

Yesterday, Google published a paper on a new AI model called MusicLM.

The model generates 24 kHz music from rich captions like “A fusion of reggaeton and electronic dance music, with a spacey, otherworldly sound. Induces the experience of being lost in space.” pic.twitter.com/XPv0PEQbUh

— Product Hunt 😸 (@ProductHunt) January 27, 2023

“We acknowledge the risk of potential misappropriation of creative content associated to the use-case. In accordance with responsible model development practices, we conducted a thorough study of memorization, adapting and extending a methodology used in the context of text-based LLMs, focusing on the semantic modeling stage,” the researchers wrote. “We strongly emphasize the need for more future work in tackling these risks associated to music generation — we have no plans to release models at this point.”
