Meta Teaches Doodles to Dance With Open-Source Generative AI Project

Meta has introduced a generative AI tool for transforming static drawings into cartoons as an open-source project called Animated Drawings. The drawing-to-animation AI can process any approximately human-shaped doodle into a character capable of walking or dancing through a video.

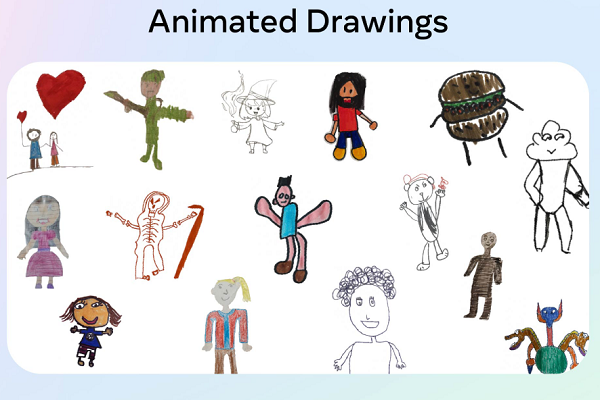

Animated Drawings

Meta’s Fundamental AI Research (FAIR) team first published a version of the tool online in 2021. Now, Animated Drawings is available as an open-source project, accompanied by the Amateur Drawings Dataset, a collection of 180,000 drawings, to encourage experimentation and expansion. Users can either pick a demo drawing or upload a human-shaped drawing of their own, provided they consent to Meta using it to train its models. Once the character’s dimensions are identified, users mark the character’s joints, the points where the limbs would bend on a human.

The actual movement cycle is chosen by the user after the AI processes the drawing for use in videos. There are 32 different animation options available on the demo website currently, roughly evenly divided among the dance, jumping, walking, and funny categories. The AI processes the drawing for animation using Meta’s object detection, pose estimation, and image processing-based segmentation. That’s the same generative AI technology underlying the Segment Anything Model (SAM) Meta recently debuted, which picks out component objects from images and videos.
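To make the role of the user-defined joints more concrete, here is a minimal Python sketch of how marked joints could drive a simple movement cycle. It is not Meta's implementation or the Animated Drawings API: the joint names, coordinates, and the `rotate_about`, `swing_limb`, and `walk_cycle` helpers are all hypothetical, and the "walk" is just each leg swinging around the hip by a sinusoidally varying angle.

```python
import math

# Hypothetical joint positions (x, y) in image coordinates, with y pointing down.
# In the real tool these would come from the pose-estimation step plus user edits.
JOINTS = {
    "hip": (0.0, 0.0),
    "left_knee": (-0.2, 1.0),
    "left_foot": (-0.2, 2.0),
    "right_knee": (0.2, 1.0),
    "right_foot": (0.2, 2.0),
}


def rotate_about(point, pivot, degrees):
    """Rotate a 2D point around a pivot by the given angle in degrees."""
    rad = math.radians(degrees)
    cos_a, sin_a = math.cos(rad), math.sin(rad)
    dx, dy = point[0] - pivot[0], point[1] - pivot[1]
    return (pivot[0] + dx * cos_a - dy * sin_a,
            pivot[1] + dx * sin_a + dy * cos_a)


def swing_limb(joints, pivot_name, limb_names, degrees):
    """Return a copy of the joints with one limb rotated around a pivot joint."""
    pivot = joints[pivot_name]
    posed = dict(joints)
    for name in limb_names:
        posed[name] = rotate_about(joints[name], pivot, degrees)
    return posed


def walk_cycle(joints, frames=8, max_swing=30.0):
    """Build a list of poses in which the two legs swing in opposite directions."""
    poses = []
    for i in range(frames):
        angle = max_swing * math.sin(2 * math.pi * i / frames)
        posed = swing_limb(joints, "hip", ["left_knee", "left_foot"], angle)
        posed = swing_limb(posed, "hip", ["right_knee", "right_foot"], -angle)
        poses.append(posed)
    return poses


if __name__ == "__main__":
    for frame, pose in enumerate(walk_cycle(JOINTS)):
        print(frame, pose["left_foot"], pose["right_foot"])
```

Because every limb joint is rotated rigidly around the hip, limb lengths stay fixed from frame to frame, which is one reason joint placement matters before any motion is applied.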

“Our system incorporates repurposed computer vision models trained on photographs of real-world objects. Because the domain of drawings, including that of children, is significantly different in appearance, we fine-tune the models using the Amateur Drawings Dataset. With this dataset and animation code, we believe that the domain of amateur drawings can inspire a new generation of creators with its expressive and accessible possibilities. We hope they will be an asset to other researchers interested in exploring potential applications for their work,” Meta research engineer Jesse Smith explained in a blog post. “Drawing is a natural and expressive modality that is accessible to most of the world’s population. We hope our work will make it easier for other researchers to explore tools and techniques specifically tailored to using AI to complement human creativity.”

Animating still images and producing synthetic video is on the rise among generative AI experiments, though Meta’s approach stands out partly for having evolved over a relatively long period of time. For instance, Stable Diffusion creator Stability.ai recently produced an image-to-animation tool called Animai in partnership with the digital collectible platform Revel.xyz. Meanwhile, synthetic media startup D-ID built its reputation on animating old photographs before launching its Creative Reality Studio, capable of producing videos from a single photo and an accompanying script. D-ID has since expanded to supporting real-time conversations with those synthetic video creations through an API or web app.
