How HuggingGPT Enhances Generative AI by Enabling ChatGPT to Mix-and-Match Hugging Face’s AI Models

Generative AI researchers at Microsoft have created HuggingGPT, a system that uses large language models to link AI models from machine learning communities to complete tasks, and have published the results in a research paper. The resulting framework can work across different domains and modalities, as its name suggests: it combines open-source generative AI developer Hugging Face and ‘generative pre-trained transformer,’ the GPT in ChatGPT.

HuggingGPT

Hugging Face offers developers an open-source collection of LLMs and other generative AI tools. The company is best known for its BLOOM model, but many of its models are embedded in software products to produce and edit text, write computer code, and generate images. The researchers wanted to solve complex AI tasks by combining disparate models, each limited to a specific domain. Hugging Face’s library of models designed for specific tasks and industries provided a helpful repository for that purpose. The success of LLMs at processing language well enough to generate conversations and synthetic media suggested to the researchers that something like ChatGPT could help manage multiple existing AI models and complete these more involved tasks.

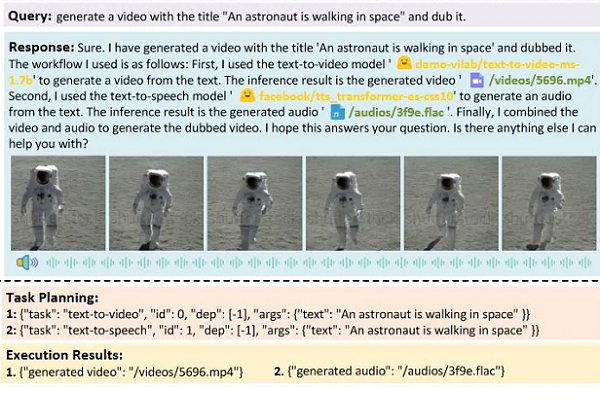

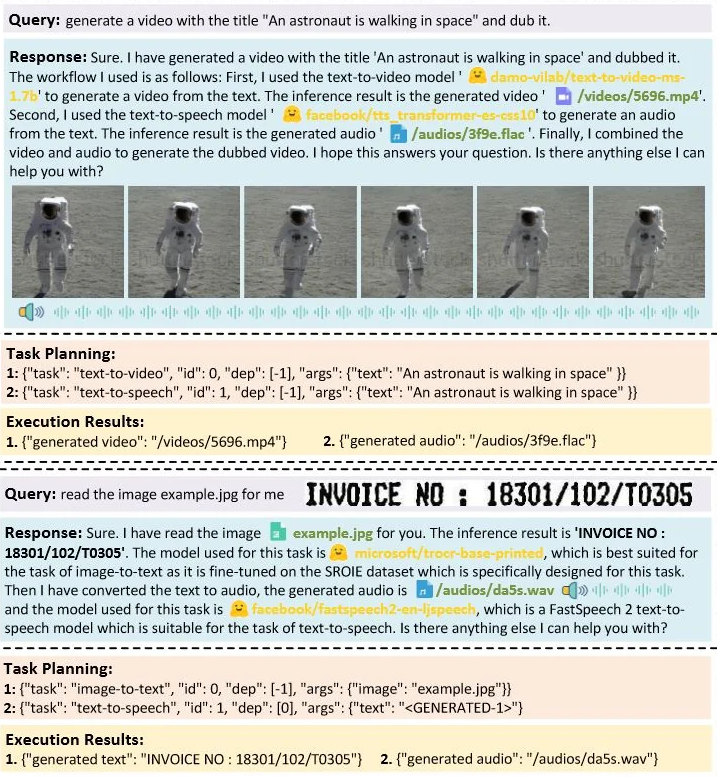

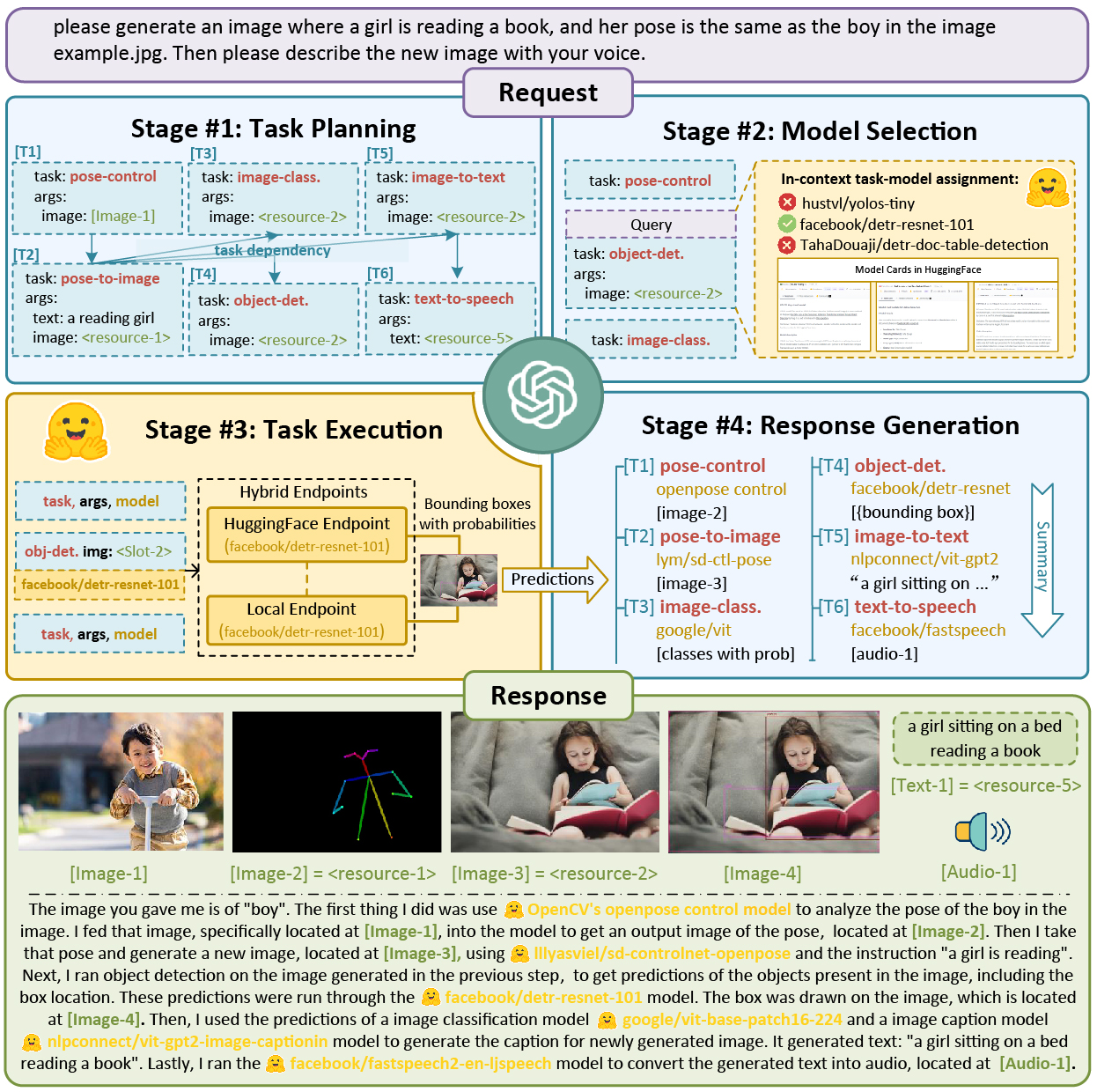

For the experiment, the researchers relied on ChatGPT to coordinate the models for the task. They empowered the generative AI chatbot to pick appropriate AI models from Hugging Face’s library based on their attached descriptions. ChatGPT then used the chosen models to complete the different facets of the task, and drew on its mastery of conversational language to share the result with the user and explain which models it used and how. The result worked for tasks combining written words, visuals, and audio. You can see an example above showing how HuggingGPT responded to a request combining text-to-audio and text-to-video. In the top example, the audio and video are produced simultaneously, while the bottom example shows the AI producing the text first and then the audio. The images above also include a more complete diagram of how it works.
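To make the selection step concrete, here is a minimal sketch of picking a model from its attached description. It is an illustration under loud assumptions, not the researchers' code: the catalog entries and model names are hypothetical, and simple word overlap stands in for the judgment that ChatGPT makes when it reads the descriptions.

```python
from dataclasses import dataclass


@dataclass
class ModelCard:
    model_id: str      # hypothetical identifier, not a real Hugging Face model
    task: str          # the kind of task the model handles
    description: str   # the attached description the controller reads


# Hypothetical catalog, invented for this illustration only.
CATALOG = [
    ModelCard("example/tts-small", "text-to-speech",
              "Reads English text aloud as speech audio"),
    ModelCard("example/tts-multilingual", "text-to-speech",
              "Speaks text in many languages"),
    ModelCard("example/video-gen", "text-to-video",
              "Generates a short video clip from a text prompt"),
]


def select_model(task: str, request: str, catalog=CATALOG) -> ModelCard:
    """Pick the candidate whose description shares the most words with the request.

    Word overlap is only a stand-in here; in HuggingGPT the LLM itself
    chooses among the candidates based on their descriptions.
    """
    candidates = [m for m in catalog if m.task == task]
    if not candidates:
        raise LookupError(f"no model available for task {task!r}")
    words = set(request.lower().split())
    return max(
        candidates,
        key=lambda m: len(words & set(m.description.lower().split())),
    )
```

Calling `select_model("text-to-speech", "read this english text aloud")` returns the `example/tts-small` entry, because its description overlaps most with the request.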

“The principle of our system is that an LLM can be viewed as a controller to manage AI models, and can utilize models from ML communities like HuggingFace to solve different requests of users. By exploiting the advantages of LLMs in understanding and reasoning, HuggingGPT can dissect the intent of users and decompose the task into multiple sub-tasks. And then, based on expert model descriptions, HuggingGPT is able to assign the most suitable models for each task and integrate results from different models,” the researchers explained in their paper. “By utilizing the ability of numerous AI models from machine learning communities, HuggingGPT demonstrates huge potential in solving challenging AI tasks. Besides, we also note that the recent rapid development of LLMs has brought a huge impact on academia and industry. We also expect the design of our model can inspire the whole community and pave a new way for LLMs towards more advanced AI.”
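The controller idea the researchers describe, breaking a request into sub-tasks, running a model for each, and integrating the results, can be sketched in a few lines. This is a rough illustration and not the code from the paper: the plan format, the task names, and the `plan_tasks` and `run_model` helpers are all hypothetical stand-ins for calls to an LLM and to hosted models. The fixed plan mirrors the case where text has to be produced before the audio.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def plan_tasks(request: str) -> list[dict]:
    """Hypothetical stand-in for the LLM planning step; returns a fixed plan."""
    return [
        {"id": 0, "task": "text-generation", "deps": []},
        {"id": 1, "task": "text-to-speech", "deps": [0]},  # needs the text first
    ]


def run_model(task: str, inputs: list[str]) -> str:
    """Hypothetical stand-in for a model call; records what would have run."""
    return f"{task}({', '.join(inputs) if inputs else 'user request'})"


def handle(request: str) -> str:
    plan = {t["id"]: t for t in plan_tasks(request)}
    # Order the sub-tasks so every task runs after the tasks it depends on.
    graph = {tid: t["deps"] for tid, t in plan.items()}
    results: dict[int, str] = {}
    for tid in TopologicalSorter(graph).static_order():
        task = plan[tid]
        results[tid] = run_model(task["task"], [results[d] for d in task["deps"]])
    # Integration step: the real system asks the LLM to summarize the results;
    # here they are simply joined in execution order.
    return "; ".join(f"step {tid}: {out}" for tid, out in results.items())
```

Sub-tasks with no dependencies between them, such as the audio and video in the simultaneous example, could run in parallel; this sketch runs everything sequentially for simplicity.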

The researchers shared all of the code in a GitHub repository named Jarvis. If you have API tokens from OpenAI and Hugging Face, you can also test out HuggingGPT for yourself on Hugging Face’s platform.
