Got It AI Debuts ELMAR, a Compact Large Language Model With Hallucination Filters

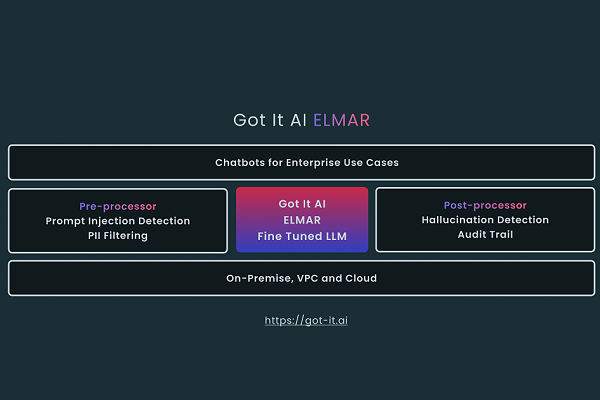

Got It AI has introduced a new large language model that can power generative AI chatbots like ChatGPT while remaining compact enough to run on-premises. The Enterprise Language Model ARchitecture (ELMAR) also includes internal checks on responses to reduce the incidence of false, hallucinatory answers.

ELMAR Independence

ELMAR can be used to build generative AI chatbots much like other LLMs, but it's far smaller than OpenAI's GPT-3 model from last year and can work on much less expensive hardware than required to run the most recent GPT-4 iteration. The available models range in size from as few as a billion parameters to as many as 20 billion. The AI offers not just conversation but online searches and even fact-checking with its TruthChecker service, released earlier this year. Clients can modify the AI with enterprise-specific datasets and fine-tune it for their customer experience and service needs.

It also is far less expensive than API-based models because users can fine-tune ELMAR on a specific dataset without running up inference costs. Beyond ELMAR’s own capabilities, Got It believes there’s demand for LLMs that can better guarantee data security. As with other on-device AI services, ELMAR doesn’t have to funnel data to and from the cloud to operate.

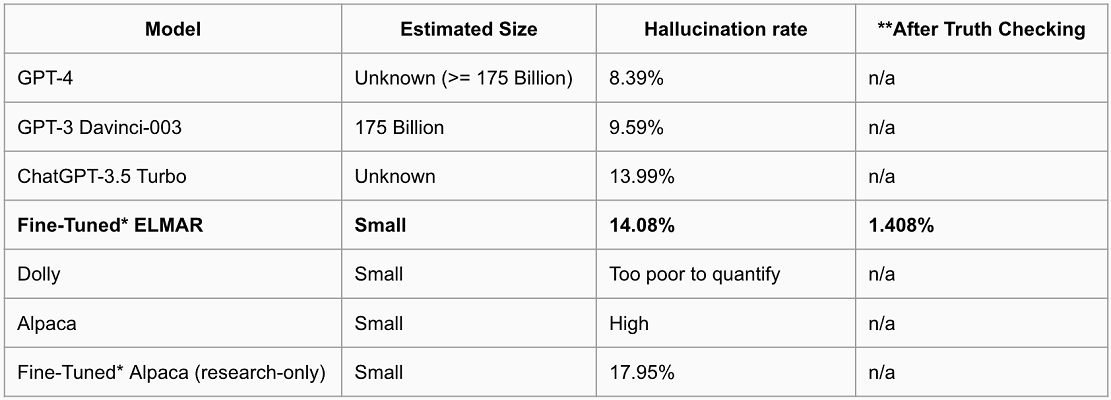

Got It AI published a test comparing ELMAR's ability to spot hallucinations to a half dozen other models, including ChatGPT, GPT-3, GPT-4, Databricks' GPT-J-based Dolly, and both Meta's LLaMA model and the Alpaca model Stanford scientists derived from it. Got It AI used TruthChecker to compare the models' hallucination rates and to show how ELMAR employed the tool to cut most of the hallucinations out of its responses. With fine-tuning, ELMAR performed as well as all of the bigger models in conversation over a 100-article test set, as seen in the chart above.

“Enterprises are shutting off access to ChatGPT because they want guardrails. The key guardrails we’re offering are in two critical areas: a truth checking model on the responses generated, and a pre-processing model for filtering out sensitive information sent to dialog-based chatbots,” Got It AI chairman Peter Relan said. “While LLaMA and Alpaca are research constrained licenses, our work enables commercial use. The ability to run ELMAR on premise creates a sense of control and safety around the use of generative AI models in the enterprise. It opens up a number of chatbot use cases involving potentially sensitive data: Internal Knowledge Base Queries, Customer Service Agent Assist, IT and HR Helpdesks, Customer Support, Sales Training, and many more. The availability of a solution for generative AI hallucinations is another key factor enabling enterprise adoption.”

Got It AI will share their generative AI creation and insights into how the technology is transforming enterprise operations at Voicebot’s Model Mania, the Collision of Generative AI and Conversational AI. The free online conference will take place April 20 at noon EDT.
