Apple Debuts Siri Pause Time, iPhone Live Captions in Slate of New Accessibility Tools

Apple unveiled a range of new and upgraded accessibility features for its devices this week. The collection included adding Live Captions to iOS devices and making Siri’s response time adjustable for those with speech impairments.

Accessible Apple

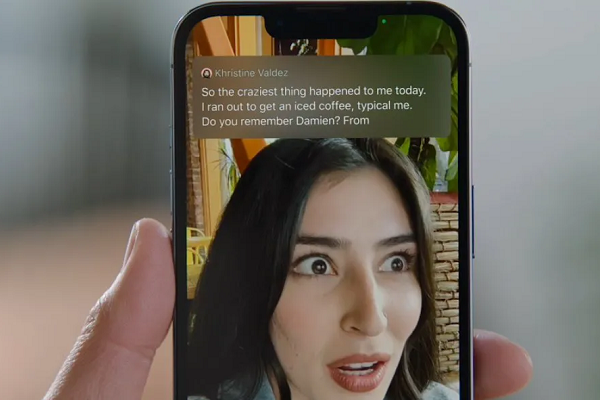

Live Captions brings real-time transcription to iPhones, iPads, and Macs, letting deaf or hard-of-hearing users read on screen what people are saying. The feature can transcribe phone calls, video conferences, and any streaming media. It can even use the device's microphone to transcribe what someone physically nearby is saying. All audio processing happens on the device, which Apple highlighted as a benefit for both privacy and speed.

For people with speech disabilities, Apple has updated Siri's options to accommodate their rate of speech. The new Siri Pause Time setting adjusts how long Siri waits before responding, so that it doesn't interrupt users while they are still speaking. The feature may have grown out of Apple's research into teaching Siri to recognize when someone is stuttering, allowing it to compensate and avoid interruptions and misunderstandings. Similarly, a new spelling mode for Apple's Voice Control lets users spell out, letter by letter, words that the system may not know or has trouble understanding. Apple has also made it easier for Apple Watch owners to use its existing voice control tools through the new Apple Watch Mirroring feature, which connects the watch to a paired iPhone so that users can control the watch without having to tap its screen.

The rollout also included accessibility features not directly related to voice AI. The new Door Detection tool tells blind or low-vision users how far away a door is, whether it's open or closed, and what kind of knob or handle it has. It will also read out any signs or symbols around the door. Apple's VoiceOver screen reader has also gained support for more than 20 additional languages and locales.

“Apple embeds accessibility into every aspect of our work, and we are committed to designing the best products and services for everyone,” Apple senior director of accessibility policy and initiatives Sarah Herrlinger said. “We’re excited to introduce these new features, which combine innovation and creativity from teams across Apple to give users more options to use our products in ways that best suit their needs and lives.”

Tech Accessibility

Apple's announcements fit into the steady stream of accessibility tools that tech companies have released over the last couple of years. Automated captioning has caught on at a particularly rapid pace. Instagram added live captioning for videos in March, following in the footsteps of Snapchat, while Twitter added automatic captions to its voice tweets last summer and YouTube began offering automated captions for livestreams in October. Google even built live captioning into the Chrome browser over a year ago. That feature built on Google's Project Euphonia, an effort to train voice assistants to understand people with unique speech patterns. Google's Action Blocks feature for Android, Voice Access service, Lookout reader app, and Google Assistant shortcuts all receive continual accessibility upgrades. Meanwhile, people with speech impairments can control Alexa directly thanks to Amazon's partnership with speech recognition startup Voiceitt, which also offers an iOS app to help people with atypical or impaired speech communicate.
