Google Upgrades Voice Access to Detect Unlabeled Android Icons

Google has upgraded the Voice Access app for Android so that it can spot and control icons on the screen, even when they aren't properly labeled. The improved AI gives Android users a more powerful tool for operating their devices by voice and makes those devices more accessible to people with visual or mobility impairments.

Label Reading

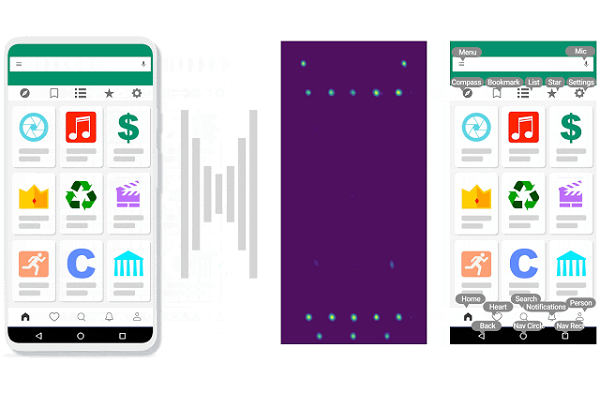

Voice Access provides hands-free control of Android devices, but it can only control what the software is able to identify. Accessibility labels in an app's code identify interactive icons and images for the AI, but it's not uncommon for those labels to be missing. Without a label, Voice Access couldn't tell that an icon on a smartphone was an app it should open when asked. Voice Access now sidesteps the need for accessibility labels by applying its new IconNet model to "look" at the pixels in a screenshot. IconNet was built with advanced machine learning techniques and trained on more than 700,000 app screenshots labeled by the engineering team. Voice Access relies on the model to match the icons on the screen against what it has learned, internally defining those buttons as though they had accessibility labels and interacting with them on request.

“Addressing this challenge requires a system that can automatically detect icons using only the pixel values displayed on the screen, regardless of whether icons have been given suitable accessibility labels,” Google Research software engineers Gilles Baechler and Srinivas Sunkara explained in a blog post. “IconNet can detect 31 different icon types (to be extended to more than 70 types soon) based on UI screenshots alone. IconNet is optimized to run on-device for mobile environments, with a compact size and fast inference time to enable a seamless user experience.”
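To make the idea of "defining buttons as though they had accessibility labels" concrete, here is a minimal sketch of what could happen after a pixel-based detector runs. Google has not published Voice Access internals at this level, so everything below is an assumption: the `Detection` record, the `synthesize_labels` and `tap_target` functions, the icon type names, and the 0.5 confidence threshold are all hypothetical, and the sketch does not reproduce IconNet itself. It simply assumes a detector that returns an icon type, a bounding box, and a confidence score for each icon, then turns those into speakable labels and resolves a command to a tap point.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Detection:
    """One icon found by a hypothetical pixel-based detector (not IconNet's real output format)."""
    icon_type: str                   # hypothetical type name, e.g. "menu" or "search"
    box: Tuple[int, int, int, int]   # (left, top, right, bottom) in screen pixels
    score: float                     # detector confidence in [0, 1]


def synthesize_labels(detections: List[Detection],
                      min_score: float = 0.5) -> Dict[str, Detection]:
    """Assign each confident detection a label, as if the app had provided one.

    Repeated icon types get a numeric suffix ("search", "search 2", ...) so every
    label can be spoken unambiguously. The threshold is a hypothetical choice.
    """
    labels: Dict[str, Detection] = {}
    counts: Dict[str, int] = {}
    # Sort top-to-bottom, then left-to-right, so numbering follows reading order.
    for det in sorted(detections, key=lambda d: (d.box[1], d.box[0])):
        if det.score < min_score:
            continue  # skip low-confidence guesses rather than mislabel the UI
        counts[det.icon_type] = counts.get(det.icon_type, 0) + 1
        n = counts[det.icon_type]
        label = det.icon_type if n == 1 else f"{det.icon_type} {n}"
        labels[label] = det
    return labels


def tap_target(command: str, labels: Dict[str, Detection]) -> Optional[Tuple[int, int]]:
    """Resolve a spoken command such as "tap search" to the center of the matching icon."""
    words = command.lower().strip()
    if words.startswith("tap "):
        words = words[len("tap "):]
    det = labels.get(words)
    if det is None:
        return None  # nothing on screen carries that label
    left, top, right, bottom = det.box
    return ((left + right) // 2, (top + bottom) // 2)


if __name__ == "__main__":
    # Hypothetical detector output for one screenshot.
    screen = [
        Detection("search", (900, 40, 980, 120), 0.93),
        Detection("menu", (20, 40, 100, 120), 0.88),
        Detection("search", (900, 600, 980, 680), 0.71),
        Detection("share", (500, 600, 580, 680), 0.30),  # below threshold, dropped
    ]
    labels = synthesize_labels(screen)
    print(sorted(labels))                        # ['menu', 'search', 'search 2']
    print(tap_target("tap search 2", labels))    # (940, 640)
    print(tap_target("Tap menu", labels))        # (60, 80)
```

Numbering duplicates in reading order is one simple way to keep spoken labels unique; a real system might instead show numbered overlays or ask the user to disambiguate.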

Accessible Icons

Voice Access debuted in beta nearly five years ago. The new iteration continues Google's refinements to the app and its service, making Android smartphones and other devices usable by people who may have difficulty seeing the screen or using the touch-based system. Google reinvigorated Voice Access last year by adding natural language processing, so that users no longer needed a specific and limited set of commands, and by making it compatible with older Android devices. The Android 11 beta is also where Google first mentioned adding a 'visual cortex' to Voice Access to improve how the AI labeled and interacted with on-screen visual elements. IconNet appears to be the culmination of that concept.

Voice Access is part of Google's larger accessibility program for Android. The tech giant has been rolling out several new and improved features in that vein, including Android Action Blocks, which combine Google Assistant commands into a single button on the home screen, and Sound Notifications, which alert people who can't hear critical noises like alarms or crying babies when those events occur. This year also brought eye-tracking controls to Google Assistant, an expanded Lookout feature for Android that can read food labels, and voice cues in Google Maps to guide people with limited sight.
