Voiceitt Debuts Direct Alexa Control for People with Speech Disabilities

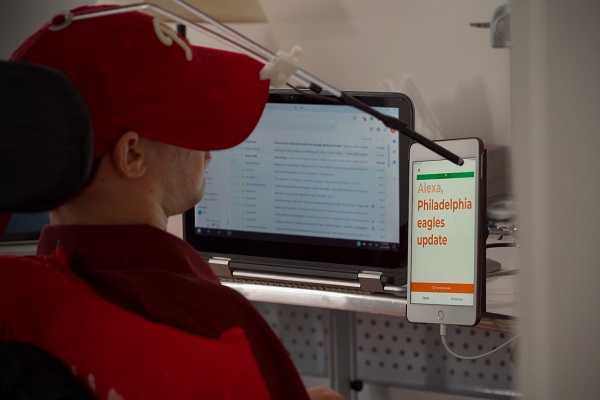

Alexa is working with Israeli voice tech startup Voiceitt to enable people with atypical and impaired speech to use the voice assistant. Voiceitt’s mobile app listens and translates a user’s words into a form Alexa can understand, giving those with limited speaking function access to Alexa and its myriad smart home connections that had been out of reach.

Alexa Access

Amazon’s voice assistant is often unable to understand mumbled or softly spoken words. People with certain degenerative diseases or partial facial paralysis may not even be able to get Alexa’s attention. Voiceitt’s platform overcomes this obstacle by working with a user to train the mobile app’s automatic speech recognition program with how they speak. The AI improves over time, comprehending what the user says more quickly and accurately with practice. The Voiceitt app then creates a model for turning the user’s words into Alexa-friendly data, and the voice assistant responds to the user as though they had spoken with crisp enunciation. The communication with Alexa is silent, but Voiceitt was first conceived as an audible conversation tool for people with impaired speech to be understood when conversing with humans, not an AI.

“I originally envisioned Voiceitt helping people communicate with other people, but as the world started to change as smart speakers became more and more part of our lives, we actually realized we can do much more than that,” Voiceitt CEO Danny Weissberg told Voicebot in an interview. “We have technology that can improve those products and make them accessible to those that need them most.”

Weissberg co-founded Voiceitt eight years ago but started to expand Voiceitt’s scope when the company joined the 2018 Amazon Alexa Accelerator and eventually became an Amazon Alexa Fund portfolio company. The startup worked with Amazon to test the integration with residents of a Philadelphia long-term care facility for people with physical disabilities called Inglis House. Voiceitt and the Inglis staff helped volunteers use the Voiceitt app and train the AI to understand what they say. The app soon transmitted their voice commands to Alexa, giving them the control over environmental and entertainment options that their limited motor control and voice clarity had stymied. The model is generated and operates through the Voiceitt app, with a deep Alexa integration eliminating the need to enable an Alexa skill. Using Alexa through Voiceitt may not be as smooth as it is for those without impaired speech, but it’s far more efficient than having to repeatedly ask a staff member, especially if the user sets up Routines to combine Alexa commands into a single phrase.

“The Alexa accelerator gave us the opportunity to expose our tech to Amazon, who became very supportive of what we are doing,” Weissberg said. “We did the successful small-scale pilot in 2019 to show that it works, and now there is seamless integration, and our application speaks with Alexa.”

Distant Connection

Providing Alexa access to people with disabilities offers obvious benefits in boosting their potential independence and self-reliance. They won’t need to ask a family member or professional caregiver to turn on lights or televisions if they can ask Alexa and be understood. That’s especially important during the ongoing COVID-19 health crisis when older people, in particular, should socially distance themselves as much as possible. Enabling the voice assistant to understand those with impaired speech also opens up the option of using new Amazon features like the Alexa Care Hub introduced this summer, which allows people from one home to consensually use a loved one’s Alexa-enabled smart device to keep track of their activity and serve as an emergency contact for Alexa to call.

Voiceitt’s arrangement with Alexa fits into the recent flurry of new voice accessibility features. Alexa has been keen to show off its homegrown accessibility tools, launching the Accessibility Hub to make them easier to find. Google is pursuing similar goals, including potential competition for Voiceitt in the form of Project Euphonia, a program for training voice assistants to understand what people with speech impairments are saying. It also showcased the many related features it launched this year, such as visual context for Android Voice Access and Google Maps voice cues that give directions to the visually impaired. Google also made a major update to Action Blocks, single buttons or commands that can host multiple Google Assistant commands, adding eye-tracking and symbolic images.

The Voiceitt app operates on a subscription basis and costs $199 per year, although there is a 30-day free trial period for those interested in testing out the technology. As the revenue stream is just beginning, Voiceitt is relying on the more than $15 million it has raised from investors. Just last month, the startup raised $10 million in a round led by Viking Maccabee Ventures. The money is being used to scale the company through arrangements like the one set up with Alexa. A significant portion of the new capital is going back in for researching ways to improve the platform with tools like a discrete trainer.

“Right now, the user needs to train every phrase and command individually. It does not extrapolate and won’t recognize a command it’s never trained with before,” Weissberg said. “The next generation will support fluent speech, and we are also developing an SDK so any company would be able to pick up and integrate Voiceitt into their product. As the world is becoming more and more voice-enabled, we can see we are going through a revolution. When I was a child, my father still brought home punchcards for computer programs. Now I have Alexa for everything.”
