Want to Make Consumers Adopt Your Bot? Call It a Toddler, Not an Expert: New Study

People prefer talking to chatbots described as toddlers over those described as shrewd executives, according to a new Stanford University study. The researchers found that changing the metaphor used to introduce a chatbot altered how people felt about the experience, even when the chatbot itself was identical.

Metaphorical Talk

Metaphors are a useful way to help people understand unfamiliar ideas, and are frequently applied to consumer AI. Slang and casual phrasing can make a bot seem a lot more human, just as better synthetic voices sound more like actual people. Regardless of a bot's actual abilities and features, people approach it with an idea of its personality, even if that personality is just a name and an image attached to a chatbot.

The researchers set up a basic conversational AI for helping people book flights and hotels and had 260 people test it out. The participants were introduced to the chatbot with one of four different metaphors, each rated as high or low in warmth and competence. Though the bot was always the same, people began conversing with it thinking it had been modeled after a toddler, an inexperienced teenager, a trained professional travel assistant, or a shrewd travel executive. A control group was not given any metaphor at all.

“With the emergence of conversational artificial intelligence agents, it is important to understand the mechanisms that influence users’ experiences of these agents,” the study’s authors wrote. “Metaphors can present an agent as akin to a wry teenager, a toddler, or an experienced butler. How might a choice of metaphor influence our experience of the AI agent?”

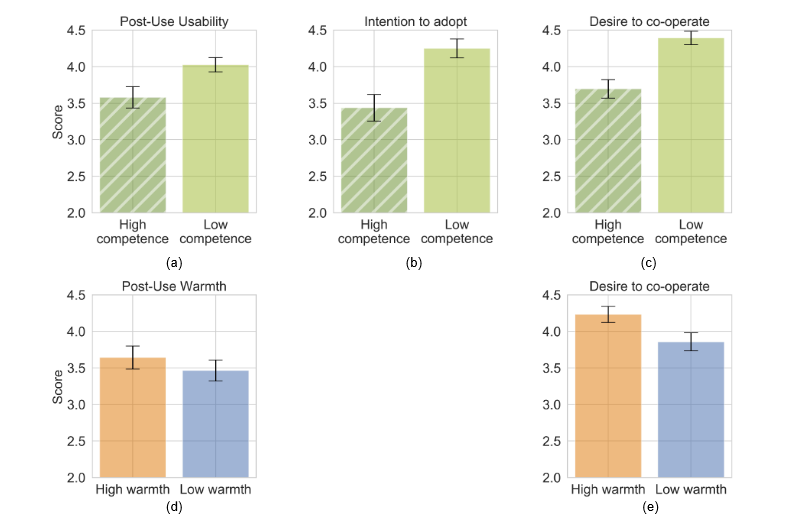

In surveys after booking their travel and accommodation, users showed a consistent preference for a warmer bot regardless of which metaphor was used. But, somewhat counterintuitively, people rated their experience with the bot they were told was modeled after a toddler higher than the one that was supposedly modeled after a trained, professional travel assistant. Both were rated as high in warmth, but people were more likely to say they would adopt and cooperate with the bot described as less competent, as can be seen in the chart above. At the same time, people said they preferred the higher-competence metaphor version of a bot when asked at the beginning of the process. What people think they want often turns out not to be what they prefer once they have actually used it.

Expectation Improvement

It’s hard to pin down exactly why people favor AI that they have been told is less competent. The answer could simply be that being told a chatbot is modeled after a toddler lowered expectations to the point where users were much more impressed with the experience than they would have been if told the bot could easily handle all of their tasks. A good experience that follows low expectations is more memorable than one in which the bot performs precisely as expected.

“Users are more tolerant of gaps in knowledge of systems with low competence but are less forgiving of high competence systems making mistakes,” the study’s authors suggest. “The intention to adopt and desire to cooperate decreases as the competence of the AI system metaphor increases.”

Expectations influence experiences. It’s why people will rate the same wine as better tasting if they are told it is more expensive. Wine sellers have to find a price point high enough that people believe it reflects quality, but not so high that most people won’t buy it. The new study suggests the same goes for marketing AI, except in terms of describing competence. Judging from the results of the study, the description needs to convey enough capability that people want to try the AI, but not so much that it falls short of their expectations and they stop using it. Since people will anthropomorphize a bunch of algorithms even if they know better, this kind of psychological work may end up defining a lot of how AI is presented in the future, augmenting the actual platform with a layer of pleasant metaphor that encourages people to keep talking.
