Facebook Shares Software to Teach Robots How to Navigate by Sound

Facebook announced that it is open-sourcing a tool for teaching an artificial intelligence how to identify and navigate to the source of a sound. The SoundSpaces tool lays the foundation for teaching “embodied AI,” such as robots, how to process sounds as a way of locating their source in a room or home.

Acoustic Recreation

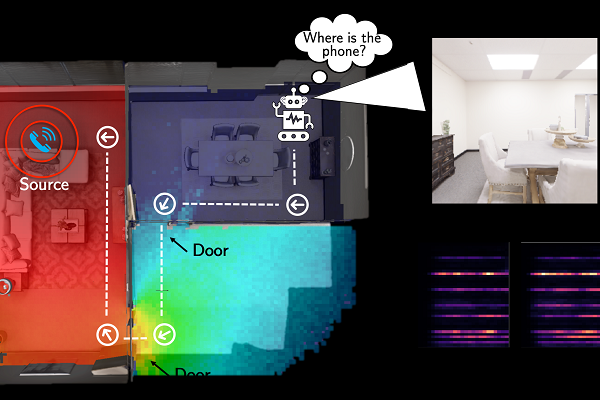

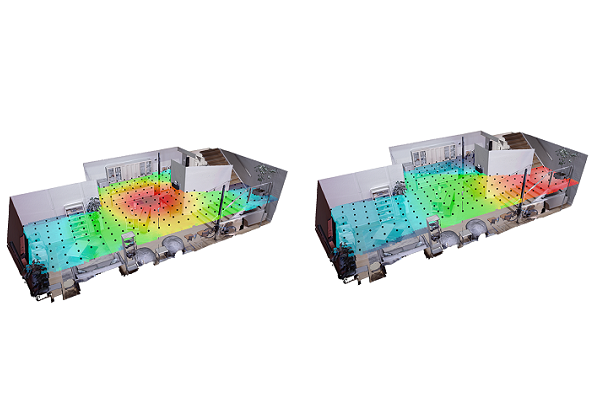

SoundSpaces creates virtual 3D environments that mimic rooms and other indoor spaces, including how sound behaves in them. Developers can then simulate sounds, like a ringing phone, to train an AI to work out what the sounds are and where they are coming from. Robots usually need very detailed maps and specific navigational instructions to get where they are ordered to go. That is a standard approach for industrial processes, but applying the same unchanging precision to the places where people live is a lot harder. Sensors and models that can build an evolving map of a room and of where sounds are coming from are a crucial next step. With enough data on a real-world environment, the AI can then devise a way to navigate to the object making the sound, a challenge Facebook calls AudioGoal.

“To our knowledge, this is the first attempt to train deep reinforcement learning agents that both see and hear to map novel environments and localize sound-emitting targets,” Facebook wrote in a blog post. “With this approach, we achieved faster training and higher accuracy in navigation than with single modality counterparts. Unlike traditional navigation systems that tackle point-goal navigation, our agent doesn’t require a pointer to the goal location. This means an agent can now act upon ‘go find the ringing phone’ rather than ‘go to the phone that is 25 feet southwest of your current position.’ It can discover the goal position on its own using multimodal sensing.”
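
To make the contrast with point-goal navigation concrete, here is a deliberately tiny sketch in Python. It is not the SoundSpaces API and involves no reinforcement learning. It assumes an empty grid with no walls or echoes, a made-up `loudness` function with inverse-square falloff, and an agent that can compare how loud the sound would be one step in each direction. All names are hypothetical. The point is only that the agent reaches the source without ever being handed its coordinates.

```python
# Toy illustration only: hypothetical names, not the SoundSpaces API.
from typing import List, Tuple

Position = Tuple[int, int]


def loudness(listener: Position, source: Position) -> float:
    """Assumed inverse-square falloff in free space (no walls, no echoes)."""
    dx = listener[0] - source[0]
    dy = listener[1] - source[1]
    return 1.0 / (1.0 + dx * dx + dy * dy)


def navigate_by_sound(start: Position, source: Position,
                      max_steps: int = 100) -> List[Position]:
    """Greedy hill climbing on loudness.

    `source` is used only to simulate what the agent hears; the agent
    itself never reads the goal coordinates.
    """
    path = [start]
    current = start
    for _ in range(max_steps):
        x, y = current
        neighbors = [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]
        best = max(neighbors, key=lambda p: loudness(p, source))
        if loudness(best, source) <= loudness(current, source):
            break  # no neighboring cell is louder, so stop here
        current = best
        path.append(current)
    return path


if __name__ == "__main__":
    # The "ringing phone" sits at (3, -2); the agent starts at the origin.
    print(navigate_by_sound((0, 0), (3, -2)))
```

A real home breaks every one of these assumptions, since walls block and reflect sound, which is why Facebook trains agents in simulated acoustics rather than relying on a simple rule like this one.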

See and Hear

The multimodal concept fits with Facebook’s ongoing open-sourcing of its machine learning models for AI navigation. Back in January, Facebook shared models it claimed could get around an indoor environment with 99.99% accuracy. The social media giant released the models as an open-source tool that could be used to enhance smart homes and make voice assistants more useful. Facebook described ways smart home devices with cameras could help people keep track of things they can’t immediately find or aren’t home to look for. Add a Tile tracker, or the pinging sound many earbuds can make, to the audio training models that Facebook opened up, and a robot could find pretty much anything visible or audible. Facebook hasn’t mentioned any robots it is working on for consumers, but Amazon is said to be working on an Alexa-powered robot, while Samsung has been demonstrating the Ballie and its home navigation for almost a year. Any robot that can see and hear will be a lot more popular than the Roomba-style options that constantly bump into walls on their perambulations.
