Banks Can Create an Alexa Skill in Just Three Steps with the Conversation.One Platform

Rachel Batish, CRO, Conversation.One

Conversation.One is an omni-channel platform that builds conversational apps for organizations, from chatbots to voice apps like Amazon Alexa skills. The company recently launched a self-service platform that lets organizations create an Alexa skill, Google Home Action, Facebook Messenger bot and other conversational apps in just three steps. I recently got in touch with Rachel Batish, the company’s CRO, to discuss the new self-service platform, the future of voice technology and what Conversation.One is looking to do next.

What problem is Conversation.One trying to solve?

Conversation.One basically tries to provide answers and solutions for two of the main challenges organizations face with conversational solutions. The first one is the technicality: literally how to build a conversational solution, and how to build one for all the different devices and services out there, from voice-enabled assistants like Alexa to chatbots like Facebook Messenger. This is the immediate challenge. The second challenge is how to build the conversational user interface. It is very different from a graphical UI, especially when you have a large number of questions you want to answer. How do you design for voice? One very simple example is on Alexa: if I’m asking for a phone number, I probably want Alexa to repeat it twice to make sure I have it down correctly. But with a chatbot, you wouldn’t need it repeated twice. There’s more you can use visually with a chatbot.
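
To make the phone number example concrete, here is a minimal Python sketch of a channel-aware response. It is only an illustration, not Conversation.One's implementation: the function name `render_phone_number` and the channel labels `"voice"` and `"chat"` are assumptions made up for this example.

```python
def render_phone_number(number: str, channel: str) -> str:
    """Build a reply containing a phone number, adapted to the channel.

    Hypothetical helper: the channel names "voice" and "chat" are
    assumptions for this sketch, not identifiers from any real platform.
    """
    if channel == "voice":
        # On a voice device the listener cannot re-read the answer,
        # so spell out the digits and say them twice.
        spoken = " ".join(ch for ch in number if ch.isdigit())
        return f"The number is {spoken}. Again, that's {spoken}."
    # In a chat window the number stays on screen, so once is enough.
    return f"The number is {number}."


print(render_phone_number("555-0100", "voice"))
print(render_phone_number("555-0100", "chat"))
```

The same answer is produced from one piece of business logic, and only the final wording differs per channel.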

Tell me about the process of building the self-service platform.

We wanted to provide a simple wizard that builds conversational apps like an Alexa skill and a Google Home Action in one single flow. We started by targeting financial institutions before we had even built the first conversational tool, to better understand their challenges. We found out that building an actual “conversation” was the main issue for most of them. With this in mind, we designed our solution for financial institutions to include 16 different queries that the user can ask, each supported by a couple hundred different sample phrases that belong to that intent. For example, if a user wants information about their balance, we have 600 or 700 phrases that people can use to ask for their balance – even if they are grammatically wrong. The higher the accuracy of the conversation, the better the experience it creates for the customer.
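
The idea of intents backed by many sample phrases can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the platform's matching logic: the intent names, the sample phrases, the word-overlap (Jaccard) scoring and the `0.3` threshold are all invented for the example.

```python
import re

# Hypothetical intents, each with a handful of sample phrases.
# A real catalogue would hold hundreds of phrases per intent.
SAMPLES = {
    "check_balance": [
        "what is my balance",
        "how much money do i have",
        "how much i got in my account",  # ungrammatical on purpose
    ],
    "recent_transactions": [
        "show my recent transactions",
        "what did i spend lately",
    ],
}


def tokens(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def match_intent(utterance, samples=SAMPLES, threshold=0.3):
    """Return the intent whose sample phrase overlaps most with the
    utterance (Jaccard similarity), or None if nothing is close enough."""
    words = tokens(utterance)
    best_intent, best_score = None, 0.0
    for intent, phrases in samples.items():
        for phrase in phrases:
            sample = tokens(phrase)
            union = words | sample
            score = len(words & sample) / len(union) if union else 0.0
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else None


print(match_intent("whats my balance"))
print(match_intent("tell me a joke"))
```

With this kind of matching, adding more sample phrases per intent widens the range of wordings that land on the right intent, which is why a large phrase set matters for accuracy.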

We initially built those questions and sample answers manually. But now we’ve added our machine learning system to the platform which collects the data from real-time conversations, identifies failures and successful flows, and improves the conversation automatically. Over time as people ask new questions, in different forms and ways, the system will discover the user’s intent and improve the conversation on-the-fly. Conversations will become more efficient and better for the user. In conversational UI, you can’t limit the user.

What are the three steps of the self-service platform?

The first step is to just log in to the dashboard and choose the industry and the organization’s name. By choosing the industry, they pick the relevant conversation for their vertical (banking, retail, etc.). Then, when they choose the name of their organization, they are actually connecting to their APIs. We have over 10,000 financial institutions’ APIs already built in. This is extremely important for our clients because we found out that getting the APIs can become a substantial challenge.

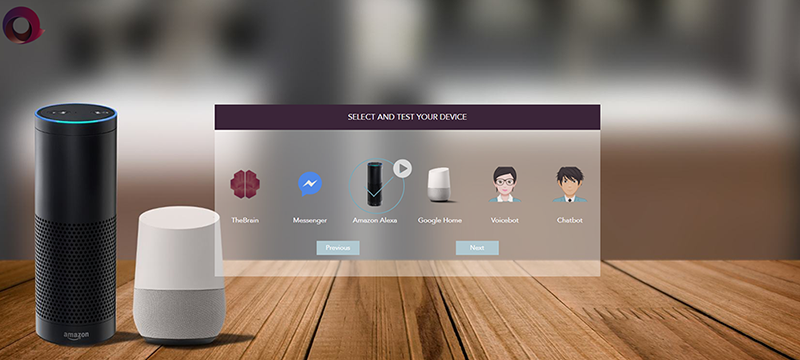

The second step lets them choose what devices and services they want to support, like Facebook Messenger, Amazon Alexa, Google Home, etc. They can start with one or all. The good thing is that they only need to go through one single process for all devices and services. In addition, we also provide the ability to purchase the output of our machine learning data, which we named “The Brain”, basically letting them use the dictionary that was built.

The third and final step is just to click “Submit” and deploy the app on the relevant platforms. We have a built-in guide on how to submit an Alexa skill, Google Action or Facebook chatbot. Our clients also have the option to further customize their skill or action, add or delete questions, connect to a different API, or create another API to bring more engagement with the user.

How long does it take to build a skill from start to finish?

It takes no time unless you want to add extra customization. But even if you do, it will just take a couple of hours. Since we already have the conversations set in place, it really doesn’t require anything else. If customers are coming from industries that are not yet automatically supported (anything other than financial and retail), it might take them a few more days.

How many clients do you have using the platform today?

We have some 30 companies engaged on our platform, and we are hoping to see the first clients launch within the next week. The reason for the wait is that financial skills undergo a thorough certification process by Amazon, and we are obliged to wait for their full approval.

Why did you decide to start with financial institutions?

First, as a team, we had previous experience working with financial institutions. Second, our first product was an omni-channel platform for mobile where we focused on financial institutions as well, so we knew the challenges in designing solutions for them. We also have a pretty good relationship with the financial industry and the relevant ecosystem, so we thought it would make sense to start in a place where we are most familiar. It was a good choice.

What was the response to the app development platform?

In general, we see a lot of enthusiasm, more than we actually expected. It can actually serve any institution. We also decided to put a lot of emphasis on the conversation so they don’t have to build the business logic themselves and we automatically connect to their API. It’s been amazing to see how many financial institutions, even the smaller ones, like the credit unions, are interested in delivering their info over the Amazon Echo and Google Home.

Why did you decide to launch the platform on Google Home as well?

Alexa is very popular but people still ask about Google Home. And it was our strategy from the very beginning that clients would want to have their solution deployed on as many devices and services as possible. It reminds us of the time smartphones came out. People wanted an app for the iPhone as well as for Android phones. Then came all the tablets, like iPads and the different Android devices. We are experiencing exactly the same trend right now with the Echo, Google Home, Apple HomePod and other devices. The possibility for small and large organizations to literally get a conversational app on more than one device in a few days or a week is priceless.

Why is it important for financial institutions to get on Alexa and Google Home?

Banks use IVR systems, which can be very annoying for the end user and expensive for the organizations themselves. We see from our clients that they see the benefit of using a voice app to support their other knowledge-based applications. It makes sense, as I think those systems will eventually replace the IVR system.

In addition, small organizations today feel that they have to be a little more competitive with the larger ones in terms of what they are offering technologically. With a conversational app, they can be exactly like the big ones. As for the large banks, they also need to “fight back” against fintech and social apps that are taking over some of their business. Look how easy it is today to transfer money: people do not even have to go to the bank anymore. They can do it through Facebook, Google or another third party. This is where our clients feel threatened. By being able to respond faster to those trends, they can keep up with the growing competition.

What is different about building an app for Alexa versus Google Home?

As a platform that actually eliminates the differences, for our clients it is exactly the same! Business-wise, we do see that Alexa has a lot more requests, but people are interested in Google Assistant because of the languages it supports. Right now, Alexa only supports English and German. There are a lot of clients looking for Spanish language support. This is where Google could gain an advantage in the market over Alexa. It is a very interesting war.

Is there anything that surprises you about how people interact with Alexa and other voice assistants?

I think what mostly surprised me is how differently adults talk to these devices compared to young children. I have two daughters, who are nine and seven. Whereas I am a little more cautious in what I’m asking because I don’t trust it will understand me, my daughters ask anything they want – and in many cases, it surprises me how it can understand them correctly. They treat Alexa like another member of the family. They talk to her like a person – “Do you like us?”, “Do you like living with us?”, “Do you have a boyfriend?”. Questions I would never think about asking.

Actually, looking at the way my daughters interact with those devices, it certainly shows that this is the future of communication. A lot of the things we used to do with our phones, we now just ask Alexa to do, like play music, set a timer, check the weather, etc. I always like to refer to the movie “Her” when talking about conversational solutions; I think we’re really going in that direction. It won’t be too long before people have relationships with their virtual assistants. I agree that this is very scary, but it won’t be for our kids. In some respects, my daughters trust Alexa more than they trust me.

What is next for Conversation.One?

We plan to provide a general eCommerce solution for conversations, and then add special features for relevant industries. We found out that there is a lot in common between the industries. We would start, though, with supporting the five largest eCommerce platforms. Our goal in the end is for “The Brain” to serve different segments and to learn from those segments so that what it learns carries over to different verticals, just like our own brain.
