Nvidia Enhances Industrial Robotics With On-Device Generative AI Features

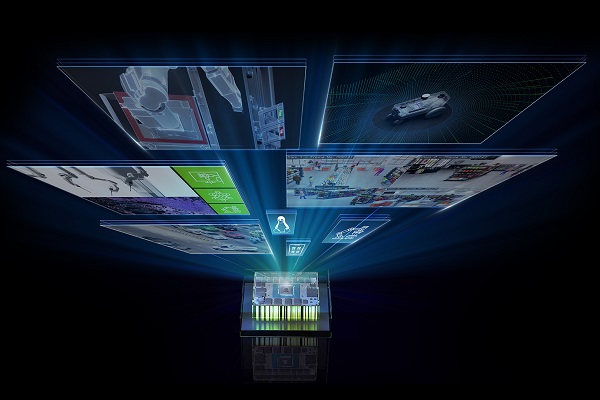

Nvidia has begun augmenting its industrial and robotics Jetson hardware platform with ‘on-edge’ generative AI features that operate within a device rather than requiring communications with the cloud. The company claims that the updates will allow over 10,000 Nvidia customers across sectors like manufacturing, logistics, and healthcare to more easily adopt emerging generative AI capabilities that promise to boost automation.

Generative AI Robots

Nvidia’s upgrades to its Jetson software aim to streamline the design and operation of generative AI in industrial systems. The idea is to incorporate generative AI features without needing large amounts of task-specific training data. Nvidia is providing developer tools like pre-trained models, APIs, and microservices so engineers can quickly build AI into edge devices like robots, manufacturing systems, and logistics networks without starting from scratch. For example, generative AI could allow a robot to understand natural language commands, adapt trajectories on the fly to avoid obstacles, or identify defects in products as they zip down the assembly line, all without needing extensive reprogramming. The same tools could also be used to teach wireless sensors to track assets around a warehouse and automatically provide inventory updates. Instead of reprogramming the system, a user could simply converse with a robot to teach it a new task.

The updates build on Nvidia’s existing Isaac robotics framework and Metropolis platform for industrial devices, which thousands of customers already use. The enhancements are meant to make it easier to tap into emerging AI techniques like generative models. This includes access to Nvidia’s new Jetson Generative AI Lab, where engineers can get optimized tools and tutorials for deploying AI applications ranging from text generation to visual data processing. Nvidia is also unveiling new computer vision microservices and APIs that developers can use to add next-gen AI capabilities to automation systems. Additionally, a collection of new pre-built AI templates will let engineers plug and play modular components tailored for manufacturing, inspection, logistics, and more. Smoothing the integration of advanced models like generative AI into edge devices will be crucial for leveraging AI’s full potential across industries.

“Generative AI will significantly accelerate deployments of AI at the edge with better generalization, ease of use, and higher accuracy than previously possible,” Nvidia vice president of embedded and edge computing Deepu Talla said. “This largest-ever software expansion of our Metropolis and Isaac frameworks on Jetson, combined with the power of transformer models and generative AI, addresses this need.”

Nvidia AI Dominance

Adding generative AI to Nvidia’s industrial robotics products fits the company’s current, successful strategy of applying the technology wherever it is feasible. Nvidia casts a long shadow over nearly everything involving generative AI right now and has shown no signs of resting on its laurels. The company recently showcased how its Grace Hopper CPU+GPU Superchip can outperform any rival on the MLPerf industry benchmark tests. Nvidia suggests the MLPerf results make its new TensorRT-LLM software, which is designed to improve LLM efficiency and inference performance, more enticing.

TensorRT-LLM leverages Nvidia’s GPUs and compilers to improve LLM speed and usability by a significant margin. The TensorRT-based platform minimizes coding requirements and offloads performance optimizations to the software. The company’s TensorRT deep learning compiler and other techniques allow the LLMs to run across multiple GPUs without any code changes. Both upgrades extend Nvidia’s generative AI work from earlier this year. Since then, it has rapidly scaled up its operations, including working with Hugging Face to develop Training Cluster as a Service, a tool for streamlining enterprise LLM creation.
