Cohere Rolls Out Enterprise Generative AI Chatbot API

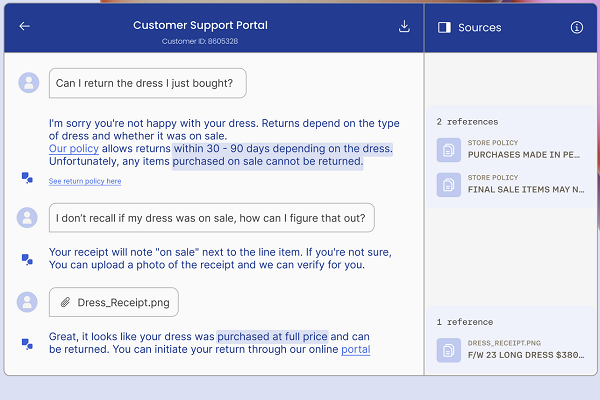

Enterprise generative AI startup Cohere has debuted a chatbot API in beta with retrieval-augmented generation (RAG), employing Cohere’s Command large language model (LLM). The API will enable developers to integrate conversational capabilities into their organization’s services. The startup has also unveiled a free demo of its Coral chatbot in its new Coral Showcase portal.

Coral Chat

Cohere’s development has often focused on melding its generative AI and natural language processing tools with customer service platforms, but Coral is aimed at assisting a company’s workers with completing their tasks. Companies are able to fine-tune the base version of Coral through training on their databases to suit their needs and protect proprietary information. The result is a chatbot capable of conducting research and analysis tasks for employees, able to both understand queries and provide explanations using informal language.

“Whether you’re building a knowledge assistant or customer support system, the Chat API makes creating reliable conversational AI products simpler,” Cohere explained in a blog post. “As with other Cohere endpoints, developers have substantial control over the output, such as choosing the model, adjusting the temperature, and utilizing the chat history.”
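As a rough illustration of the controls named in that quote, the sketch below keeps a model choice, a temperature, and a running chat history together and assembles them into a request payload. It is not Cohere's SDK: the `ChatSession` class, its field names, the role labels, and the default values are all hypothetical, chosen only to show how the three settings might travel with each message.

```python
from dataclasses import dataclass, field


@dataclass
class ChatSession:
    """Hypothetical holder for the settings the quote mentions (not Cohere's SDK)."""
    model: str = "command"       # assumed model identifier, for illustration only
    temperature: float = 0.3     # assumed default; lower means less random output
    chat_history: list = field(default_factory=list)

    def build_request(self, message: str) -> dict:
        """Assemble a payload dict; the key names are assumptions."""
        if self.temperature < 0:
            raise ValueError("temperature must be non-negative")
        return {
            "model": self.model,
            "temperature": self.temperature,
            # Copy the history so later turns do not mutate an already-built request.
            "chat_history": list(self.chat_history),
            "message": message,
        }

    def record_turn(self, message: str, reply: str) -> None:
        """Store one user/chatbot exchange so the next request carries context."""
        self.chat_history.append({"role": "USER", "message": message})
        self.chat_history.append({"role": "CHATBOT", "message": reply})
```

In use, a caller would send the payload from `build_request` to whatever chat endpoint it targets, then pass the reply to `record_turn` so the following request includes the earlier exchange.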

Cohere has suggested Coral will be useful for tasks such as gathering product information for a customer service agent or producing a market analysis and financial projection. Coral initially came out in July; the new Chat API lets brands build it into their own internal or external-facing apps by integrating Cohere’s flagship conversational model, Command, directly into apps and bots. The aim is a smooth interactive experience akin to chatting with a human. The Coral Showcase allows users to test the chatbot before buying access to the API. Cohere specifically highlighted the API’s support for RAG techniques.

“Retrieval-augmented generation, or RAG, can help generative AI models build trust with users. RAG systems improve the relevance and accuracy of generative AI responses by incorporating information from data sources that were not part of pre-trained models,” Cohere wrote. “The Chat API is RAG-enabled, meaning developers can inform model generations with information from external data sources. This represents a leap forward for generative AI accuracy, verifiability, and timeliness.”
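The retrieve-then-generate pattern Cohere describes can be sketched in a few lines. The example below is a toy under stated assumptions, not Cohere's implementation: the keyword-overlap scoring, the `retrieve` and `build_grounded_prompt` helpers, and the sample documents are all invented for illustration, and the final model call is left out. A production system would typically swap the overlap score for embedding-based search.

```python
import re


def tokenize(text):
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share (toy scoring)."""
    query_tokens = tokenize(query)
    scored = []
    for doc in documents:
        overlap = len(query_tokens & tokenize(doc["text"]))
        if overlap:  # skip documents with no words in common
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(query, retrieved):
    """Put retrieved passages ahead of the question so answers can cite them."""
    sources = "\n".join(f"[{d['id']}] {d['text']}" for d in retrieved)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{sources}\n"
        f"Question: {query}"
    )


# Hypothetical external data the base model was never trained on.
DOCS = [
    {"id": "policy-1", "text": "Refunds are issued within 14 days of purchase."},
    {"id": "policy-2", "text": "Support is available on weekdays."},
    {"id": "faq-7", "text": "Shipping takes five business days."},
]
```

Calling `retrieve` with a question and passing the result to `build_grounded_prompt` yields a prompt whose sources carry ids, which is what makes an answer checkable against the external data.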

Cohere’s new offering follows a $270 million raise in June. The company has grown steadily since former Google Brain researchers launched it from stealth in 2021, later partnering with Google Cloud, whose AI and machine learning hardware and infrastructure powered the training of Cohere’s LLMs. Cohere also partners with conversational AI provider LivePerson, providing LLMs for LivePerson’s Conversational Cloud platform. LivePerson is also keen to employ Coral.
