Amazon Bedrock Generative AI Service Widens Access and LLM Options

Amazon Web Services (AWS) has released its generative AI app-building platform Amazon Bedrock to the general public and added more large language models and other new features. Amazon Titan Embeddings and Meta’s Llama 2 LLMs will join the foundation models (FMs) in the Bedrock model marketplace alongside ones built by Cohere, Anthropic, AI21, and Stability AI. The Amazon CodeWhisperer AI code-writing assistant has also gained a new enterprise subscription tier that offers more resources and the ability to incorporate an organization’s codebase.

Bedrock Building

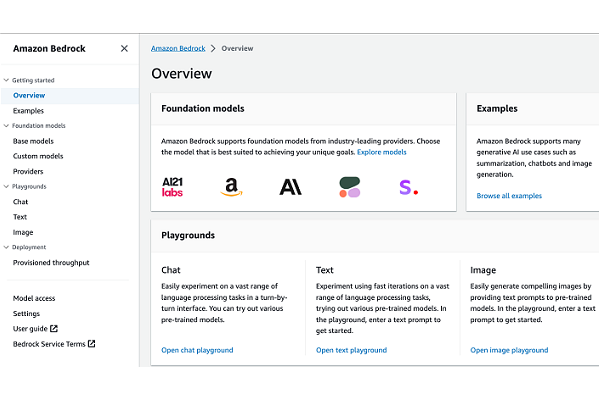

Amazon introduced Bedrock to provide pre-built integrations with leading generative AI models, enabling companies to build apps like chatbots, image generators, and text analytics tools on these complex AI systems through simple APIs, without managing infrastructure. Bedrock is now generally available after a long period of limited access to a select list of companies.

On the analytics front, AWS launched a generative authoring feature for its Amazon QuickSight data visualization tool. The tool lets users generate visualizations and calculations through conversational commands and queries, automating complex manual workflows. This extends the natural language understanding that AWS gave to the QuickSight Q business forecasts late last year. AWS also revealed a new level for its Amazon CodeWhisperer AI coding assistant aimed at enterprise customers. Those paying for the Amazon CodeWhisperer Enterprise Tier will get more resources and be able to customize the assistant by integrating the organization’s private codebase and internal APIs.

Since Bedrock’s limited-access launch, AWS has been opening up its model marketplace and adding conversational agents and other options. General availability comes with the news that Meta’s Llama 2 LLM will be available, specifically the 13 billion and 70 billion parameter models. Bedrock is also adding Amazon Titan Embeddings, which converts text into machine-readable vectors. Bedrock’s flexibility makes it suitable for diverse use cases like search, content creation, and drug discovery.

“Amazon Bedrock’s comprehensive capabilities help you experiment with a variety of top FMs, customize them privately with your data using techniques such as fine-tuning and retrieval-augmented generation (RAG), and create managed agents that perform complex business tasks, all without writing any code,” AWS principal developer advocate for generative AI Antje Barth explained in a blog post. “Since Amazon Bedrock is serverless, you don’t have to manage any infrastructure, and you can securely integrate and deploy generative AI capabilities into your applications using the AWS services you are already familiar with.”
