Amazon Stakes Generative AI Claim With AWS Bedrock Service for Native and Third-Party LLMs

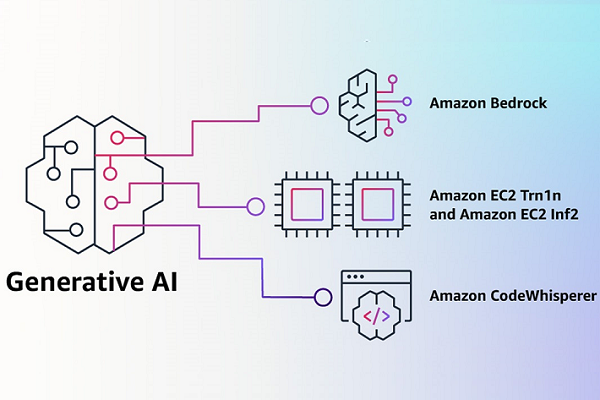

Amazon has laid out its ambitions for generative AI with the launch of Bedrock from Amazon Web Services (AWS), which enables developers to design and build apps with pre-trained foundation models (FMs) provided by generative AI startups like AI21, Anthropic, and Stability AI. Bedrock also offers exclusive access to the new Titan models created by AWS. The news accompanies wider access to the CodeWhisperer AI programming assistant and general availability of Amazon EC2 Inf2 instances, powered by the AWS Inferentia2 chip, which reduce the cost of running generative AI workloads.

Generative Bedrock

AWS Bedrock sets up a system for developers interested in creating and scaling generative AI apps. The idea is that they can assemble their program from existing models to fit their needs while still accessing the suite of AWS tools already available. AWS clients can integrate the models through an API. The selection includes the new Jurassic-2 models built by AI21, Anthropic’s new Claude model, and Stability AI’s text-to-image services, including Stable Diffusion. The announcement is a kind of culmination of previously revealed AWS partnerships. AWS became Stability AI’s preferred cloud provider in November and began hosting Hugging Face’s software in March. The interest has also extended toward younger generative AI startups with the launch of a new generative AI accelerator.

The model options also include the new AWS-trained Titan FMs. There are two initial Titan variants. The first is a model for generating written content and answering queries by pulling information from databases. The other is an “embedding model,” which converts text into numerical representations that capture its meaning. That’s useful for search, and it is how Amazon’s own product search operates, according to the company. Any Bedrock model can be further customized as needed with a customer’s own data.
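To illustrate why embeddings help with search, here is a minimal Python sketch of ranking items by cosine similarity between vectors. It does not call Bedrock or Titan: the three-dimensional vectors, the `CATALOG` entries, and the `search` helper are all hypothetical stand-ins, chosen by hand so that similar items point in similar directions. A real embedding model would produce much longer vectors.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-made "embeddings" (assumption: not real model output).
CATALOG = {
    "running shoes": [0.9, 0.1, 0.0],
    "trail sneakers": [0.8, 0.2, 0.1],
    "coffee maker": [0.0, 0.1, 0.9],
}


def search(query_vector, catalog, top_k=2):
    """Rank catalog items by similarity to the query vector, best first."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in catalog.items()
    ]
    scored.sort(reverse=True)
    return [name for _, name in scored[:top_k]]


# Pretend this vector is the embedding of a query such as "jogging footwear".
query = [1.0, 0.05, 0.0]
results = search(query, CATALOG)
print(results)
```

The footwear items rank above the coffee maker even though the query shares no words with them, which is the property that makes embeddings useful for search.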

“Bedrock is the easiest way for customers to build and scale generative AI-based applications using FMs, democratizing access for all builders. Bedrock will offer the ability to access a range of powerful FMs for text and images—including Amazon’s Titan FMs, which consist of two new LLMs we’re also announcing today—through a scalable, reliable, and secure AWS managed service,” AWS data and machine learning vice president Swami Sivasubramanian explained in a blog post. “With Bedrock’s serverless experience, customers can easily find the right model for what they’re trying to get done, get started quickly, privately customize FMs with their own data, and easily integrate and deploy them into their applications using the AWS tools and capabilities they are familiar with (including integrations with Amazon SageMaker ML features like Experiments to test different models and Pipelines to manage their FMs at scale) without having to manage any infrastructure.”

Bedrock is currently in a limited preview, but Amazon plans to widen availability soon. Amazon has left many aspects unannounced for now, including how it has arranged revenue sharing and other licensing deals with the AI model providers. Presumably, those details will emerge as the program rolls out.

CodeWhisperer Shoutout

Another AWS generative AI project, CodeWhisperer, is moving out of preview and into general availability, and Amazon is making it free for developers. CodeWhisperer serves as a digital pair programmer, similar to GitHub’s Copilot, which the Microsoft-owned company powers with OpenAI’s LLMs. CodeWhisperer has added ten new coding languages to the three it already understood, following training on many billions of lines of code, including both Amazon’s own code and open-source code and documentation. Amazon embedded CodeWhisperer in the AWS IDE Toolkit, but the AI isn’t static. CodeWhisperer will learn from the user’s coding and comments, adapting to individual styles and contexts over time. It can also flag and even remove any functions that may be under a software license, potentially helping avoid lawsuits.

“Developers aren’t truly going to be more productive if code suggested by their generative AI tool contains hidden security vulnerabilities or fails to handle open source responsibly. CodeWhisperer is the only AI coding companion with built-in security scanning (powered by automated reasoning) for finding and suggesting remediations for hard-to-detect vulnerabilities, such as those in the top ten Open Worldwide Application Security Project (OWASP), those that don’t meet crypto library best practices, and others,” Sivasubramanian wrote. “Building powerful applications like CodeWhisperer is transformative for developers and all our customers. We have a lot more coming, and we are excited about what you will build with generative AI on AWS. Our mission is to make it possible for developers of all skill levels and for organizations of all sizes to innovate using generative AI. This is just the beginning of what we believe will be the next wave of ML powering new possibilities for you.”
