Adobe Expands Generative AI Toolkit Firefly to Video

Adobe has augmented the Firefly generative AI tools introduced last month with additional video editing and manipulation options. Firefly is still in beta, but the wider release will include the ability to add text, adjust color, find and insert music, and get AI assistance with other advanced editing features.

Firefly Video

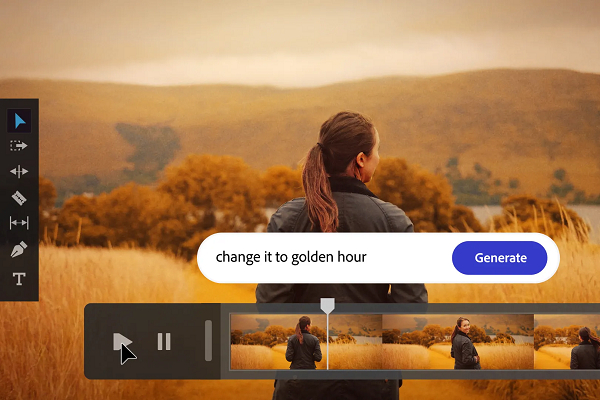

Fiddling with precise color and lighting settings is a necessary, if sometimes tedious, aspect of video editing. The new Firefly features will let users describe in natural language what they want a scene to look like, a kind of text-to-filter tool, and even limit the change to one part of the scene, as demonstrated in the clip above. Users can also overlay text on a video by describing the font and style in a text box for the AI to interpret and place on screen.

Adobe is also pitching Firefly as a more comprehensive filmmaking assistant, helping teach users how to use Adobe's more advanced editing tools and organizing a film's pre-production. The AI can analyze a script to draft prospective storyboards and pre-visualizations, and it can gather potential b-roll for discussions and interviews, finding footage that matches what the person on-screen is saying and overlaying it in the right spot. For instance, the AI might pull clips of people constructing the Empire State Building to accompany someone describing how it was built. Firefly can do the same for background music and sound effects, and it can go a step further by generating new audio, based on legally approved databases, to match what's happening on-screen.

“We are truly in the golden age of video — short-form video is ubiquitous in news, social media, and entertainment. And, insatiable demand, multiplying channels, and globally distributed teams make it extra challenging to scale the production of high-quality creative work efficiently,” explained Adobe senior vice president of digital media Ashley Still in a blog post. “This is why we’re excited to invent and innovate with the video and audio community to make it easier and faster for you to transform your vision into reality.”

Generative Film

Generative AI for video production is not as common as it is for still images, but new options are appearing at an accelerating rate. Meta recently introduced the Segment Anything Model (SAM), which can identify and extract the component objects in both images and videos without additional training. Adobe's video tools also resemble Canva's recent approach of providing extensive generative AI assistance for creative presentations and campaigns. And as deepfake videos go mainstream, it's easy to see how additional generative AI tools will accompany their integration into entertainment and other verticals.

“With Firefly as a creative co-pilot, you can supercharge your discovery and ideation processes and cut post-production time from days to minutes. And with generative AI integrated directly into your workflows, these powerful new capabilities will be right at your fingertips,” Still wrote. “Imagine the power to instantly change the time of day of a video, automatically annotate and find relevant b-roll, or create limitless variations of clips — all as a starting point of your creativity.”