Microsoft Debuts Security Copilot Combining Generative AI and Cybersecurity

Microsoft has unveiled Security Copilot, which looks to enhance cybersecurity with generative AI. The new service continues Microsoft’s Copilot branding of features powered by large language models, this time with the goal of helping people understand current and potential threats to their digital safety.

Security Copilot

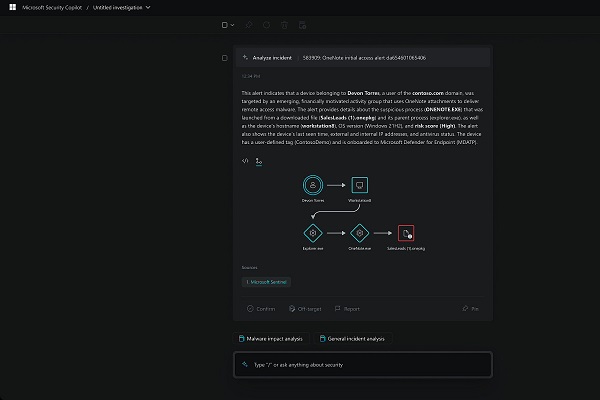

Security Copilot acts as an assistant tasked with collecting and analyzing data on cybersecurity breaches. While the idea of a tool for understanding and preparing for such incidents isn’t new, Security Copilot uses OpenAI’s GPT-4 LLM to better translate raw data into useful information. The new tool can streamline security briefings by summarizing incidents and explaining where malicious actions may have originated. The AI can then recommend ways to mitigate future issues and help prioritize the next steps to take. How Microsoft integrated GPT-4 into the program isn’t clear, but the customized model is trained specifically for security.

“When Security Copilot receives a prompt from a security professional, it uses the full power of the security-specific model to deploy skills and queries that maximize the value of the latest large language model capabilities. And this is unique to a security use-case. Our cyber-trained model adds a learning system to create and tune new skills,” Microsoft corporate vice president of security, compliance, identity, and management Vasu Jakkal explained in a blog post. “Security Copilot then can help catch what other approaches might miss and augment an analyst’s work. In a typical incident, this boost translates into gains in the quality of detection, speed of response and ability to strengthen security posture.”

Generating Copilots

The latest Copilot follows the release of Microsoft 365 Copilot for the tech giant’s productivity software, the Copilot synthetic text generator for Dynamics 365, and the GitHub Copilot coding assistant from Microsoft-owned GitHub. All of the Copilots pitch generative AI as a digital assistant for creating and understanding any and all forms of text using natural language. Extending a tool that summarizes potentially complex essays and completes code for new websites to a role explaining cybersecurity reports isn’t a huge leap. Microsoft did admit that Security Copilot, like its other generative AI projects, is capable of making mistakes, but it is presumably confident the tool won’t cause the kind of issues that led OpenAI to take down ChatGPT for a while last week.

