Google Sends Imagen Text-to-Image Generator to AI Test Kitchen

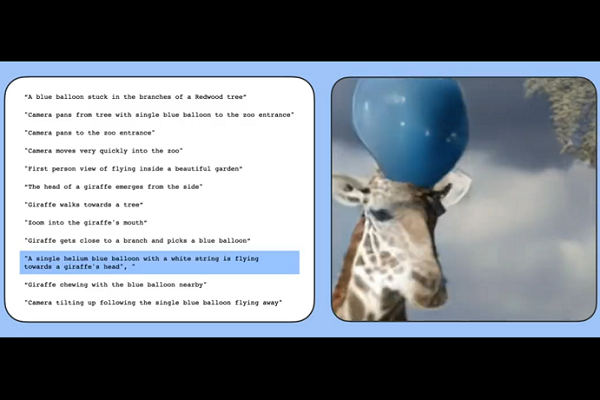

Google will soon add text-to-image/video AI tool Imagen to its AI Test Kitchen ahead of its plans for a wider release for the synthetic media producer. A preview of Imagen’s new home was part of a larger showcase by Google of its generative AI work this week, including demonstrating a combination of Imagen’s video enhancement combined with Google’s other text-to-video project Phenaki and its ability to create longer, more complex videos like the one seen above.

Imagen Kitchens

Google first unveiled Imagen about a month ago, but the inclusion in the AI Test Kitchen will be the first time it is available publicly, albeit a limited number of the public. Imagen can interpolate a text prompt to make an image or video in whatever style the user specifies. The multi-step process uses seven diffusion models to first produce a low-resolution video draft before upgrading the resolution repeatedly. In this case, Imagen enhances a video generated on a different text-to-video model called Phenaki, which is designed to process extended, more detailed text prompts into longer videos than Imagen. Meanwhile, for a text-to-3D rendering, Google shared how the new DreamFusion tool augments Imagen with its NeRF 3D functions.

Once accessible on the AI Test Kitchen, Imagen will allow users to digitally create cityscapes with AI patterned after a chosen theme through the City Dreamer platform, while the upcoming Wobble program will center on leveraging AI to design cute, dancing monsters by describing them in text. All users will be able to offer feedback to Google as they shape Imagen’s final form.

“AI-powered generative models have the potential to unlock creativity, helping people across cultures express themselves using video, imagery, and design in ways that they previously could not,” Google Research senior vice president Jeff Dean explained in a blog post. “Our researchers have been hard at work developing models that lead the field in terms of quality, generating images that human raters prefer over other models. We recently shared important breakthroughs, applying our diffusion model to video sequences and generating long coherent videos for a sequence of text prompts. We can combine these techniques to produce video — for the first time, today we’re sharing AI-generated super-resolution video.”

Text AI

Despite the rapid growth of the visual synthetic media market, Google isn’t ignoring the wordier side of conversational AI. The tech giant revealed its begun early-stage experiments with a text generator called Wordcraft built on its LaMDA dialog system. A group of writers has been playing with Wordcraft to compose new stories that you can read through the Wordcraft Writers Workshop website. Google is also working on a universal speech translator through the new 1,000 Languages Initiative. The end goal of the project is an AI model that can speak and translate among the 1,000 most spoken languages. To start it off, Google has added the Universal Speech Model (USM), trained on more than 400 languages, to its inventory. As Google continues collecting language data, it is taking steps to use what it has already accessible, such as adding nine new African languages to the voice typing on for Gboard.

“Language is fundamental to how people communicate and make sense of the world. So it’s no surprise it’s also the most natural way people engage with technology. But more than 7,000 languages are spoken around the world, and only a few are well represented online today,” Dean wrote. “That means traditional approaches to training language models on text from the web fail to capture the diversity of how we communicate globally. This has historically been an obstacle in the pursuit of our mission to make the world’s information universally accessible and useful.”

Follow @voicebotaiFollow @erichschwartz

Google Debuts Imagen Video AI Text-to-Video Generator Rival for Meta’s Make-A-Video

Google Demos New Conversational AI Model and Opens AI Test Kitchen

Google Assistant Adds Parental Controls and New Voices for Kids