Meta Unveils AI Model Translating 200 Languages

Meta has released a new AI model capable of high-quality translation among 200 languages. Developed under Meta's No Language Left Behind (NLLB) project, the NLLB-200 model scores an average of 44% higher than previous state-of-the-art translation systems, according to Meta, as measured on FLORES-200, a new evaluation dataset the company released at the same time.

200 Tongues

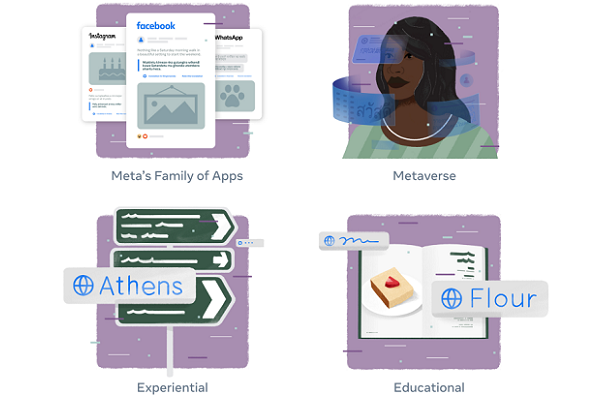

Meta plans to embed NLLB-200 across its products. The company estimates the model will handle more than 25 billion translations a day on Facebook, Instagram, and other Meta platforms. Meta used FLORES-200 to evaluate the model in each of its languages and across 40,000 different translation directions. For some of the languages with almost no digital presence, NLLB-200's improvement even surpassed 70%. Meta has open-sourced the model, the evaluation dataset, and the related training code so other researchers can extend the work. It is also offering up to $200,000 in grants to those with ideas on how best to use NLLB-200 for scientific and philanthropic projects supporting the UN Sustainable Development Goals.

“It’s impressive how much AI is improving all of our services. We just open-sourced an AI model we built that can translate across 200 different languages — many of which aren’t supported by current translation systems. We call this project No Language Left Behind, and the AI modeling techniques we used are helping make high quality translations for languages spoken by billions of people around the world,” Meta CEO Mark Zuckerberg wrote in a Facebook post about the project. “Communicating across languages is one superpower that AI provides, but as we keep advancing our AI work it’s improving everything we do — from showing the most interesting content on Facebook and Instagram, to recommending more relevant ads, to keeping our services safe for everyone.”

Meta Models

Meta joins plenty of other researchers and tech companies eager to build and show off AI datasets and models that push linguistic boundaries. For instance, Amazon introduced its own 51-language dataset, aptly named MASSIVE, earlier this year to encourage multilingual voice AI development, particularly for Alexa. Similar efforts led the nonprofit AI consortium MLCommons to publish both The People's Speech Dataset, with more than 30,000 hours of supervised conversational data, and the Multilingual Spoken Words Corpus (MSWC).

On the speech front, the Mozilla Foundation launched its Common Voice project a few years ago to support tech developers without access to proprietary data. The Common Voice database boasts more than 9,000 hours of recordings in 60 different languages and claims to be the world's largest public domain voice dataset. Nvidia made a $1.5 million investment in Mozilla Common Voice and started working with Mozilla on voice AI and speech recognition. The project's global focus also led to a $3.4 million investment this spring aimed at creating a similar resource for Kiswahili, also known as Swahili.

The new model continues Meta's broader AI work, including the two giant conversational AI datasets it released last year to encourage AI assistant research and development. One of those datasets focuses on accelerating multilingual voice assistant development, while the other is for training an AI using only a tenth of the standard amount of raw data. The scientists behind NLLB-200 noted that the model could widen access to digital technology on a global scale, opening doors to those who don't speak the dominant online languages like English, Mandarin, Spanish, and Arabic. That applies both to existing platforms and to the still largely nascent virtual worlds of the metaverse.

“Native speakers of these very widely spoken languages may lose sight of how meaningful it is to read something in your own mother tongue. We believe NLLB will help preserve language as it was intended to be shared rather than always requiring an intermediary language that often gets the sentiment/content wrong,” the researchers explained in their publication. “It can also help advance other NLP tasks, beyond translation. This could include building assistants that work well in languages such as Javanese and Uzbek or creating systems to take Bollywood movies and add accurate subtitles in Swahili or Oromo. As the metaverse begins to take shape, the ability to build technologies that work well in hundreds or even thousands of languages will truly help to democratize access to new, immersive experiences in virtual worlds.”
