University of Waterloo Study Found Consumers View Siri as Disingenuous and Cunning and Alexa as Genuine and Caring

A University of Waterloo study had 10 men and 10 women interact with Amazon Alexa, Apple Siri, and Google Assistant and then asked the participants questions about the voice assistant personalities. Amazon, Apple, and Google have each gone to great lengths to create personas for their assistants to make them more relatable for consumers. Edward Lank, a professor in Waterloo’s School of Computer Science, suggests these efforts are paying off in at least one way:

People are anthropomorphizing these conversation agents which could result in them revealing information to the companies behind these agents that they otherwise wouldn’t.

He followed this up by suggesting voice assistants are “data gathering tools that companies are using to sell us stuff.” The details of the study findings won’t be revealed until April at the ACM CHI Conference on Human Factors in Computing Systems, according to an article in Waterloo University News. It will be interesting to learn just what types of information are revealed that wouldn’t be otherwise and how the researchers know the information wouldn’t otherwise be shared. But some data are available today.

Siri is Cunning, Alexa is Caring

It is not clear how the terms cunning, caring, disingenuous, and genuine came to be selected by consumers, but the terms draw a sharp contrast. Lank told Waterloo University News that Siri was viewed as disingenuous and cunning compared to Alexa’s traits of genuine and caring. If these sentiments are replicated in a large-scale trial, that should be cause for concern in Cupertino. It might just be a reason to celebrate in Seattle. The sentiment for Google Assistant was not shared, but “the individuality of Google, and Siri especially, [were] more defined and pronounced,” than Alexa, which was viewed as “neutral and ordinary.”

It is important to note that consumers tend to assign persona characteristics to synthetic voices regardless of whether a persona was designed intentionally. A Voicebot study conducted with Voices.com and Pulse Labs found that synthetic voices used to read news items were variously assigned traits such as monotonous, robotic, calm, masculine, dull, and pleasant. This is part of human nature. Persona development is an attempt by the voice assistant providers to shape sentiment in a way that achieves their goals.

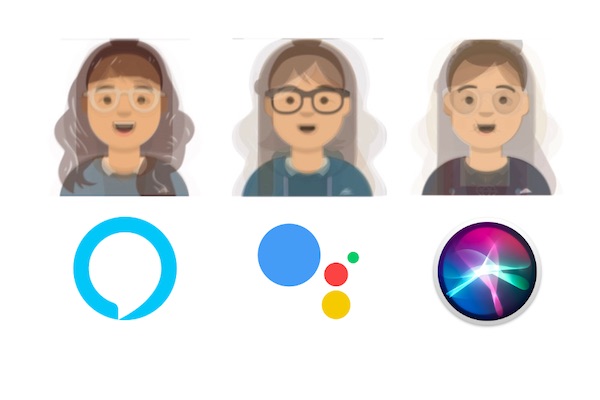

Avatars for Alexa, Google Assistant, and Siri

The study also had the participants describe the voice assistants physically and create an avatar representing what they believed each assistant would look like. Granted, the conclusion of the study was that humans have a tendency to anthropomorphize voice assistants, and the researchers asked participants to do just that. This exercise may have influenced the final conclusions, which revealed contradictory viewpoints. The same assistant was variously said to have long or short hair and casual or business formal clothes in either dark or neutral colors. That isn’t exactly a consensus emerging.

One other point of note. Many industry observers and insiders claimed that Google had made a big mistake because it chose the name Assistant and not something more human-sounding like Alexa or Siri. However, Google actually defined a persona for its generically named Assistant, and that seems to have delivered sufficient impact for consumers to anthropomorphize it. The move was also sufficient to get researchers to criticize the Assistant for trying to be so humanlike that consumers might share more information than they would otherwise.
