How to Build a Samsung Bixby Capsule

The next generation of Samsung’s assistant, Bixby, was revealed in early November at Samsung’s annual developer conference (SDC). Under the covers, the new Bixby 2 is based on Viv Labs technology. For those not familiar with Viv, its founders created the original Siri before it was bought by Apple. After leaving Apple, they founded Viv Labs to build the next-generation AI and assistant. Samsung bought Viv in 2016, and the new Bixby is the result of that acquisition.

Next generation is an apt description of Bixby 2. The thinking and design behind it represent a new generation of conversational platform and capabilities. This article will veer into technical detail and code, but the intent is to stay high level enough that designers and others interested in voice who are not developers will get an idea of how a Bixby capsule (the equivalent of an Alexa Skill or Google Action) is built.

Bixby Compared to Alexa and Google Assistant

Throughout this article, several comparisons and analogies of Bixby to Amazon Alexa and Google Assistant will be made. These comparisons will be used to emphasize and highlight the commonalities as well as the differences.

One of the primary ways Bixby differs from Alexa and Google is its development paradigm. Bixby is heavily model-driven and declarative: you will spend much more time designing and developing models than writing code. This contrasts sharply with Alexa, which is primarily code-driven and imperative. Google sits somewhere in the middle.

To understand Bixby, we are going to build a sample Bixby capsule. Our capsule will be simple but useful: it will allow one to look up commuter train schedules. I live in the San Francisco Bay Area and our local train system is called BART. So, let’s call this the BART Commuter capsule, or BCC for short. BCC will allow you to ask simple questions like:

“When is the next BART from [Departure Station] to [Destination Station]?”, e.g. “When is the next BART from Embarcadero to Walnut Creek?”

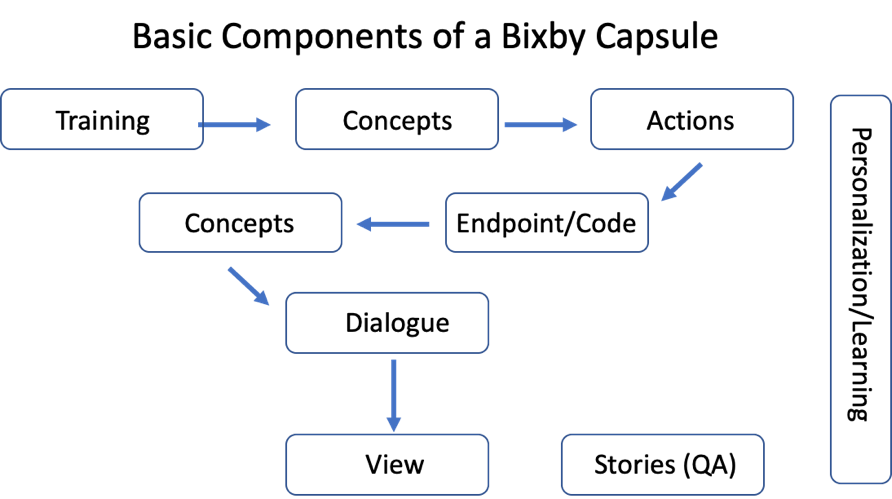

Let’s see how we create this in Bixby. The diagram below shows the fundamental components of a Bixby capsule.

Modeling Concepts in Samsung Bixby

The first step in building a capsule is to model your concepts. A concept is simply an object: it may represent user input (the departure and destination stations in our capsule) or output (the train schedule). A concept can be a primitive (a string, an integer, etc.) or a structured concept that contains other concepts. Here are the concepts for BCC:

Input Concepts

- Station: List of train stations in the BART system

- SearchDepartureStation: The departure station the user searched for

- SearchArrivalStation: The arrival station the user searched for

Output Concepts

- TrainSchedule: The schedules of train trips running between the SearchDepartureStation and the SearchArrivalStation

- Trip: A child of TrainSchedule – a train trip. There are one or more Trip(s) in a TrainSchedule

- TripSteps: A child of Trip – the steps in a trip. If the train is direct, there is one step, transfers add additional steps

- Speech: The output speech

Let’s look at how we model these in Bixby. Here is a snippet of the Station concept:

    enum (Station) {
      description (BART Station Names)
      symbol(12th St. Oakland City Center)
      symbol(16th St. Mission)
      symbol(19th St. Oakland)
      symbol(24th St. Mission)
      symbol(Antioch)
      symbol(Ashby)
      symbol(Balboa Park)
      . . .

Our Station concept is modeled as an enumeration, i.e. a fixed list of possible values that represents all of the BART stations. The SearchDepartureStation and SearchArrivalStation concepts are modeled identically. Here is the model for the SearchDepartureStation concept:

    enum (SearchDepartureStation) {
      description (Train Departure Station)
      role-of (Station)
    }

The SearchDepartureStation is an enumeration like the Station concept, but instead of repeating the stations, it is a “role of” a Station. In our example, there are two roles a station plays: a departure role and an arrival role. The model for SearchArrivalStation is the same as SearchDepartureStation save for the name/description.

The main output concept is TrainSchedule. It’s modeled like this:

    structure (TrainSchedule) {
      description (Train Schedule)
      property (searchDepartureStation) {
        type (SearchDepartureStation)
        min (Required)
        max (One)
      }
      property (searchArrivalStation) {
        type (SearchArrivalStation)
        min (Required)
        max (One)
      }
      property (trip) {
        type (Trip)
        min (Required) max (Many)
      }
      property(speech) {
        type (Speech)
        min (Required) max (One)
      }
    }

The TrainSchedule concept is a structure, i.e. it contains other concepts. TrainSchedule is composed of the search stations, Trip and Speech concepts. Note the notation around cardinality: min and max. Min can be Required or Optional, and max can be One or Many (our concept has a max of one Speech concept but many Trip concepts).

A Trip is a structure containing TripSteps:

    structure (Trip) {
      description (A Train Trip)
      property (tripSteps) {
        type (TripSteps)
        min (Required)
        max (Many)
      }
    }

Finally, we have two simple text concepts:

    text (TripSteps) {
      description (Steps in a train Journey)
    }

    text (Speech) {
      description (Text to speak)
    }

Keen eyes may ask why TripSteps does not contain two Stations, since each TripStep represents a train ride. This is entirely possible and probably more elegant, but it adds complexity, so for the sake of simplicity plain text will be used for TripSteps. Speech will be used to hold the speech output (versus the visual output driven by Trips and TripSteps).

The equivalents of the simple input concepts are slots in Alexa and entities in Google. Neither platform has an equivalent of a structured concept; you would create such an object in code, not declaratively.
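For readers who think in code, here is a rough JavaScript picture of what the TrainSchedule structure and its min/max rules amount to. This is only an illustrative sketch: `trainScheduleRules`, `checkCardinality` and the sample values are hypothetical names and data invented for this example, not part of the Bixby platform, which enforces these rules for you from the model.

```javascript
// Hypothetical mirror of the min/max declarations in the TrainSchedule model.
const trainScheduleRules = {
  searchDepartureStation: { min: 'Required', max: 'One' },
  searchArrivalStation: { min: 'Required', max: 'One' },
  trip: { min: 'Required', max: 'Many' },
  speech: { min: 'Required', max: 'One' },
};

// Returns a list of violations; an empty list means the object conforms.
function checkCardinality(obj, rules) {
  const problems = [];
  for (const [name, rule] of Object.entries(rules)) {
    const value = obj[name];
    // Normalize "missing", "single value" and "list of values" to an array.
    const values =
      value === undefined || value === null
        ? []
        : Array.isArray(value)
        ? value
        : [value];
    if (rule.min === 'Required' && values.length === 0) {
      problems.push(`${name} is required`);
    }
    if (rule.max === 'One' && values.length > 1) {
      problems.push(`${name} allows at most one value`);
    }
  }
  return problems;
}

// Made-up sample data in the shape of the TrainSchedule concept.
const schedule = {
  searchDepartureStation: 'Embarcadero',
  searchArrivalStation: 'Walnut Creek',
  trip: [{ tripSteps: ['Embarcadero to Walnut Creek (direct)'] }],
  speech: 'Here is the next train from Embarcadero to Walnut Creek.',
};

console.log(checkCardinality(schedule, trainScheduleRules));
```

The point of the comparison is that in Bixby the first block (the rules) is all you write; the checking logic is the platform's job.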

Actions and Endpoints in Samsung Bixby

We are now finished modeling our concepts, so we have the necessary entities for our solution. Notice that no traditional code has been written: we have only used a declarative markup language (Bixby Language, or bxb) to describe our concepts.

Now that we have our concepts, how do we tell them what to do? We use Actions. Actions are verbs – they do something with the input concept and populate an output concept. Here is the model for our SearchForTrains action:

    action (SearchForTrains) {
      type (Search)
      description (Search for Trains)
      collect {
        input (searchDepartureStation) {
          type (SearchDepartureStation)
          min (Required) max (One)
        }
        input (searchArrivalStation) {
          type (SearchArrivalStation)
          min (Required) max (One)
        }
      }
      output (TrainSchedule)
    }

We can see that our action collects user input, i.e. the departure and arrival station concepts. It then outputs a TrainSchedule concept.

N.B. The closest equivalent of an action for both Alexa and Google is an intent.

We modeled an action above but have no behavior. To create behavior, we jump into writing code. But before we write code, we create an Endpoint which ties the SearchForTrains action with our code. Here is the BCC endpoint:

    endpoints {
      authorization {
        none
      }
      action-endpoints {
        action-endpoint (SearchForTrains) {
          accepted-inputs (searchDepartureStation, searchArrivalStation)
          local-endpoint (SearchForTrains.js)
        }
      }
    }

In our endpoint, we tie the SearchForTrains action to our local Node.js code, SearchForTrains.js, and define the inputs as our departure and arrival concepts. Note that authorization is set to none above; it is used for security (e.g. OAuth) when one is using remote endpoints.

N.B. The equivalent of a Bixby endpoint is an endpoint in Alexa and a fulfillment in Google.

In order to get a BART schedule, we need to call a BART API. All of this code will be in the SearchForTrains.js file. This js file is standard Node.js code with a few additional platform-specific libraries.

The code is not included here but is available at the GitHub link at the end of the article. Suffice it to say, it is quite simple:

- SearchDepartureStation and SearchArrivalStation are passed in as function parameters

- A call to the BART API is made (programmer note: Bixby handles making this synchronous, i.e. there is no need for promises or callbacks)

- Data returned from the BART API is mapped into the TripSteps, Trip, TrainSchedule, and Speech concepts. There is a moderate amount of logic to create TripSteps text and the Speech Text.

N.B. The equivalent of this code in Alexa is a Lambda function and a webhook for Google.
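To give a feel for the mapping step described above, here is a minimal sketch in plain JavaScript. It is not the code from the repository: `buildTrainSchedule`, the `itineraries` input shape and the sample times are all hypothetical, and the real BART API response is structured differently. The HTTP call and the wiring into the Bixby endpoint are deliberately left out.

```javascript
// Maps already-fetched schedule data into the shape of our output concepts.
// `itineraries` is a hypothetical, simplified stand-in for the API response:
// [{ legs: [{ from, to, departs, arrives }, ...] }, ...]
function buildTrainSchedule(departure, arrival, itineraries) {
  // One Trip per itinerary; one TripSteps text per leg (transfers add legs).
  const trip = itineraries.map((itinerary) => ({
    tripSteps: itinerary.legs.map(
      (leg) => `${leg.departs} ${leg.from} to ${leg.to}, arriving ${leg.arrives}`
    ),
  }));

  // The Speech concept: a short sentence about the first trip only.
  // A real capsule would need to decide how to handle "no trains found",
  // since the TrainSchedule model requires at least one Trip.
  const first = itineraries[0];
  const speech = first
    ? `The next train from ${departure} to ${arrival} leaves at ${first.legs[0].departs}.`
    : `I could not find any trains from ${departure} to ${arrival}.`;

  return {
    searchDepartureStation: departure,
    searchArrivalStation: arrival,
    trip,
    speech,
  };
}

// Made-up sample input; the times are invented for illustration.
const sampleItineraries = [
  {
    legs: [
      { from: 'Embarcadero', to: 'Walnut Creek', departs: '5:02 PM', arrives: '5:37 PM' },
    ],
  },
];

console.log(buildTrainSchedule('Embarcadero', 'Walnut Creek', sampleItineraries));
```

Note how the returned object lines up property for property with the TrainSchedule structure modeled earlier; that correspondence is what lets Bixby treat the function's return value as the action's output concept.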

Modeling UI and Speech Output in Samsung Bixby

Now that we have our output, i.e. the TrainSchedule concept filled in, we need to give our user an answer, both via speech and in the UI. Our output is defined in a dialog. Our TrainSchedule dialog looks like this:

    dialog (Result) {
      match: TrainSchedule (schedule) {
        from-output: SearchForTrains (trainSchedule)
      }
      template("BART Schedule:")
      {speech("${value(schedule.speech)}") }
    }

This dialog first matches the TrainSchedule concept output from SearchForTrains, i.e. when SearchForTrains is called and returns data, this dialog will be used. Note that in the dialog we populate a local variable, schedule, with the TrainSchedule data.

The template tag defines the visual output at the top of the UI, the header if you will, in our case the simple “BART Schedule:” The speech tag defines what is spoken. On a voice-only device like the upcoming Samsung Galaxy Home, this would be the only output. Bixby will always speak (TTS) the text contained in the speech attribute of a dialog.

A capsule will run with just a Dialog, but the UI will be very “programmer like.” We need to add markup to format the TrainSchedule data.

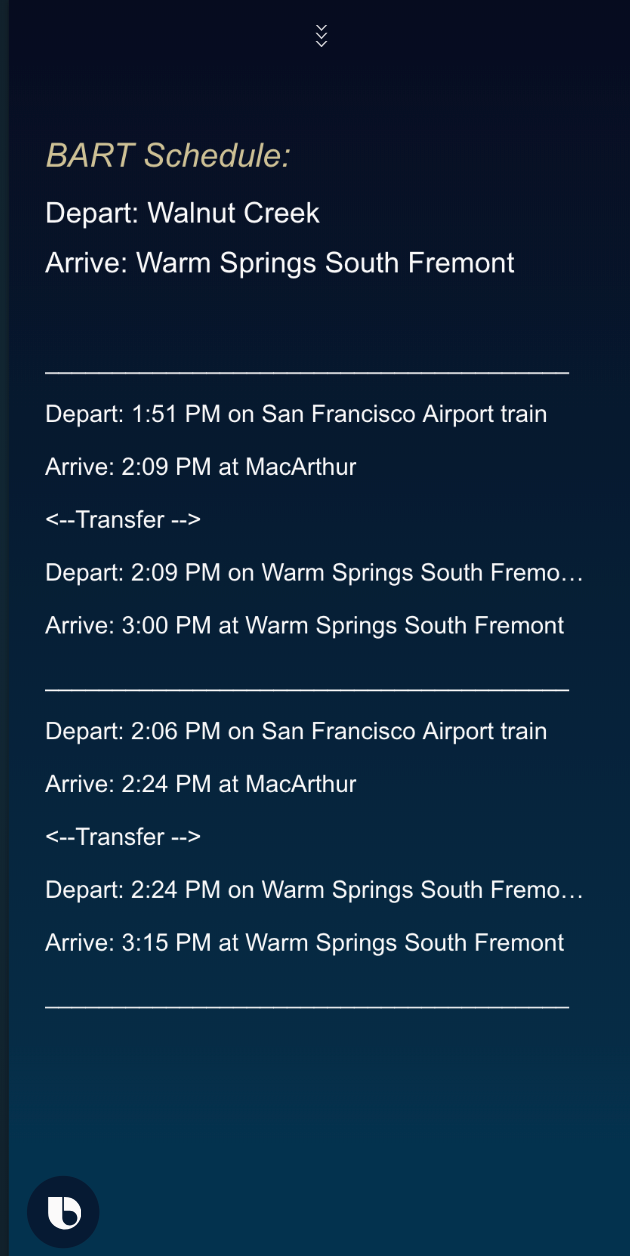

To format the TrainSchedule data we create a view. There are several different ways to do so. We will choose one of the very simplest by using a layout view.

Our UI will be very simple and definitely not award-winning. We will show the departure and arrival stations and then details about the individual trips.

The full code for the layout is available on GitHub. An excerpt is below:

    for-each (ts.trip) {
      as (trip) {
        for-each (trip.tripSteps) {
          as (steps) {
            single-line {
              text {
                value {
                  template ("#{value(steps)}")
                }
                style (Detail_L)
              }
            }
          }
          . . .

The markup iterates through the Trips and displays the relevant steps data. The full UI looks like this. The input is: when is the next BART from Walnut Creek to Warm Springs

It is worth noting that our inputs, SearchDepartureStation and SearchArrivalStation may also have views created for them. This would let a user use a device touchscreen instead of speak/type the input. For the sake of simplicity with BCC, there are no views created for these. Input is via voice/typing only.
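If the nested for-each in the layout excerpt above looks unfamiliar, it behaves roughly like the two nested loops below. This is only an analogy in JavaScript: `renderLines` is a hypothetical helper, and in the real capsule the layout markup produces styled UI rows rather than an array of strings.

```javascript
// Imperative analogy for the layout excerpt: one output line per trip step.
function renderLines(ts) {
  const lines = [];
  for (const trip of ts.trip) {            // for-each (ts.trip) as (trip)
    for (const steps of trip.tripSteps) {  // for-each (trip.tripSteps) as (steps)
      lines.push(steps);                   // single-line text with value(steps)
    }
  }
  return lines;
}

// Placeholder step texts, just to show the flattening order.
console.log(
  renderLines({
    trip: [{ tripSteps: ['step A', 'step B'] }, { tripSteps: ['step C'] }],
  })
);
```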

N.B. Both Alexa (via cards, the display templates, and the newer APL) and Google (Rich Responses) support UI output. All three are different enough to make equivalencies difficult. There is a passing resemblance between DialogFlow’s and Bixby’s cards and carousels.

Vocabulary and Training for Samsung Bixby

When a user asks about a train schedule, they may not use the full proper names of stations. What we need is a list of synonyms and equivalent phrases. To do so, Bixby supports the concept of vocabulary. A snippet of our Station (Station.vocab) vocabulary is below:

    vocab (Station) {
      "12th St. Oakland City Center" {"12th St. Oakland City Center" "12th Street" "Oakland City Center" "12th"}
      "16th St. Mission" {"16th St. Mission" "16th Street" "16th"}
      "19th St. Oakland" {"19th St. Oakland" "19th Street" "19th"}
      "24th St. Mission" {"24th St. Mission" "24th Street" "24th"}
      "Antioch" {"Antioch"}
      "Ashby" {"Ashby"}
      "Balboa Park" {"Balboa Park"}
      . . .

Looking at the “12th St. Oakland City Center” line, we have defined “12th Street”, “Oakland City Center” and “12th” as synonyms. A user can say any of these and Bixby will resolve the input to the canonical “12th St. Oakland City Center”.

N.B. The equivalent of vocabulary in Alexa is slot synonyms and for Google entity synonyms.
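The effect of a vocabulary file can be pictured as a lookup from any synonym to its canonical symbol. The sketch below is a toy illustration of that idea only; `buildLookup` and `resolveStation` are hypothetical helpers, the case-insensitive matching is my own assumption, and Bixby’s actual NLU is far more capable than an exact-match table.

```javascript
// A few entries copied from the Station vocabulary above.
const stationVocab = {
  '12th St. Oakland City Center': [
    '12th St. Oakland City Center',
    '12th Street',
    'Oakland City Center',
    '12th',
  ],
  '16th St. Mission': ['16th St. Mission', '16th Street', '16th'],
  Antioch: ['Antioch'],
};

// Invert the vocabulary: every synonym points at its canonical station name.
function buildLookup(vocab) {
  const lookup = new Map();
  for (const [canonical, synonyms] of Object.entries(vocab)) {
    for (const synonym of synonyms) {
      lookup.set(synonym.toLowerCase(), canonical);
    }
  }
  return lookup;
}

// Returns the canonical station name, or null when the phrase is unknown.
function resolveStation(lookup, spoken) {
  return lookup.get(spoken.trim().toLowerCase()) || null;
}

const stationLookup = buildLookup(stationVocab);
console.log(resolveStation(stationLookup, '12th Street'));
```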

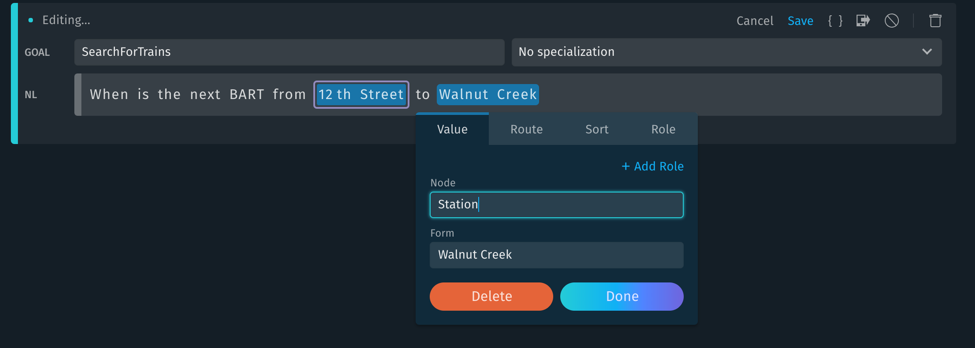

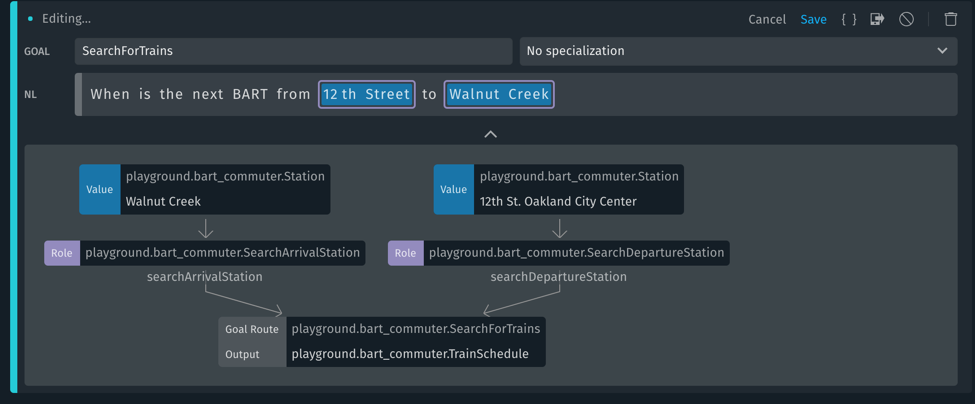

The last thing we need to do is define the actual Natural Language input. Bixby calls this training. Training has a UI (there is a markup text format available). A training entry sample is below:

In this example, we have already defined “12th Street” as the SearchDepartureStation part of the input. We are now defining “Walnut Creek” as the SearchArrivalStation. But wait, the screen says the node is “Station”, not SearchArrivalStation. Remember how we defined role-of when we created the search stations? The add role button allows one to enter the role, in this case SearchArrivalStation. Our completed training looks like this:

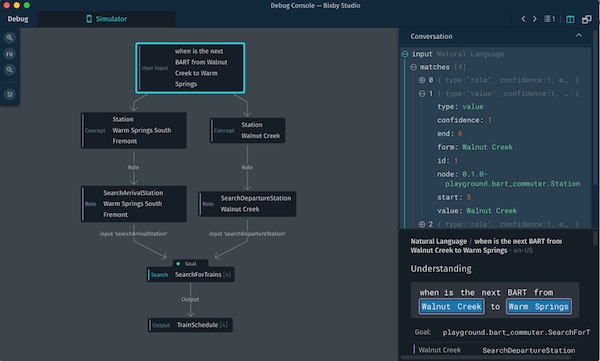

This is our first view into some of the Bixby “magic” – the flowchart below the natural language input shows how this sentence would be processed. Two station names would be extracted, one converted to a SearchDepartureStation and the other to a SearchArrivalStation – these would then be input to SearchForTrains which outputs a TrainSchedule.

What we are seeing is essentially a plan for the NLU and program flow. This is the vaunted Bixby auto program generation. The closest technical analogy I can think of is a relational database explain plan.
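To make the idea of a generated plan a little more concrete, here is a toy planner that works backwards from a goal concept to the actions needed to produce it. It is emphatically not how Bixby’s planner is implemented; `actionCatalog` and `plan` are hypothetical, and the sketch only conveys how program flow can be derived from typed inputs and outputs instead of being hand-written.

```javascript
// Hypothetical catalog: each action declares the concepts it needs and produces,
// mirroring the collect/output sections of our SearchForTrains action model.
const actionCatalog = [
  {
    name: 'SearchForTrains',
    inputs: ['SearchDepartureStation', 'SearchArrivalStation'],
    output: 'TrainSchedule',
  },
];

// Backward-chain from the goal concept to the concepts already extracted
// from the utterance; returns the action names in execution order.
function plan(goal, available, catalog, steps = []) {
  if (available.includes(goal)) {
    return steps; // Already supplied by the user, nothing to run.
  }
  const action = catalog.find((candidate) => candidate.output === goal);
  if (!action) {
    throw new Error(`No action produces ${goal}`);
  }
  for (const input of action.inputs) {
    plan(input, available, catalog, steps); // Satisfy each input first.
  }
  steps.push(action.name);
  return steps;
}

console.log(
  plan('TrainSchedule', ['SearchDepartureStation', 'SearchArrivalStation'], actionCatalog)
);
```

With only one action the result is trivial, but the same walk over declared inputs and outputs is what lets a model-driven platform chain several actions together without the developer spelling out the order.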

For our simple capsule, there are a few trainings for a single phrase. In a released capsule, one would enter several trainings for different phrases.

N.B. The equivalent in Alexa is sample utterances and for Google, it’s training phrases. Note the Bixby IDE may, initially at least, have a passing resemblance to DialogFlow when creating trainings.

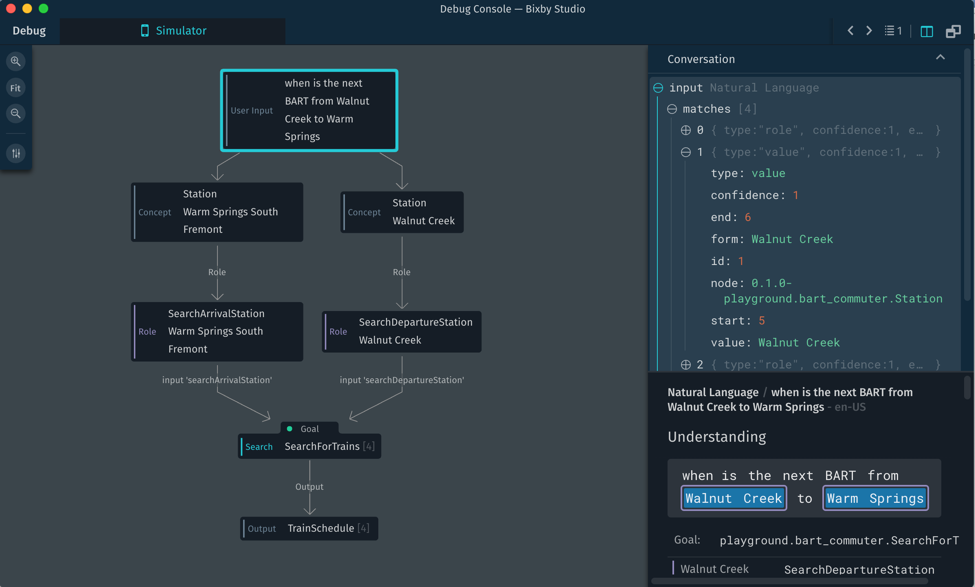

Let’s put this all together and ask the question “when is the next BART from Walnut Creek to Warm Springs?” We have already seen UI output above, let’s view the debug output:

As with natural language training, we get a flowchart of the program flow, in this case the full execution. There is a lot of information available on this screen, and each box is clickable for information on a particular step. Here we have clicked on the first box and we can see the NLU process and data.

This type of detailed debugging information about the NLU and your program workings is unique to Bixby. It is one of its market differentiators.

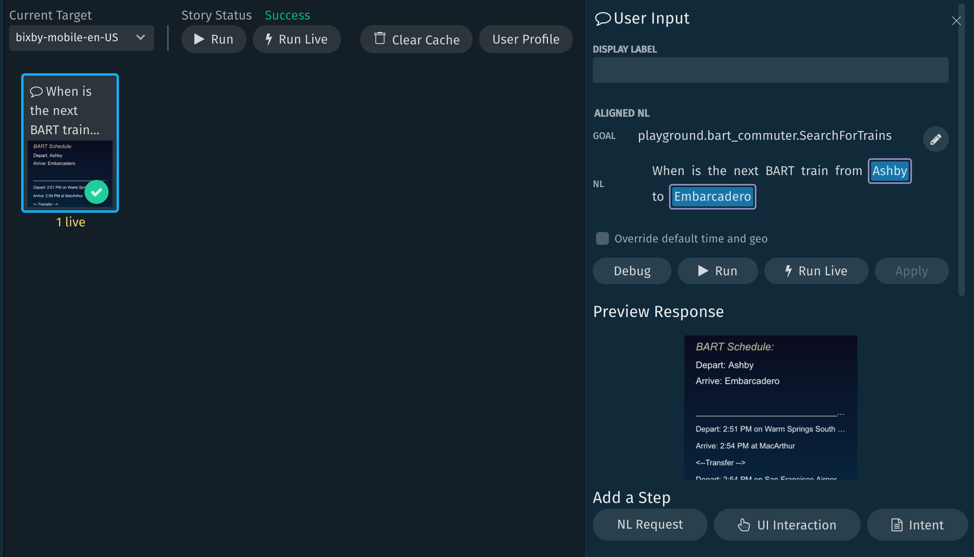

Stories and QA for Samsung Bixby

Let’s conclude with one more optional but very useful feature. In Bixby, one can create test use cases called stories. Here is a story created for our capsule.

Stories are a powerful feature that lets you create use cases, set up assertions and run the tests. You can then modify configurations and rerun a test, all within the same UI. This is another market differentiator for Bixby.

Invocation and Additional Features for Samsung Bixby

If you have used an Alexa Skill or Google Action, you are familiar with an invocation name: “Alexa, open [invocation name]” or “Hey Google, speak to [invocation name].” Bixby doesn’t have invocation names! How does it work then? When you create a capsule for Bixby, your training defines your invocation phrases. So, for the BCC capsule, one would just say “Hey Bixby, when is the next BART from Embarcadero to Walnut Creek?” Bixby would then invoke our capsule to get the answer. So, when you build a capsule for Bixby, you are essentially extending its native capabilities. There is no need to remember an invocation name! Both Amazon and Google currently rely on invocation names but are moving in the Bixby direction: Amazon via the CanFulfillIntentRequest and Google via implicit invocation.

An open issue for Bixby (as well as Amazon and Google to a lesser extent) is how to handle collisions – if two capsules have the same training phrases, which answers a user’s question? Likely a combination of machine learning, as well as some kind of preference choice (Android users – think of the popup that lets you decide which app to use for a particular function), will be involved. The secret sauce has yet to be revealed. Not needing invocation names is a big advance in voice assistants. It’s going to be tough to get this right but the first company to do so will have an advantage. Certainly, Samsung is well positioned launching without invocation names.

The BCC capsule is very simple. Before releasing functionality like it, several more training phrases and error hardening would need to be added. One interesting capability to add would be Personalization/Learning. Bixby has the ability to contextually learn the answers you provide and skip asking them in the future. Our BCC capsule is an excellent candidate for this. After using it for several days to commute back and forth from work, one could simply ask “When is the next BART train” – The learning would understand that in the morning, I go from Walnut Creek to Embarcadero and give an appropriate answer. In the evening if I asked the same question, it would understand I want my commute home, so it would give me the schedule from Embarcadero to Walnut Creek.

This learning capability is a good example of the large amount of Bixby functionality we haven’t explored. The declarative BXB language is powerful and there are significant capabilities that are not used in our sample capsule.

Conclusion

To explore these further capabilities, read much more about Bixby, download the IDE and build your own capsule, go to https://bixbydevelopers.com/

The code for this example capsule can be downloaded from: https://github.com/rogerkibbe/bixby_bart_commuter

I hope you have enjoyed this introduction to Bixby. I was the winner of Best of Show Bixby Capsule at the Samsung Developer’s Conference, Bixby Showcase and have developed several capsules since. I see a bright future for this platform and encourage everyone to try it out. Samsung will be adding Bixby to the 500 million devices it ships yearly over the next few years. There is going to be a lot of Bixby out there!

Please feel free to reach out to me on twitter – @rogerkibbe or at https://www.voicecraft.ai/ for questions, comments, and thoughts!
