Google Unveils Gemini 1.5 Pro LLM With Staggering 1M-Token Context Window

Google has introduced Gemini 1.5 Pro, the latest addition to its Gemini family of generative AI models. Gemini 1.5 Pro succeeds the Gemini 1.0 Pro large language model and improves on its predecessor in several ways. The most notable upgrade is the context window, which is 35 times larger than that of Gemini 1.0 Pro and can hold a million tokens’ worth of data under the right circumstances. A context window is the amount of data a model can keep in view when generating a response before ‘forgetting’ some of it. Gemini 1.5 Pro’s context window translates to around 700,000 words or 30,000 lines of code. The multimodal LLM can also process up to 11 hours of audio or an hour of video.
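
To make the idea of a context window concrete, here is a minimal Python sketch. It is an illustration only and not how Gemini works internally: the function names are hypothetical, whitespace-separated words stand in for real subword tokens, and dropping the oldest tokens first is an assumed policy. The only figures taken from the article are the 1 million tokens and roughly 700,000 words.

```python
# Hypothetical illustration: real models use subword tokenizers and do not
# expose their context handling like this.

CONTEXT_TOKENS = 1_000_000  # Gemini 1.5 Pro window size quoted in the article
APPROX_WORDS = 700_000      # approximate word equivalent quoted in the article


def words_per_token(words: int = APPROX_WORDS, tokens: int = CONTEXT_TOKENS) -> float:
    """Average number of words per token implied by the article's figures."""
    return words / tokens


def fit_to_window(tokens: list[str], window: int) -> list[str]:
    """Keep only the most recent `window` tokens; older ones are 'forgotten'.

    Dropping the oldest tokens first is an assumption made for this sketch.
    """
    if window <= 0:
        return []
    return tokens[-window:]


if __name__ == "__main__":
    history = "the quick brown fox jumps over the lazy dog".split()
    print(fit_to_window(history, 4))  # ['over', 'the', 'lazy', 'dog']
    print(words_per_token())          # 0.7
```

With a four-token window, everything before "over" falls out of view, which is the ‘forgetting’ described above on a tiny scale.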

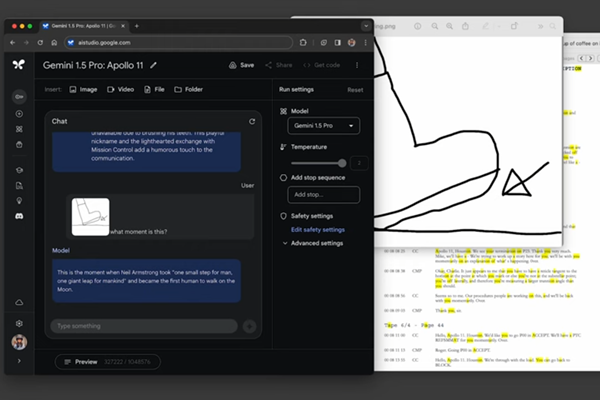

Gemini 1.5 Experimental

The Gemini 1.5 Pro demos are impressive, with the model handling complex search tasks across long texts and videos, as Google’s demo video shows. The enormous context window does have a major drawback, however: a response takes from 20 seconds to a minute to process, a significant latency issue that Google said it is working to improve. The full-scale version of Gemini 1.5 Pro is also still experimental and available only in a private preview through Google’s AI Studio or to a limited group of enterprise customers on Google’s Vertex AI platform.

There is a more widely available version with a 128,000-token context window, or about 100,000 words, which is still on par with high-end models like OpenAI’s GPT-4-Turbo. While the experimental version of Gemini 1.5 Pro is free during the private preview phase, Google plans to introduce pricing tiers for access to the model’s capabilities.

“Our teams continue pushing the frontiers of our latest models with safety at the core. They are making rapid progress. In fact, we’re ready to introduce the next generation: Gemini 1.5. It shows dramatic improvements across a number of dimensions and 1.5 Pro achieves comparable quality to 1.0 Ultra, while using less compute,” Google CEO Sundar Pichai wrote in a blog post. “This new generation also delivers a breakthrough in long-context understanding. We’ve been able to significantly increase the amount of information our models can process — running up to 1 million tokens consistently, achieving the longest context window of any large-scale foundation model yet. Longer context windows show us the promise of what is possible. They will enable entirely new capabilities and help developers build much more useful models and applications.”

Along with the larger context window, Gemini 1.5 Pro delivers quality comparable to Google’s top Gemini 1.0 Ultra model while requiring less computing power, as Pichai noted. This is thanks to a new architecture built from smaller expert models that specialize in different tasks. The model is also faster at learning new concepts that a user supplies in a prompt, without any additional training.
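
The expert-based design described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not Google’s implementation: the `route` function, the three example experts and the fixed gate scores are all hypothetical, and in a real model the gate scores would come from a learned router rather than being passed in by hand. The point it illustrates is that only the selected experts run for a given input, which is where the compute savings come from.

```python
import math


def softmax(scores):
    """Turn raw gate scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(gate_scores, experts, x, top_k=1):
    """Run only the top_k highest-weighted experts on x and blend their outputs.

    gate_scores are supplied directly here (an assumption for the sketch);
    a trained router would normally compute them from the input.
    """
    weights = softmax(gate_scores)
    ranked = sorted(range(len(experts)), key=lambda i: weights[i], reverse=True)
    chosen = ranked[:top_k]
    norm = sum(weights[i] for i in chosen)
    output = sum(weights[i] / norm * experts[i](x) for i in chosen)
    return output, chosen


if __name__ == "__main__":
    # Three toy "experts"; each is just a small function in this sketch.
    experts = [lambda v: 2 * v, lambda v: v + 10, lambda v: -v]
    output, chosen = route([0.1, 2.0, -1.0], experts, 3.0, top_k=1)
    print(chosen, output)  # [1] 13.0
```

Raising `top_k` blends more experts at the cost of running more of them per input.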

“When tested on a comprehensive panel of text, code, image, audio and video evaluations, 1.5 Pro outperforms 1.0 Pro on 87% of the benchmarks used for developing our large language models (LLMs). And when compared to 1.0 Ultra on the same benchmarks, it performs at a broadly similar level,” Google DeepMind CEO Demis Hassabis wrote in the post. “Gemini 1.5 Pro also shows impressive ‘in-context learning’ skills, meaning that it can learn a new skill from information given in a long prompt, without needing additional fine-tuning.”
