Anthropic Releases Claude 2.1 Generative AI Chatbot With 200K Context Window and Reduced Errors

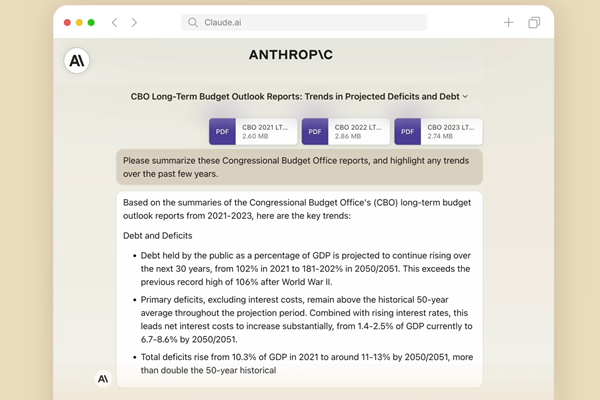

Generative AI startup Anthropic has upgraded its conversational generative AI to Claude 2.1. The new iteration significantly expands Claude’s comprehension abilities and reduces hallucinations, according to Anthropic. Claude 2.1 doubles the 100,000-token context window of Claude 2.0 to 200,000 tokens, allowing it to absorb long technical manuals, financial filings, or multiple books in a single upload while enabling more nuanced summarization, question answering, trend analysis, and document comparison.

Claude 2.1

A generative AI chatbot’s context window is like short-term memory. It defines how much content from a prompt the model can use when answering questions. Tokens are the pieces that large language models break text into, with a commonly cited average of about three-quarters of a word per token. By that measure, 200,000 tokens is about 150,000 words, or more than 500 pages of material. A human reader can get through a text of that length in approximately 10 hours, with additional thinking and analysis time needed to explain and discuss the text with others. A larger context window lets Claude keep more of the source material in view, which should make it less likely to derail the conversation with a made-up answer. Anthropic claims that Claude 2.1 produces only half the hallucinations of Claude 2.0. That’s a significant attraction, especially for enterprise services that need minimal or no errors in their customer service chatbots. Claude 2.1 also exhibits a 30% decrease in inaccurate answers and is up to four times less likely to wrongly conclude that a lengthy document supports a claim.
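The arithmetic behind those figures can be sketched in a few lines of Python. This is a back-of-the-envelope estimate, not anything Anthropic has published: the words-per-token ratio, the 300-words-per-page figure, and the 250-words-per-minute reading speed are all rule-of-thumb assumptions, and the function name is made up for illustration.

```python
# Rule-of-thumb ratios; all three are assumptions, not Anthropic's figures.
WORDS_PER_TOKEN = 0.75   # roughly three-quarters of a word per token
WORDS_PER_PAGE = 300     # assumed density of a printed page
READING_WPM = 250        # assumed silent reading speed, words per minute


def context_window_estimate(tokens: int) -> dict:
    """Convert a token count into approximate words, pages, and reading hours."""
    words = tokens * WORDS_PER_TOKEN
    return {
        "words": round(words),
        "pages": round(words / WORDS_PER_PAGE),
        "reading_hours": round(words / READING_WPM / 60, 1),
    }


estimate = context_window_estimate(200_000)
print(estimate)  # {'words': 150000, 'pages': 500, 'reading_hours': 10.0}
```

Real token counts depend on the tokenizer and the text, so code, tables, and non-English prose can diverge from these ratios considerably.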

“Since our launch earlier this year, Claude has been used by millions of people for a wide range of applications—from translating academic papers to drafting business plans and analyzing complex contracts. In discussions with our users, they’ve asked for larger context windows and more accurate outputs when working with long documents,” Anthropic explained in a blog post. “Processing a 200K length message is a complex feat and an industry first. While we’re excited to get this powerful new capability into the hands of our users, tasks that would typically require hours of human effort to complete may take Claude a few minutes. We expect the latency to decrease substantially as the technology progresses.”

Anthropic has also introduced a new “tool use” feature in beta, which lets Claude handle workflows via external APIs and databases. For instance, the model might turn to a calculator for numerical reasoning or to a translator for converting text between languages. It might also query product datasets to recommend items to users making purchases. Anthropic says the upgrades address user feedback asking for more dependable, precise outputs. Claude 2.1 is already available in the company’s developer console and powers its public Claude chatbot interface. There’s also a new system prompt feature, which lets users give Claude custom instructions, such as background context for its responses or a role to adopt in its answers.
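The general idea behind tool use can be illustrated with a small, self-contained Python sketch. It does not use Anthropic’s API or reproduce its request format. The `TOOLS` registry, the shape of the `request` dictionary, and the `run_tool_request` helper are all hypothetical, chosen only to show how an application might run a tool the model asks for and hand back the result.

```python
import operator
from typing import Any, Callable, Dict

# Hypothetical calculator tool: supports four basic operations on two numbers.
OPS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
}


def calculator(op: str, a: float, b: float) -> float:
    return OPS[op](a, b)


# Registry mapping tool names to the functions the application exposes.
TOOLS: Dict[str, Callable[..., Any]] = {"calculator": calculator}


def run_tool_request(request: dict) -> dict:
    """Execute a structured tool request and return a result the model can read."""
    name = request["tool"]
    if name not in TOOLS:
        return {"tool": name, "error": "unknown tool"}
    result = TOOLS[name](**request["arguments"])
    return {"tool": name, "result": result}


# Stand-in for a request the model might emit (assumed format).
request = {"tool": "calculator", "arguments": {"op": "multiply", "a": 1234, "b": 5678}}
response = run_tool_request(request)
print(response)  # {'tool': 'calculator', 'result': 7006652}
```

In a real integration, the application would pass the result back to the model so it can finish its answer. The point of the pattern is that exact work such as arithmetic is done by ordinary code rather than by the language model.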

Anthropic has had a banner year. The startup raised $100 million from SK Telecom in August, which came after a $450 million round in May. In between, it released the now-superseded Claude 2.0 as well as the smaller and faster Claude Instant 1.2. The startup also began taking in revenue through a paid version of Claude called Claude Pro. The $20 per month chatbot offers five times the usage and faster access compared to Claude’s free service. Claude 2.1 should only strengthen Anthropic’s place in the market, and the recent chaos at OpenAI may make it an even more appealing alternative to ChatGPT for enterprise clients.
