OpenAI (Partially) Lifts Ban on Military Generative AI Projects

OpenAI has ended its blanket prohibition on military use of its generative AI technology in favor of a more nuanced set of rules. The company replaced a policy outlawing “military and warfare” use of its large language models (LLMs) with a narrower prohibition on harming people or developing weapons. The shift opens the door for defense-related projects undertaken by the U.S. military that don’t brush up against the new limits.

Weapon LLM

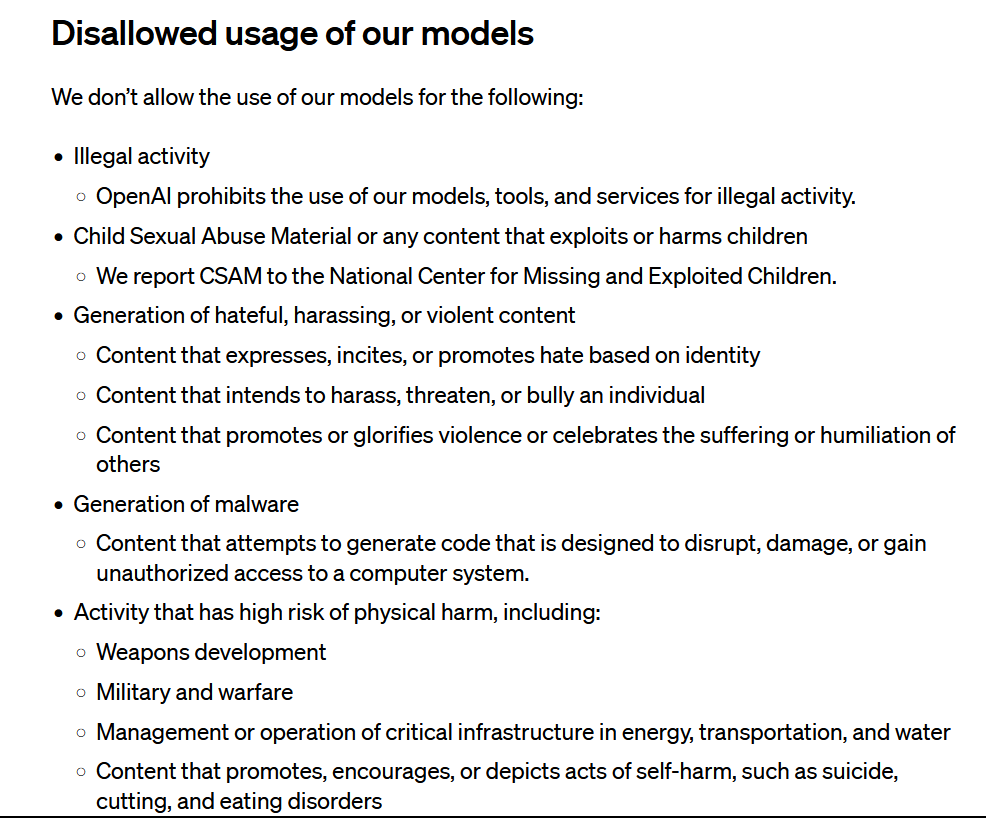

As seen in the image above, the original policy disallowing anything with a “high risk of physical harm” included “weapons development” and “military and warfare.” That language has all been wiped away. The revised policy reads: “Don’t use our service to harm yourself or others – for example, don’t use our services to promote suicide or self-harm, develop or use weapons, injure others or destroy property, or engage in unauthorized activities that violate the security of any service or system.”

Generative AI has plenty to offer the defense industry, even without directly contributing to weapons or war tactics. LLMs can serve as a cybersecurity aid and analyze the enormous troves of data collected by military agencies. They can also help compose and cross-reference reports and even write manuals for new equipment and training guides for the sundry roles of a modern military.

The U.S. Department of Defense set up a generative AI task force in August 2023, and the U.S. Army has been experimenting with writing press releases with ChatGPT for almost a year. Still, OpenAI is hardly alone among generative AI developers with potential military contributions. German AI technology developer Helsing recently raised €209 million ($223 million) to expand its AI offerings for military applications across Europe.

“Our policy does not allow our tools to be used to harm people, develop weapons, for communications surveillance, or to injure others or destroy property. There are, however, national security use cases that align with our mission,” OpenAI explained in a statement. “For example, we are already working with DARPA to spur the creation of new cybersecurity tools to secure open source software that critical infrastructure and industry depend on. It was not clear whether these beneficial use cases would have been allowed under ‘military’ in our previous policies. So the goal with our policy update is to provide clarity and the ability to have these discussions.”

OpenAI wants to ensure its tools aren’t used in war while still being able to accept government funding and contracts for other national defense needs. Even with that carve-out, there are questions about how these non-combat projects could contribute to future battles. That’s before even raising ethical questions about surveillance and other thorny debates around modern security agencies.
