New Report – Deepfake and Voice Clone Awareness, Sentiment, Concern, and Demographic Data

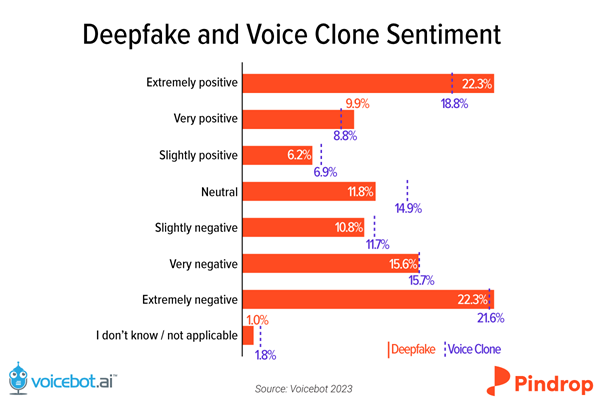

Deepfakes and voice clones are controversial AI technologies. Like any technology, they can deliver positive or negative outcomes. Consumers sense that duality and collectively express a bifurcated view of the solutions. In a survey of 2,000 U.S. adults conducted by Voicebot.ai, Synthedia, and Pindrop, the two most common expressions of sentiment were “extremely positive” and “extremely negative.” The sentiment distribution resembles an inverted bell curve (on its side in our rendition).

Deepfake and Voice Clone Report

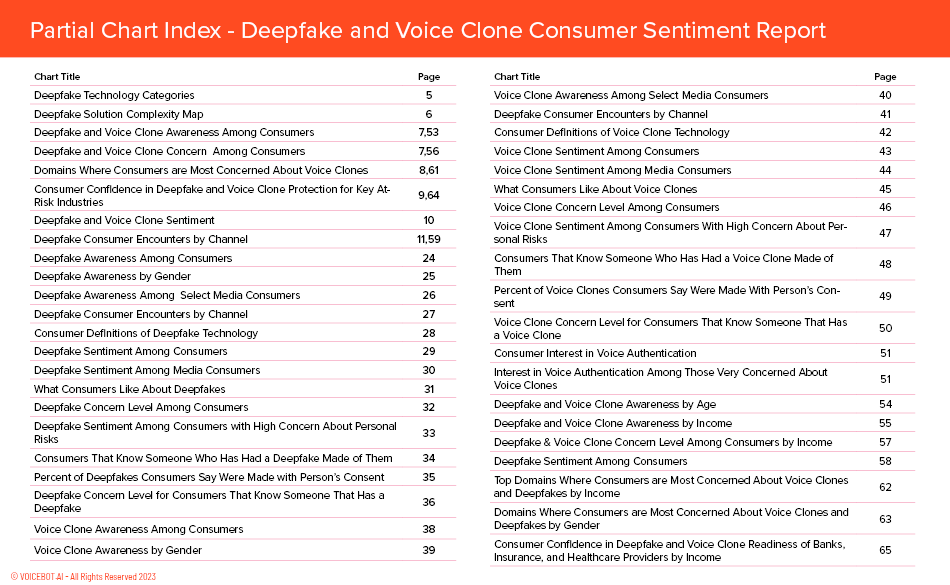

This data comes from the recently released Deepfake and Voice Clone Consumer Sentiment Report 2023. The report includes over 50 charts and diagrams across nearly 80 pages of analysis. Its goal was to uncover how many people have encountered deepfakes and voice clones, what they think about the technologies, and how concerned they are. You can download the full report for free through the button below. Some examples of the data include:

- Deepfake and Voice Clone Consumer Encounters by Channel / Platform

- Deepfake and Voice Clone Awareness and Concerns by Age, Income, and Gender

- What Consumers Like about Deepfakes and Voice Clones

- Industries Where Consumers Are Most Concerned About Misuse of the Technologies

- Industries That Consumers Are Most Confident Have Implemented Protections

- Number of Consumers That Know Someone with a Deepfake or Voice Clone

- And more

Awareness and Concern are High

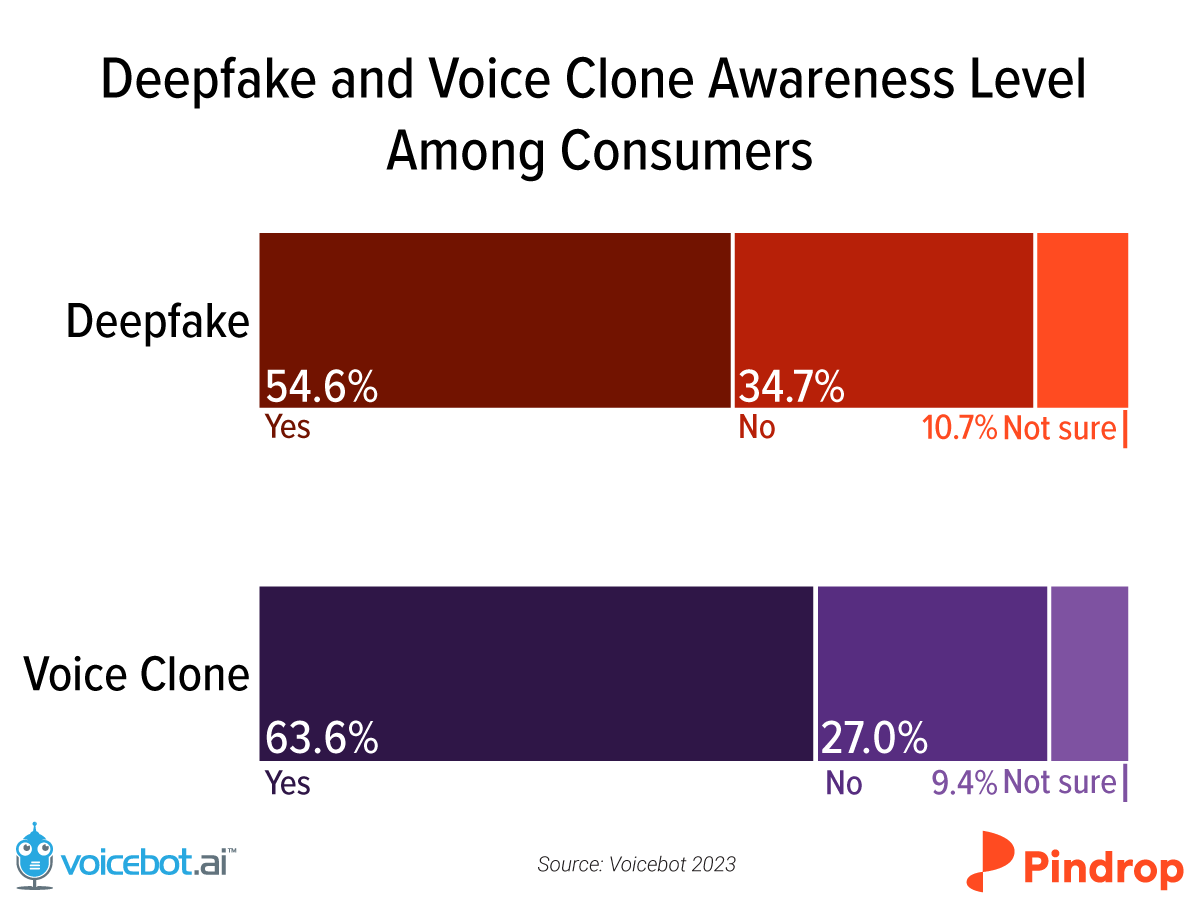

The U.S. White House just issued an executive order relating to AI, and it touches on deepfake technology using the term synthetic media. Government policymakers and regulators intend to take action and don’t see this as an exotic niche of the technology landscape. Consumers already have high awareness, with nearly 55% recognizing the term deepfake and 64% recognizing the term voice clone. This finding suggests that policymakers may be able to make their case for new rules without having to educate everyone about what they are regulating.

It is important to note that there are many deepfake and voice clone use cases that consumers appreciate. They are broken out separately in the full report. However, even though 30-40% of consumers report positive sentiment about voice clones and deepfakes, concern levels run very high. Over 90% of consumers express at least mild concern over the technologies, and around 60% say they are “extremely” or “very” concerned.

The high rate of concern follows a number of high-profile scams, such as recent incidents involving deepfakes of MrBeast, Tom Hanks, and UK consumer advocate Martin Lewis. These are coupled with a rise in “grandmother” scams, in which a voice clone is used to call a grandparent or other relative and request money to get out of jail, tow a car, or address some other need for cash.

At the same time, entertainment properties ranging from recent Star Wars and Indiana Jones installments to America’s Got Talent, Netflix documentaries, and countless YouTube videos have displayed these technologies in a positive light. Exposure to these positive uses of deepfakes increases the likelihood of positive consumer sentiment and, at the same time, heightens the level of concern.

Three things are clear. Deepfakes and voice clones have improved markedly over the past five years. There is already broad consumer exposure to the technologies. Consumers are concerned, even those who express positive sentiments. We hope this report sheds more light on consumer experience and attitudes toward the technologies and is helpful in better understanding the deepfake market.

