Perplexity AI Adds Image Search and Starts Meta Llama 2 AI Chatbot Experiment

Generative AI chatbot startup Perplexity AI announced new features and an updated mobile app and browser extension interface this week, including the capacity to answer questions with images instead of just words. Perplexity also managed to release a new chatbot built on Meta’s brand new Llama 2 AI model for users to experiment with less than 24 hours after Meta introduced the open-source large language model.

Visual Perplexity

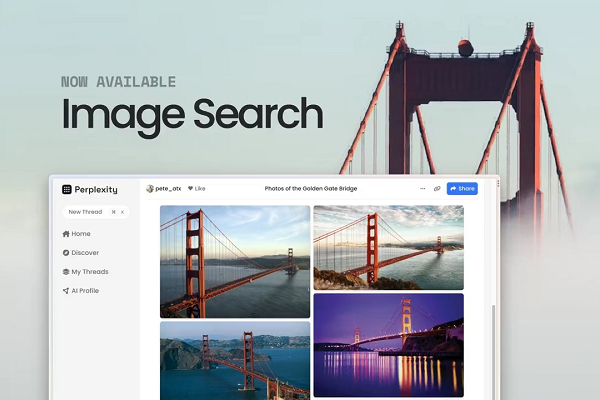

The biggest update to Perplexity’s performance is the new image search feature, which gives Perplexity’s AI the ability to respond to questions about people and places with pictures and videos. The pictures are visible in the search response, while the videos appear as small buttons but play within Perplexity’s window, meaning there’s no need to navigate to YouTube or elsewhere. Image search is already live on Perplexity’s website, and the company said it plans to roll it out to its mobile apps in the near future.

The other notable change in function for Perplexity is the ability to edit the titles of search threads for better organization. The additional flexibility fits with the revamped look of Perplexity’s Chrome extension and iOS app. The mobile app has been upgraded with many of the web portal’s features, including thread syncing, language defaults, and other performance upgrades. Perplexity even updated its website, shortening the URL to ‘pplx.ai.’

Llama Chat

Both the mobile and web versions of Perplexity hint at plans to make the AI more personalized via the still nascent “AI Profile” creation button. Some of Perplexity’s vision for the future has already started to appear in Perplexity Labs, the new experimental digital farm where Perplexity and its users can test out ideas. The first Labs resident is Llama Chat, a chatbot built on Meta’s new Llama 2 model. The license fee-free AI model was introduced this week, but Perplexity managed to obtain access to the LLM and use its own inference engine to produce a generative AI chatbot in less than a day.

“You can now view answers for queries related to people, places and things with images and videos rendered in addition to the existing rich formatted text. Play the videos w/o having to leave Perplexity,” Perplexity CEO Aravind Srinivas explained in a LinkedIn post describing Perplexity’s upgrades. “We’re excited to announce Perplexity Labs, as a playground for future experiments, the first of which is LlaMA chat fully powered by Perplexity’s LLM inference engine.”

