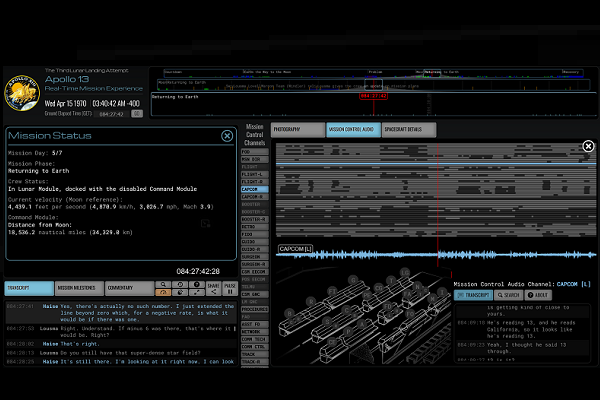

OpenAI’s Whisper ASR is Transcribing Apollo 13’s 7,200 Hours of Mission Control Chatter

OpenAI’s Whisper automatic speech recognition (ASR) system is being used to transcribe the Mission Control audio of Apollo 13 as part of the Apollo in Real Time project. Whisper’s training on noisy environments, accents, and technical terminology makes it well suited to the task of turning 7,200 hours of audio into written text.


Apollo in Real Time offers visitors a chance to experience NASA’s Apollo 11, Apollo 13, and Apollo 17 missions through interactive multimedia presentations, with the recorded audio, video, text, and imagery synced to events as they unfolded. The project turned to OpenAI’s Whisper model to better capture what was said in Mission Control during the harrowing accident and successful recovery of Apollo 13. The Whisper-generated transcript differs from the original 1970 transcript, but rather than manually correcting the daunting volume of text, the project plans to re-process the audio as automated transcription technology improves.

“The Whisper AI processing of the 7,200 hours of Mission Control audio required over 750 hours of computer time. The resulting transcripts contain over 2,929,556 utterances, consisting of 38,321,298 words, totaling 171.6MB of text,” the project leader explained. “As valuable as these results are, they remain imperfect. The Apollo in Real Time team will not be endeavoring to manually correct these generated transcripts. Instead, as automated transcription technology improves over time the audio will be re-processed, replacing these transcripts.”
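To put the quoted figures in perspective, they can be turned into a few simple ratios. This is a minimal sketch: the ratios are plain arithmetic on the project’s numbers, not statistics the project itself published. Because compute time is quoted as “over 750 hours,” the throughput ratio is an upper bound.

```python
# Figures quoted by the Apollo in Real Time project.
AUDIO_HOURS = 7_200
COMPUTE_HOURS = 750  # quoted as "over 750 hours", so this is a lower bound
UTTERANCES = 2_929_556
WORDS = 38_321_298


def derived_ratios() -> dict:
    """Simple ratios computed from the quoted figures (not published by the project)."""
    return {
        # Upper bound, since the true compute time was somewhat more than 750 hours.
        "audio_hours_per_compute_hour": AUDIO_HOURS / COMPUTE_HOURS,
        # Average length of a transcribed utterance.
        "words_per_utterance": WORDS / UTTERANCES,
        # Average transcribed words per hour of audio, silence included.
        "words_per_audio_hour": WORDS / AUDIO_HOURS,
    }


if __name__ == "__main__":
    for name, value in derived_ratios().items():
        print(f"{name}: {value:,.2f}")
```

Run as a script, this prints 9.60 hours of audio per hour of computer time (at most), about 13.08 words per utterance, and about 5,322.40 words per hour of audio.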

Whisper is an open-source ASR model that can transcribe English audio, or transcribe speech in many other languages and translate it into English. Its training targets situations where other models may struggle, such as heavy background noise, strong accents, or specialized technical language. OpenAI trained Whisper on 680,000 hours of “multilingual and multitask” data to make it better at recognizing and parsing speech in those circumstances. On the other hand, OpenAI has acknowledged that because Whisper was not fine-tuned on any specific dataset, it does not beat models that specialize in benchmarks such as LibriSpeech.
