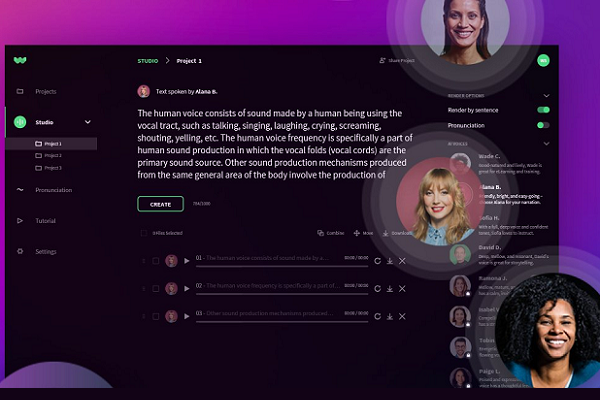

WellSaid Labs Teaches Synthetic Text-to-Speech AI Proper Pronunciation

Mispronounced words and oddly emphasized syllables are common flaws in text-to-speech AI, spoiling the illusion of a human behind the otherwise realistic synthetic speech. TikTok users have even built an entire sub-dialect around the flubbed line readings by the social media app’s AI. Synthetic speech startup WellSaid Labs has introduced a system aimed at overcoming those foibles. It pairs a voice model that uses context to determine how to say a word with a tool for teaching it the proper pronunciation should contextual clues fail.

Read What’s Read in Saskatchewan

Text-to-speech AI usually relies on a phonetic system, essentially sounding out each letter or common letter grouping the same way regardless of the meaning of the whole sentence or paragraph. Otherwise smooth synthetic speech can easily mangle words pronounced differently based on tense (like “read”) or the many English words whose spelling gives little hint of how they are spoken. WellSaid’s new model opts for a more holistic approach, reading words based on context. It’s a lot like how children learn to read, with some elocution lessons from My Fair Lady mixed in.

“[The AI] pronounced more words correctly and puts the emphasis on the right part of the word,” WellSaid senior voice data engineer Rhyan Johnson explained in an interview with Voicebot. “We’ve also normalized pronunciation for non-standard words, acronyms, abbreviations, and numbers.”

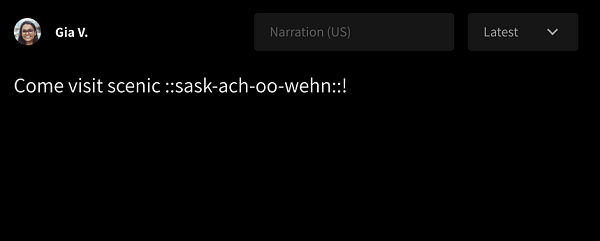

WellSaid’s solution for when the upgraded AI still can’t say a word the way the user wants is to teach it more directly. The respelling tool uses a simplified pronunciation alphabet to guide the AI to pronounce words as desired, including correct vowel sounds and syllabic emphasis. You can see an example to the side of how a user would explain the pronunciation of Saskatchewan to the AI.

“Our first step was reducing the rules of English that create so much confusion. We only have about 44 sounds in our catalog, both consonants and vowels,” Johnson said. “We’ve had many people asking about how they can spell words out phonetically for the AI, especially if your company name is a made-up word. Respelling empowers them with a consistent and intuitive phonetic system.”
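For readers curious how a respelling override might work in practice, here is a minimal Python sketch. It is an illustration only, not WellSaid’s tool or API. The notation (hyphens between syllables, capitals for the stressed syllable), the sample respelling of Saskatchewan, and the names `RESPELLINGS`, `apply_respellings`, and `stressed_syllable` are all assumptions made for this example.

```python
import re

# Hypothetical respelling table (an assumption for illustration):
# hyphens separate syllables, capitals mark the stressed syllable.
RESPELLINGS = {
    "Saskatchewan": "suh-SKACH-uh-wahn",
}


def apply_respellings(text, respellings):
    """Swap whole-word matches for their respelling, ignoring case."""
    if not respellings:
        return text
    lookup = {word.lower(): spelling for word, spelling in respellings.items()}
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(word) for word in lookup) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(lambda match: lookup[match.group(0).lower()], text)


def stressed_syllable(respelling):
    """Return the index of the first all-capitals syllable, or None."""
    for index, syllable in enumerate(respelling.split("-")):
        if syllable.isupper():
            return index
    return None
```

In this sketch, a script would be passed through `apply_respellings` before being sent to a speech engine, so that the engine sees the user’s phonetic spelling instead of the original word.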

Real Synthetic Appeal

WellSaid’s synthetic speech services had already attracted more than 7,000 clients even before the latest upgrade, the startup claims. Johnson cited clinical diagnostics and education as some of the most common outlets for the technology. The startup’s synthetic speech engine and respelling tool could give a voice to future virtual beings in the digital realms of the metaverse and hold a lot of potential for the burgeoning synthetic media entertainment industry. Virtual being builder Synthesia and visual effects firm Digital Domain have inked deals with WellSaid to explore those possibilities. WellSaid’s previous technical advances have already made producing AI voice models much faster and cheaper, and the company boasts around 50 voice options in an array of English styles. The improvements also streamlined the process for the voice artists, who are paid for providing a synthetic voice option and then again every quarter based on how much their voice is used by WellSaid’s clients.

“We focused on reducing how much training we needed to give the AI,” Johnson said. “When I first joined in 2019, we needed 16 to 20 hours of content from voice talent. We’ve reduced that to two, which is huge, though they have to come back to record more for different kinds of emotional speech. We have a long roadmap. One cool future feature might be just being able to record my voice and telling [the AI] to ‘say it like that.’”
