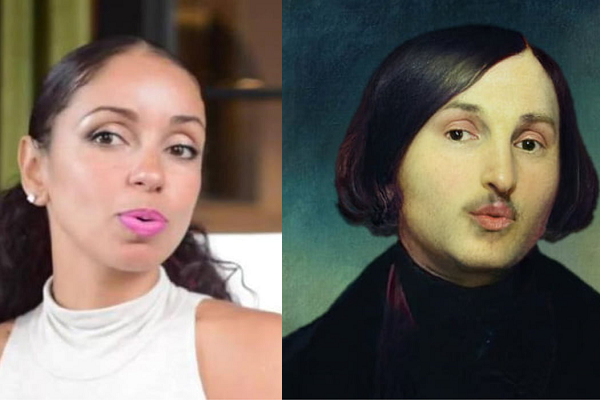

Samsung’s New MegaPortraits Generates Deepfake Videos from Still Images

Samsung Labs has a new tool called MegaPortraits that can animate still photos or paintings of people, producing a realistic moving version driven by another person’s movements, as seen in the top image. The deepfake needs only a single source photo, yet it can imitate facial expressions, head and neck movement, and the other subtle details that make a human face come to life.


MegaPixel Deepfake

Samsung’s model blends the source image with the motion of a “driver,” another person whose movements are transferred onto the still image. During training, the model samples two random frames at each step, one serving as the source and the other as the driver. It processes the appearance of the source and the motion of the driver separately before combining them into the output image. The high-resolution avatars are designed to work even when the driver does not resemble the person in the source image, so they don’t require the close match in face shape or skin tone that other deepfake software often needs.

“Our model imposes the motion of the driving frame (i.e., the head pose and the facial expression) onto the appearance of the source frame to produce an output image,” the researchers behind MegaPortraits explain in a paper on the technology. “The main learning signal is obtained from the training episodes where the source and the driver frames come from the same video, and hence our model’s prediction is trained to match the driver frame.”
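The training setup the researchers describe can be sketched as a toy Python loop. This is a hypothetical illustration, not Samsung’s code: frames are reduced to two short feature lists (“appearance” and “motion”), `toy_model` hard-codes the appearance/motion split that the real network has to learn, and the L1 reconstruction loss is an assumption chosen for simplicity. What it shows is why same-video pairs supply a learning signal: because source and driver show the same person, the driver frame itself is the correct answer.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class Frame:
    # Toy stand-in for a video frame (hypothetical representation):
    # "appearance" = who the person is, "motion" = head pose + expression.
    appearance: list[float]
    motion: list[float]


Model = Callable[[Frame, Frame], Frame]


def toy_model(source: Frame, driver: Frame) -> Frame:
    """Hypothetical reenactment model: keep the source's appearance and
    impose the driver's motion. A real network must learn this split;
    here it is hard-coded to illustrate the idea."""
    return Frame(appearance=list(source.appearance), motion=list(driver.motion))


def l1_loss(pred: Frame, target: Frame) -> float:
    # Mean absolute difference over all features (an assumed reconstruction loss).
    pairs = list(zip(pred.appearance + pred.motion, target.appearance + target.motion))
    return sum(abs(p - t) for p, t in pairs) / len(pairs)


def training_episode(video: list[Frame], model: Model, rng: random.Random) -> float:
    # Sample two distinct random frames from the SAME video.
    source, driver = rng.sample(video, 2)
    pred = model(source, driver)
    # Same video => same person, so the driver frame is the ground truth.
    return l1_loss(pred, driver)


if __name__ == "__main__":
    # One "video" of a single person: fixed appearance, changing motion.
    video = [Frame([0.2, 0.8], [float(i), float(-i)]) for i in range(5)]
    print(training_episode(video, toy_model, random.Random(0)))
    print(training_episode(video, lambda s, d: s, random.Random(1)))
```

A model that merely copies the source frame (the `lambda` above) scores a nonzero loss whenever the two sampled frames differ in pose or expression, which is the pressure that pushes a real network toward transferring the driver’s motion.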

The group is also working on generating the deepfakes in real time, removing the need to prepare videos ahead of time. A real-time version of the model supports 100 neural avatars, each tied to a preselected source image, and runs at 130 frames per second. MegaPortraits is not yet a commercial product. The researchers still want to improve how the shoulders move and fix bugs that can cause flickering and other visual glitches.
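For a sense of scale, a back-of-the-envelope calculation (not a figure from the paper) converts the reported 130 frames per second into a per-frame time budget:

$$
t_{\text{frame}} = \frac{1000\ \text{ms}}{130\ \text{frames}} \approx 7.7\ \text{ms per frame}
$$

That is roughly a quarter of the 33 ms available for each frame of standard 30 fps video.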

