OpenAI Debuts New GPT-3 Model to ‘Align’ With Human Intent

OpenAI has introduced InstructGPT, a new default GPT-3 natural language model built to produce conversational AI responses that better match what the user is asking for. The startup designed InstructGPT to return answers ‘in alignment’ with a user’s input, with fewer tangential or ‘toxic’ responses.

InstructGPT

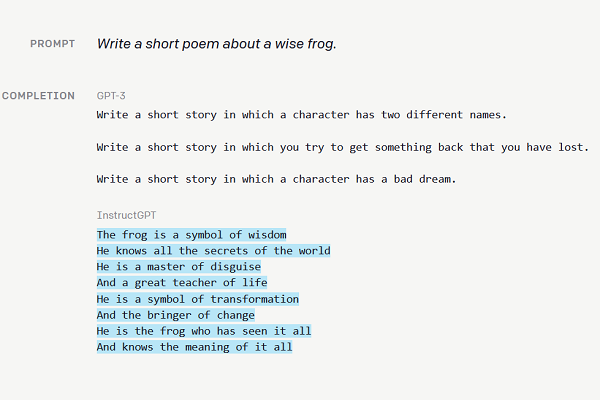

OpenAI laid out in its announcement how its previous GPT-3 model could sometimes respond to innocuous queries with bizarre and nonsensical answers. InstructGPT can recognize when the input is a request to complete a task or answer a question, even when the request is implicit. For instance, asking for a poem about a wise frog would previously get the AI to respond with a writing request of its own. With InstructGPT in place, the AI will actually generate a half-decent poem about that frog and its wisdom. Despite that improvement, InstructGPT can be less expensive to run than GPT-3 because it achieves these results with far fewer parameters. Aligning with what users actually want has the added benefit of cutting down on untrue or toxic responses from the AI.

“To make our models safer, more helpful, and more aligned, we use an existing technique called reinforcement learning from human feedback (RLHF),” OpenAI explained in a blog post. “The resulting InstructGPT models are much better at following instructions than GPT-3. They also make up facts less often, and show small decreases in toxic output generation. Our labelers prefer outputs from our 1.3B InstructGPT model over outputs from a 175B GPT-3 model, despite having more than 100x fewer parameters. At the same time, we show that we don’t have to compromise on GPT-3’s capabilities, as measured by our model’s performance on academic NLP evaluations.”

Custom Conversation

The new research builds on the fine-tuning features for GPT-3 that OpenAI unveiled at the end of last year. These tools let developers customize GPT-3 and improve its accuracy. They can then use the adjusted model through the GPT-3 API right away, now that OpenAI has ended the waitlist for GPT-3 licensing. Ending the waitlist widened access to GPT-3 to anyone willing to submit their email address.

There have been several experiments undertaken since GPT-3 launched. Microsoft secured an exclusive licensing deal and brought the model to GitHub Copilot, followed by the low-code Power Apps programming tool and the Azure OpenAI Service. The language model’s potential value is rising, as seen in creations like Codex, which translates plain language instructions into code.

There’s a negative aspect to the new model, however. Because it is better at following instructions, the AI can be more easily misused when asked for nefarious guidance. As an example, OpenAI shared the detailed plan the AI produced when asked how a user can break into their neighbor’s house.

“Our results show that these techniques are effective at significantly improving the alignment of general-purpose AI systems with human intentions,” OpenAI wrote. “However, this is just the beginning: we will keep pushing these techniques to improve the alignment of our current and future models towards language tools that are safe and helpful to humans.”

