OpenAI Rolls Out GPT-3 Customization Tools

OpenAI has released new fine-tuning features for its GPT-3 natural language model. The new tools enable developers to customize GPT-3, simultaneously upping the accuracy and fine-tuning it to suit their specific needs and language style. Developers can then incorporate the adjusted model into the GPT-3 API right away, now that OpenAI has ended the waitlist for GPT-3 licensing.

Fine-Tuned AI

The new GPT-3 features allow developers to quickly and directly adjust the language model with their own data. A single short command in the OpenAI command line tool starts automated training on the desired content and style. The datasets shape the AI to better fulfill whatever purpose the developers envision for the API, faster and with far fewer resources than building a custom conversational AI from scratch. There doesn’t need to be a lot of data to see results, either. Fewer than 100 examples will produce a noticeable change, and results improve linearly every time the number of examples doubles.
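As a rough illustration of what "their own data" can look like, the sketch below writes a handful of training examples to a JSONL file, one prompt and completion pair per line, which is the shape OpenAI's fine-tuning tooling accepted at the time of the release. The example texts, the `write_jsonl` helper, and the `train.jsonl` file name are hypothetical, and the command in the closing comment is an assumption about the CLI that should be checked against OpenAI's current documentation.

```python
import json
from pathlib import Path

# Hypothetical examples; a real dataset would come from the developer's own data.
EXAMPLES = [
    {"prompt": "Feedback: The app crashes when I log in.\nCategory:", "completion": " bug"},
    {"prompt": "Feedback: Please add a dark mode.\nCategory:", "completion": " feature request"},
    {"prompt": "Feedback: Checkout was quick and easy.\nCategory:", "completion": " praise"},
]


def write_jsonl(examples, path):
    """Write one JSON object per line and return the number of records written."""
    # Validate everything first so a bad record never leaves a partial file behind.
    for example in examples:
        if not example.get("prompt") or not example.get("completion"):
            raise ValueError("each example needs a non-empty prompt and completion")
    with Path(path).open("w", encoding="utf-8") as handle:
        for example in examples:
            handle.write(json.dumps(example, ensure_ascii=False) + "\n")
    return len(examples)


if __name__ == "__main__":
    count = write_jsonl(EXAMPLES, "train.jsonl")
    print(f"wrote {count} examples to train.jsonl")
    # Training would then be started from the OpenAI command line tool.
    # Assumed command shape from the period of this release:
    #   openai api fine_tunes.create -t train.jsonl -m curie
```

Three examples are far too few for real use; the point is only the file layout, which stays the same as the dataset grows toward the hundred or so examples mentioned above.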

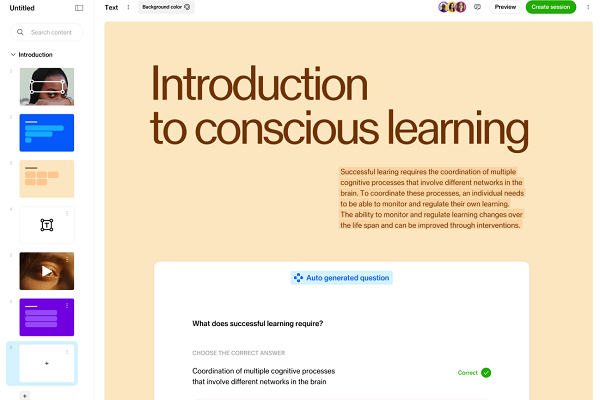

OpenAI cites a company whose customized API led to half as many errors and another that reached a 95% correct response rate, a 12% jump, among the beneficiaries of the new option. The customization is a boon to any industry using GPT-3, including enthusiastic converts like AI research assistant Elicit, customer feedback platform Viable, and business education AI firm Sana Labs, seen at the top of the article. Sana personalizes lessons for businesses, and the upgraded GPT-3 has led to more accurate and specific answers to questions, a 60% improvement, according to OpenAI.

“Customizing makes GPT-3 reliable for a wider variety of use cases and makes running the model cheaper and faster. You can use an existing dataset of virtually any shape and size, or incrementally add data based on user feedback,” OpenAI explained in a blog post. “Last year we trained GPT-3 and made it available in our API. With only a few examples, GPT-3 can perform a wide variety of natural language tasks, a concept called few-shot learning or prompt design. Customizing GPT-3 can yield even better results because you can provide many more examples than what’s possible with prompt design.”
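The "prompt design" OpenAI describes can be pictured as packing a few labeled examples into the text sent with every request. The sketch below builds such a few-shot prompt; the `build_few_shot_prompt` helper, the "Text:" and "Sentiment:" labels, and the sample reviews are all hypothetical and only illustrate the idea, not OpenAI's own method.

```python
def build_few_shot_prompt(examples, query):
    """Join labeled examples and a new query into a single prompt string."""
    blocks = [f"Text: {text}\nSentiment: {label}" for text, label in examples]
    # The final block is left unlabeled so the model completes it.
    blocks.append(f"Text: {query}\nSentiment:")
    return "\n\n".join(blocks)


# Hypothetical examples; every request has to carry all of them.
FEW_SHOT_EXAMPLES = [
    ("The update fixed every problem I had.", "positive"),
    ("Support never answered my ticket.", "negative"),
]

if __name__ == "__main__":
    print(build_few_shot_prompt(FEW_SHOT_EXAMPLES, "Setup took five minutes."))
```

Because the examples travel inside each prompt, their number is capped by the prompt length limit. A customized model learns from its examples during training instead, which is why it can draw on many more of them.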

GPT-3 Central

The end of OpenAI’s waitlist widened access to GPT-3 to anyone willing to submit their email address. Several experiments have been undertaken since GPT-3 launched last year. Microsoft scooped an exclusive licensing deal and saw the model come to GitHub Copilot, followed by the low-code Power Apps programming tool and the Azure OpenAI Service. The language model’s potential value is rising, as seen in creations like Codex, which translates plain language instructions into code. Existing OpenAI developers are on the move, too, picking up plenty of cash along the way: universal autocomplete startup Compose.ai and enterprise writing assistant startup Copy.ai raised $2.1 million and $2.9 million, respectively. The new customizing tools only enhance GPT-3’s place in the world.

“Customizing GPT-3 improves the reliability of output, offering more consistent results that you can count on for production use-cases,” OpenAI wrote. “Whether text generation, summarization, classification, or any other natural language task GPT-3 is capable of performing, customizing GPT-3 will improve performance.”

