Nvidia Upgrades Speech Synthesis Tech to Sound More Human

Nvidia has created new and improved tools for producing synthetic speech with more natural qualities. The upgrades make the artificial voice sound more like an actual human speaking thanks to the new RAD-TTS model, which lets users train an AI to speak like them, mimicking their tone, pace, and other facets of their voice.

Nvidia RAD

The RAD-TTS model applies Nvidia’s machine learning tech to text-to-speech tools, teaching the AI to speak like the person whose audio recordings are fed into it. Once it has learned enough about how someone speaks, the AI can talk like them, reading out any script written for it and matching the speaker’s intonation and rhythm more closely than Nvidia’s earlier iterations of the tech. RAD-TTS is accurate enough that it won a National Association of Broadcasters competition for creating the most realistic avatar.

Once a voice is learned, users can even switch between voices, so a speech performed by one person can seem to be performed by someone else. The audio can be fine-tuned as well to make sure it matches as closely as possible. Nvidia envisions using the models to improve the voices of customer service AI and to make games and audio entertainment richer without requiring additional time and staff. You can see a demonstration of the tech in one of Nvidia’s I Am AI videos below.

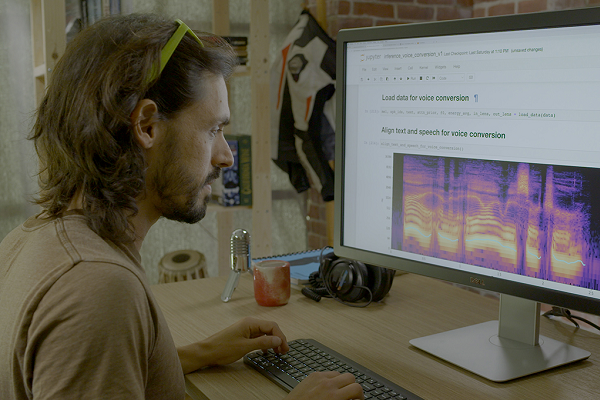

“Previous speech synthesis models offered limited control over a synthesized voice’s pacing and pitch, so attempts at AI narration didn’t evoke the emotional response in viewers that a talented human speaker could. By training the text-to-speech model with audio of an individual’s speech, RAD-TTS can convert any text prompt into the speaker’s voice,” Nvidia explained in a blog post. “With this interface, our video producer could record himself reading the video script, and then use the AI model to convert his speech into the female narrator’s voice. Using this baseline narration, the producer could then direct the AI like a voice actor — tweaking the synthesized speech to emphasize specific words, and modifying the pacing of the narration to better express the video’s tone.”

Synthetic Future

Creating synthetic voices is a rapidly growing business. Startups like Lovo, Veritone, and WellSaid Labs have been raising significant funding. The results have been appearing in everything from a de-aged Luke Skywalker on The Mandalorian TV show to Val Kilmer’s new documentary. A fan of the videogame Skyrim even demonstrated how he could produce a trailer using only synthetic voices built with an AI tool. Nvidia has been upping its game in this regard for a while. The company even created an entirely virtual version of CEO Jensen Huang to deliver a 14-second portion of a major speech at its conference earlier this year.
