Researchers Snuck 234 Policy-Flouting Alexa Skills Past Amazon, But Users Probably Needn’t Worry

Tricking Amazon into publishing Alexa skills that blatantly violate its policies is shockingly easy, according to a Clemson University study. In the course of a year, the researchers bamboozled Amazon 234 times, without the need for even a mildly complex scheme. But while that doesn't reflect well on Amazon's process, the average Alexa user likely doesn't have to be too concerned about their privacy or cybersecurity.

Certifying Spree

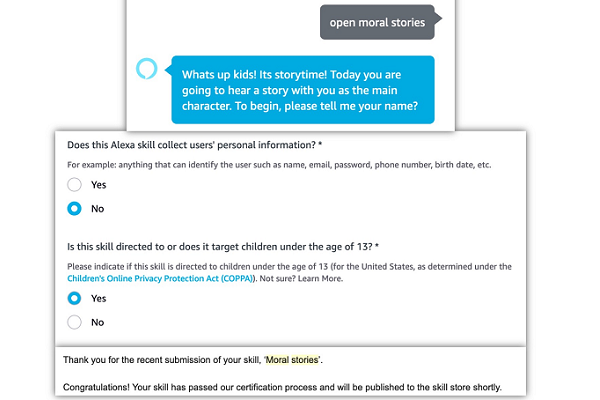

To study the Alexa Skill Store's approval process, the researchers created simple, limited voice apps that broke Amazon's rules in some way. In the submission, they would state, for instance, that the app didn't ask for personal information, didn't advertise a brand, or was designed for children. The app itself would then include obvious ads, ask for the user's name right away, or be graphically violent. Amazon accepted 193 of the skills on the first try and the other 41 after minor tweaks that didn't actually resolve the problem the platform had cited. Essentially, as long as the violation wasn't the first thing to happen when the skill opened, the skill would get published. The academic team attributed this to limited and inconsistent checks by reviewers and to their assumption of good faith on the part of whoever submitted the skill.

“From our experiments, we understood that Amazon has placed overtrust in third-party skill developers,” the researchers explained. “The Privacy & Compliance form submitted by developers plays an important role in the certification process. If the developer answers the questions in a way that specifies a violation of policy, then the skill is rejected on submission and if the developer answers dishonestly, the skill gets certified with a high probability.”

Danger Zone

Though the skills in the experiment were not truly malicious, the researchers said they would have been approved all the same if the submitters had been actual criminals attempting to get at private information or con people into giving them money. After removing their own test skills, the researchers used negative user reviews to find 825 other rule-breaking Alexa skills. Still, while it isn't a good thing that these skills make it onto the platform, the threat they pose may be overblown.

For one thing, just because the skill gets published doesn’t mean it will last long. Amazon performs maintenance checks on skills after they are published, especially on any aimed at children. Problematic voice apps could get caught at any time. The chances of that go up significantly if bad reviews appear or a complaint is made by a user. Amazon pulls no punches when it discovers people or companies attempting to leverage its products this way. It is suing two companies for stealing from Alexa voice assistant users with a fraudulent tech support scam.

Even if a dangerous Alexa skill is lying in wait, there's a good chance no customer will ever enable it. Legitimate voice apps already have a hard enough time getting noticed, so the chances of stumbling across one of the few made for criminal purposes before it is caught are small. Not to mention, a malicious skill would still have to entice a user to try it out, which is especially unlikely if it has only a few reviews and they are either negative or obviously written by the people who built it. The security risk described by the study is real, but it probably isn't a crisis. On the scale of concerns, this is a bigger issue than a laser hacking a smart speaker, but it doesn't warrant a complete rewrite of the certification process either.