Voice Assistants Are Becoming Less Accurate, But Google Assistant is Still the Smartest: Report

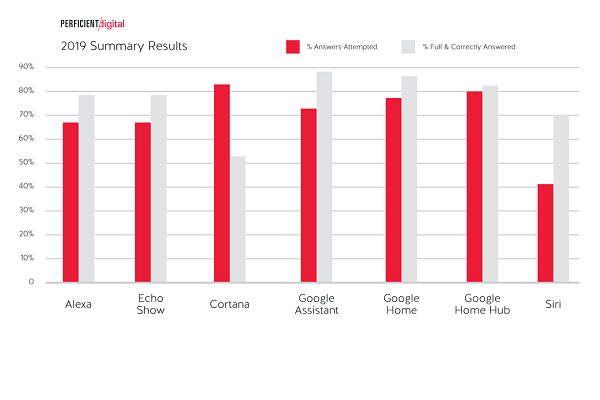

Voice assistants are answering fewer questions correctly than they did a couple of years ago, according to the latest annual report from Perficient Digital on digital assistant intelligence. Despite the overall decline, Google Assistant consistently ranks above its rivals in the percentage of questions answered fully and completely.

Voice Assistant Struggles

Perficient compared the Amazon Alexa, Apple Siri, Google Assistant and Microsoft Cortana voice assistants across different devices. The results saw Google Assistant at its best on a smartphone, with 89% full and complete answers, and slightly lower percentages on Google Home and Home Hub devices.

That’s still better than Alexa, which fell from 90% accuracy in 2017 to 79% this year, or Siri, which dropped from around 85% to 70%. Cortana saw the steepest dive, however. Almost 90% of its answers were complete and accurate in 2017, yet it offered accurate answers only 53% of the time this year.

Perficient tested 4,999 queries on subjects like history, geography, and math to determine which questions the voice assistants would try to answer and how accurate their answers were. The results were then measured against those from previous years, which used the same questions except in the case of Cortana. Microsoft may have adjusted Cortana using the earlier questions as a test set, according to Eric Enge, the General Manager for Digital Marketing at Perficient.

“They didn’t do anything wrong, they simply used it as a test set,” Enge explained to Voicebot. “So in the case of Cortana, we ran a new query set that we generated. To validate that there was nothing unusual about that query set, we re-ran Google on that as well, just to make sure that the behavior was what [we] might expect, but we still used the original query set in our Google results.”

Willing to Make More Guesses

The flip side of the drop in answer accuracy is the rising percentage of questions the voice assistants at least attempted to answer. Rather than apologizing and saying they don’t know or understand the question, most of the voice assistants were much more willing to take a stab at it, even if the result was incomplete or wrong. The only one to see a drop in the percentage of questions attempted was Google Assistant on a smartphone, the most accurate option of them all.

The drop in accuracy has no single obvious cause. Perficient suggested in its report that the technology in its current form is reaching its peak capability. If so, a generational shift in the algorithms would be necessary to start moving the needle on accuracy. The voice assistant developers are certainly working on that kind of change, as well as improving the databases from which they draw answers. Alexa has been turning to its user base to add to its information, encouraging crowdsourced responses to questions through its Alexa Answers program. That could help Alexa catch up, but for now Google Assistant is the one to beat.

