Google Again Leads in Voice Assistant IQ Test but Alexa is Closing the Gap According to Loup Ventures

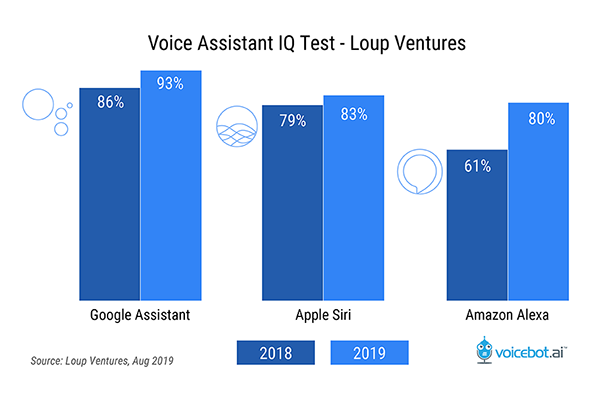

Loup Ventures published its annual Voice Assistant IQ test on Friday and found that Google Assistant once again led Amazon Alexa and Apple Siri. Google Assistant answered 93% of the 800 test questions correctly, compared to 83% for Siri and 80% for Alexa. The test was conducted on smartphones to gauge voice assistant performance on mobile devices. It follows a similar study from December 2018 that focused on smart speaker performance. Previous tests also included Microsoft's Cortana, but it was dropped in 2019 because of the company's shift away from the consumer voice assistant segment.

Each of the leading consumer voice assistants improved over a similar test in July 2018. Google Assistant rose 7 percentage points and Siri rose 4. Alexa, however, was the most improved, with a 19-point rise. Alexa still trails Google Assistant and Siri on Loup's question set, but it is only narrowly behind Siri and closed the gap with Google Assistant by 12 points. Alexa does have a disadvantage in this test format. One test category focuses on controlling mobile device features, and Alexa is the only one of the three voice assistants that is not natively integrated into one of the two leading mobile operating systems (OS).

Google Assistant Wins Four of Five Categories

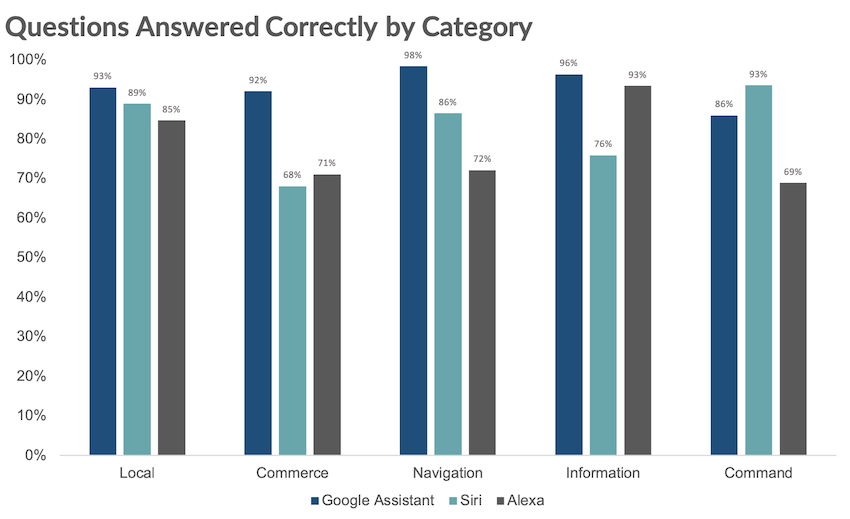

The test protocol divides the 800 questions into five categories: local, commerce, navigation, information, and command. Command is the only category where Google was not the leader and did not exceed 90% correct answers. Apple's Siri scored 93% correct to 86% for Google Assistant in the "command" category, which includes "phone-related functions like calling, texting, emailing, calendar, and music," according to the study authors. Alexa struggled most in this category, achieving only 69% correct, in part because it lacks a native mobile OS. That was up from 54% in 2018, so this is an area where Alexa is improving quickly despite trailing its rivals.

Can Alexa Catch Up?

Google Assistant is approaching a perfect score in navigation, with Siri and Alexa trailing by a large margin. Both Google and Apple also have mapping and navigation solutions built around tight smartphone integration, which is another disadvantage for Alexa. However, Gene Munster and Will Thompson of Loup Ventures note in their study summary that the "commerce" category showed the largest disparity between the assistants, with Google once again rising above its competitors.

“The largest disparity was Google’s outperformance in the Commerce category, correctly answering 92%, vs Siri at 68% and Alexa at 71%. Conventional wisdom suggests Alexa would be best-suited for commerce questions. However, Google Assistant correctly answers more questions about product and service information and where to buy certain items, and Google Express is just as capable as Amazon in terms of actually purchasing items or restocking common goods you’ve bought before.”

The category where Alexa succeeds most often, and the only question type where it exceeds 90% correct, is general "information" queries, where it scores 93%, just three points behind Google Assistant. "Local" questions are Alexa's second-best category at 85%. For both of these categories, Alexa has access to reliable third-party data sources, as we outlined in the Voice Assistant SEO Report for Brands in July. These data sources should continue to improve over time. There is also a clear path for Alexa to close the performance gap further on "commerce" questions, while "navigation" and "command" are likely to remain a challenge without a mobile OS.

You can read more about the Loup Ventures report here. Download the 40-page Voice Assistant SEO Report for Brands here.
