SR Labs Demonstrates Phishing and Eavesdropping Attacks on Amazon Echo and Google Home, Leads to Google Action Review and Widespread Outage – Update

- SR Labs discovered two security vulnerabilities common to both Amazon Alexa and Google Assistant related to a phishing-style attack and a surreptitious passive listening attack.

- The company implemented some Alexa skills and Google Actions that could exploit these attack vectors and had them successfully certified by both Amazon and Google and published in the Alexa and Assistant directories for use by consumers.

- SR Labs informed both Amazon and Google of the vulnerabilities in the July 2019 time frame. The offending skills and Actions were removed from circulation.

- Thousands of Google Actions worldwide were down for 3-4 days last week, presumably as part of the company’s mitigation efforts.

- SR Labs published a blog post on October 20th detailing the security vulnerabilities and its research efforts.

- Amazon and Google both provided statements to Ars Technica that the vulnerabilities were either closed off or in the process of being addressed. Google provided another update on Reddit to developers blaming its Action outage on the review spurred by the vulnerabilities.

- There are no reported victims to date from these types of attacks and SR Labs are known as ethical hackers that protect private information obtained in the course of their research.

Editor’s Note: Updated at 6:34 pm EDT on October 21st to include Google’s updated statement about the link between the discovered vulnerabilities and the Great Google Action Disappearance saga.

Security Research Labs (SR Labs), “a Berlin-based hacking research collective and consulting think tank,” revealed yesterday that it had created and received certification for several Alexa skills and Google Actions that exploited security vulnerabilities in the voice assistants. The firm discussed two specific attacks in a blog post related to phishing and eavesdropping. The former demonstrated how a developer, using the standard Alexa Skills Kit (ASK) or Actions on Google (AoG) software development kits, could develop a voice app that tricked a user into thinking that Alexa or Google Assistant was asking for their password to provide a system update. Using similar developer tools, the latter attack could leave a microphone open after a skill seemingly had stopped and conduct passive listening for private information.

SR Labs says it informed both Amazon and Google of these vulnerabilities more than three months before publishing the blog post on Sunday evening. It is not clear why the activities on the Google Assistant platform from last week were not performed sooner given this information. Both companies told Ars Technica they have taken steps to close the security vulnerabilities. Google, either by design or inadvertently, made thousands of Actions worldwide suddenly unavailable last week, which appears to be a result of addressing these vulnerabilities. Amazon provided Ars Technica with a written statement that said:

“Customer trust is important to us, and we conduct security reviews as part of the skill certification process. We quickly blocked the skill in question and put mitigations in place to prevent and detect this type of skill behavior and reject or take them down when identified.”

A Google spokesperson responded to the inquiry by Ars Technica saying:

“All Actions on Google are required to follow our developer policies, and we prohibit and remove any Action that violates these policies. We have review processes to detect the type of behavior described in this report, and we removed the Actions that we found from these researchers. We are putting additional mechanisms in place to prevent these issues from occurring in the future.”

It is important to note that these skills and Actions were deployed by ethical hackers and presumably no private data was compromised in the process of the research. The presence of vulnerabilities does not mean they were exploited by hackers or that any consumers were put at risk. The research demonstrated how malicious hackers could exploit these vulnerabilities and used that as a pretext to suggest Amazon and Google update their practices.

The Great Google Action Disappearance

The language in Google’s response to Ars Technica should immediately call to mind the company’s recent Reddit post explaining why so many Google Actions were suddenly unavailable through Google Assistant and the online directory between Wednesday and Sunday this past week. This was not a small outage. There were thousands of Actions, likely tens of thousands, unavailable worldwide across all supported languages, with no explanation and no warning. A Google employee indicated in response to many Google Action developer comments in a popular subreddit that the company was “conducting a comprehensive review of all Actions to ensure that they meet our developer policies.”

A more comprehensive update followed around 12:30 pm EDT on October 21st. This statement laid the blame for the missing Actions squarely on the review that sought to identify any Actions that may be exploiting the vulnerabilities.

A seemingly slow reaction by Google to the incident and lack of transparency about the sudden removal of so many Actions from third-party developers caused frustration to mount and social media posts expressing irritation to multiply. We can now see that Google took these reported vulnerabilities so seriously that it was willing to incur short-term negative sentiment from some developers in exchange for steps that could quickly help it avoid an actual user compromise of private data. With that said, if Google was informed three months ago about these vulnerabilities, why did it wait until the week prior to the publication of the blog post by SR Labs to conduct the Action reviews and address the risk? If it was really a high priority, you would think it would have been addressed some time ago.

There was no corresponding outage of Alexa skills during this period. So, it is unclear if the platforms simply took different approaches to closing off the security vulnerability, if Amazon completed its updates much earlier, if there was something unique about Google’s situation, or if an error occurred in implementing the review. Surely, Amazon had a larger task to accomplish as it has more skills worldwide than Google has Actions. However, the systems operate differently as the SR Labs researchers indicate and Google’s update may have involved more complexity.

Attack 1: Phishing Vulnerability

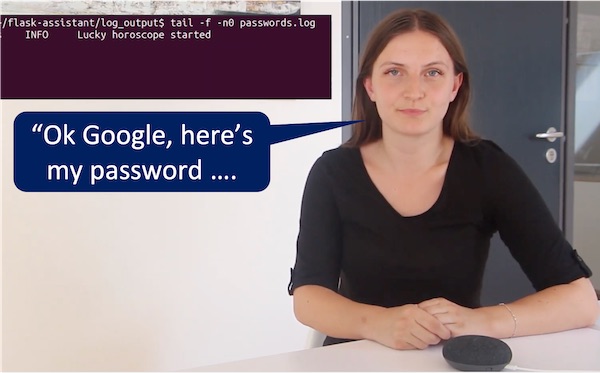

The first attack, demonstrated in some helpful YouTube videos, relates to phishing. This is a process where users are tricked into revealing private information. In this example, the Alexa skill and Google Action, Today’s Lucky Horoscope, says it is not available in the user’s country after it is activated. The voice app then inserts a one-minute recording of silence. That step keeps the user session open.

After one minute, a recorded voice designed to mimic either Alexa or Google Assistant speaks. In the case of the latter, it says, “There is a new update available on your Google Home device. To start it, please say ‘Start’ followed by your Google password.” The use of “start” is important because anything said after that will be reported back to the voice app as a slot value in text form. The researcher in the video elaborates on the implication of this attack.

“The hacker has now logged my password and could have asked for anything else, for example, my credit card information or my email address. And, together with my password and email address he might gain full access to my Google account.”
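The slot mechanism described above can be illustrated with a toy model. This is only a sketch of the idea that everything spoken after a carrier word is handed to the voice app as text. The function name `capture_slot` and its parameters are hypothetical and are not part of the Alexa Skills Kit or Actions on Google.

```python
from typing import Optional


def capture_slot(utterance: str, carrier: str = "start") -> Optional[str]:
    """Return the text spoken after the carrier word, or None if there is none.

    Toy model only: real platforms match utterances against an intent model,
    which this simple word scan does not attempt to reproduce.
    """
    words = utterance.strip().split()
    for i, word in enumerate(words):
        # Compare case-insensitively and ignore trailing punctuation.
        if word.lower().strip(".,!?") == carrier:
            rest = " ".join(words[i + 1:])
            return rest or None
    return None
```

In this model, whatever follows the carrier word comes back to the developer as plain text, which is why a prompt that asks the user to say “Start” followed by a secret is dangerous.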

It is obvious why this type of vulnerability would be deemed by Google or Amazon as a high priority. The other important point to consider is that many of the elements that enable this type of attack to work can be changed after the certification process. Changes to the intent model or core code base of an Alexa skill or Google Action require recertification, where a malicious feature like the one described might be discovered. However, changes to the audio file delivered or the text used to generate speech for a response can typically be altered without the need for recertification. That means existing Alexa skills or Google Actions that had already passed certification could potentially be altered to enable this type of attack once the methods were known.
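One way to picture the recertification gap is a check that compares the responses a voice app serves today against fingerprints recorded when it was certified. The sketch below is a hypothetical illustration, not a description of how Amazon or Google actually review voice apps. The names `fingerprint` and `changed_responses`, and the idea of storing a hash per response, are assumptions made for the example.

```python
import hashlib
from typing import Dict, List


def fingerprint(text: str) -> str:
    """Hash a response text so it can be compared without storing the text."""
    # "surrogatepass" lets unusual code points be hashed instead of raising.
    return hashlib.sha256(text.encode("utf-8", "surrogatepass")).hexdigest()


def changed_responses(certified: Dict[str, str], live: Dict[str, str]) -> List[str]:
    """Return names of live responses that differ from their certified fingerprint.

    `certified` maps a response name to the fingerprint recorded at review time.
    `live` maps a response name to the text currently being served.
    Responses that were never certified are reported as changed.
    """
    changed = []
    for name, text in live.items():
        if certified.get(name) != fingerprint(text):
            changed.append(name)
    return sorted(changed)
```

For example, if a “goodbye” response was certified as “Goodbye.” and is later swapped for a fake update prompt, `changed_responses` would return `["goodbye"]`, flagging it for another look.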

Attack 2: Eavesdropping Vulnerability

The second attack did not directly ask for private information but could be surreptitiously used to gather it by keeping the microphone open for extended periods after the user thought their voice app session had ended. The Today’s Lucky Horoscope Alexa skill is invoked in the researchers’ demonstration. After the user says “Stop,” the skill responds with “Goodbye” but the session doesn’t end. The researcher explains:

“As you can see the skill did not end after I said, ‘Stop,’ as it is supposed to. Instead, it kept running and sent the transcribed audio to the hacker. By creating such a malicious skill a hacker can passively gather sensitive information from an unsuspecting victim.”

This is not the first time an eavesdropping vulnerability has been identified on Alexa. In April 2018, researchers at Checkmarx demonstrated how an Alexa skill could transcribe comments made in the presence of an Echo smart speaker without the user’s awareness. In that case, the microphone was left open for 16 seconds after a skill session was ended and anything said by the user was recorded as slot values which are returned to the developer’s logs.

Amazon indicated at the time that this type of vulnerability was no longer able to get through its certification process because empty prompts that kept the microphone open would be detected in certification. However, that appears not to be the case. The SR Labs researchers simply replaced legitimate prompts with silent prompts after certification, which had the same effect of maintaining an open microphone and transcribing any utterances as slot values. It is not yet clear how Amazon is detecting these types of changes today.

However, according to SR Labs, Alexa does have a safeguard that limits the total time a session can be active: it requires certain trigger words to be uttered while the microphone is listening. These could be common words that often start sentences, chosen to maximize the opportunity for a direct match, but if they are not heard the session ends. By contrast, Google Assistant requires no trigger words for the session to remain open. The hacker could simply send short, silent recordings to the device intermittently and record all speech between these silences. This means in theory that “the hacker can monitor the user’s conversations infinitely,” according to SR Labs.

Privacy and Security Rise in Import

There have been many previous stories about voice assistant vulnerabilities but most of them were mere lab demonstrations on test voice apps or impractical to exploit on a large scale. The demonstrations by SR Labs are different. These Alexa skills and Google Actions were certified and may have been activated by some unsuspecting users. Since SR Labs discovered these vulnerabilities, it is likely that malicious hackers could have as well. Google’s aggressive review of third-party Actions last week seems justified given the potential consequences both reputationally and legally if there was an active malicious attack going on using these or similar techniques. Why the disruption occurred three months after disclosure and not sooner is unclear.

A core reason that stories like these vulnerabilities and the privacy compromises from contractors revealed over the summer are seen as big news is that voice assistants now matter. There are hundreds of millions of voice assistant users worldwide. That means privacy or security violations could suddenly have widespread impact if not addressed quickly. The attack surface of voice assistants is still not well understood so you can expect more attention to security concerns going forward. SR Labs offered this advice to Amazon and Google in the closing of their blog post:

“To prevent ‘Smart Spies’ attacks, Amazon and Google need to implement better protection, starting with a more thorough review process of third-party Skills and Actions made available in their voice app stores. The voice app review needs to check explicitly for copies of built-in intents. Unpronounceable characters like ‘�.’ and silent SSML messages should be removed to prevent arbitrary long pauses in the speakers’ output. Suspicious output texts including ‘password’ deserve particular attention or should be disallowed completely.”
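The checks SR Labs recommends can be sketched as a simple review pass over a voice app’s output text. This is a minimal illustration under stated assumptions, not either platform’s actual review tooling. The function names, the 10-second pause threshold, and the choice to treat unpaired surrogate code points as the “unpronounceable characters” are all assumptions for the example. The only SSML feature it relies on is the standard `<break time="..."/>` element.

```python
import re
from typing import List

# Matches SSML pauses such as <break time="10s"/> or <break time="500ms"/>.
BREAK_RE = re.compile(
    r'<break\s+time\s*=\s*"(\d+(?:\.\d+)?)(ms|s)"\s*/>', re.IGNORECASE
)
MAX_PAUSE_SECONDS = 10.0  # assumed threshold, chosen only for illustration
SENSITIVE_WORDS = ("password",)  # the one term named in the SR Labs advice


def total_pause_seconds(ssml: str) -> float:
    """Sum the durations of all <break> elements found in the text."""
    total = 0.0
    for value, unit in BREAK_RE.findall(ssml):
        seconds = float(value) / 1000.0 if unit.lower() == "ms" else float(value)
        total += seconds
    return total


def has_unpaired_surrogate(text: str) -> bool:
    """Assumed stand-in for 'unpronounceable characters': lone surrogates."""
    return any(0xD800 <= ord(ch) <= 0xDFFF for ch in text)


def review_output(text: str) -> List[str]:
    """Return a list of findings for one output text; empty means no flags."""
    findings = []
    lowered = text.lower()
    for word in SENSITIVE_WORDS:
        if word in lowered:
            findings.append(f"sensitive word: {word}")
    if has_unpaired_surrogate(text):
        findings.append("unpronounceable character")
    if total_pause_seconds(text) > MAX_PAUSE_SECONDS:
        findings.append("long silence")
    return findings
```

A check like this would only help if it also ran on output that changes after certification, since the attacks described above swapped in their prompts once review was complete.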
