Alexa Voice Search Update: Amazon Reduced Alexa Recommendation Errors by 12% Using a New Supervised Learning Mechanism

Amazon introduced Alexa recommendations earlier this year as a way to facilitate skill discovery. In May, Amazon introduced CanFulfillIntentRequest, an interface that lets a skill tell Alexa whether it can handle a given request. This makes skills easier for Alexa to find when users make generic requests.

Alexa historically matched user queries to skills based on the invocation name. If you didn’t know about the ZooKeeper skill and asked Alexa for a “lion sound,” Alexa was unlikely to match you with it. CanFulfillIntentRequest serves as a signal to help with the matching process: it gives developers a way to provide additional information about what a skill can do or what questions it can answer, which Alexa can draw on for future search queries.

Amazon researchers Joo-Kyung Kim and Young-Bum Kim presented a paper this week at the 2018 Conference on Empirical Methods in Natural Language Processing (EMNLP) describing a new technique to improve matching between skills like ZooKeeper and a user’s “lion sound” request. The technique reduced Alexa recommendation error rates by 12%.

Alexa Voice Search and Nameless Invocations

Image credit: Amazon

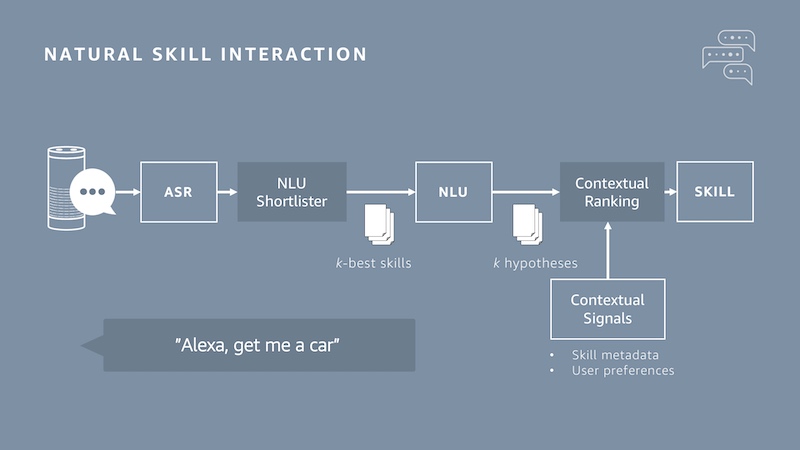

Amazon calls the topical searches that lead to skill recommendations “nameless invocations,” because a user doesn’t need to know a skill’s name to invoke it. Alexa has access to information about what skills can do, largely through CanFulfillIntentRequest data, and its natural language understanding (NLU) engine is designed to match a generalized topical intent with a specific Alexa skill.

In the image above, you can see the flow for a rideshare request. “Alexa, get me a car” leads the NLU to create a shortlist of skills that may be appropriate matches. Contextual signals such as skill metadata and the user’s past experience are then applied to rank the shortlisted skills. The ranking can be used to recommend a specific skill or simply to invoke it automatically. The goal is to deliver the intended skill, not just any skill that can fulfill the request. For example, you might want the Uber skill but say only “get me a car.” Amazon wants Alexa to understand whether you have a preference for such generalized requests or whether it should look for other options.
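
The two-stage flow (shortlist first, then rank with context) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Amazon’s system: the real shortlister is a neural model, whereas here a simple keyword overlap stands in for it, and the `Skill` fields, the skill name `RideFinder`, and the bonus weights for past use and account linking are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Skill:
    name: str
    keywords: Set[str]             # hypothetical stand-in for what a skill says it can handle
    used_before: bool = False      # contextual signal: user has invoked this skill before
    account_linked: bool = False   # stronger contextual signal: user linked an account


def shortlist(utterance: str, skills: List[Skill], k: int = 3) -> List[Skill]:
    """Stage 1: keep up to k skills whose keywords overlap the utterance (toy stand-in)."""
    words = set(utterance.lower().split())
    scored = [(len(words & s.keywords), s) for s in skills]
    scored = [(score, s) for score, s in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [s for _, s in scored[:k]]


def rerank(candidates: List[Skill], utterance: str) -> List[Skill]:
    """Stage 2: re-order the shortlist using contextual signals (made-up weights)."""
    words = set(utterance.lower().split())

    def score(s: Skill) -> float:
        relevance = len(words & s.keywords)
        # Account linking is weighted above past use, mirroring "a stronger signal".
        return relevance + 0.5 * s.used_before + 1.0 * s.account_linked

    return sorted(candidates, key=score, reverse=True)


skills = [
    Skill("Uber", {"car", "ride", "get"}, used_before=True, account_linked=True),
    Skill("RideFinder", {"car", "ride", "taxi", "get"}),
    Skill("ZooKeeper", {"lion", "sound", "animal"}),
]
query = "Alexa get me a car"
ranked = rerank(shortlist(query, skills), query)
print([s.name for s in ranked])
```

Both rideshare skills match the request equally well on keywords, so the contextual signals decide the order, while ZooKeeper never makes the shortlist. The bias concern raised below is visible even here: a fixed bonus for past use can outweigh a better-matching skill the user has never tried.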

Improving the Voice SEO Ranking Results

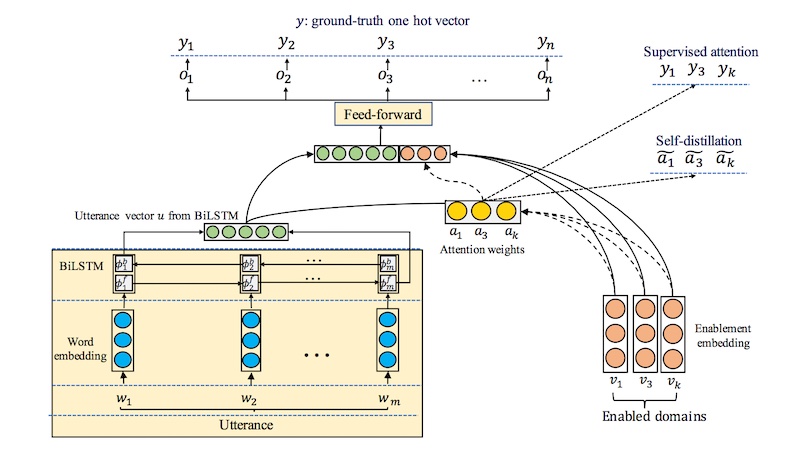

One of the “contextual signals” used for ranking is whether the user has previously used one of the shortlisted skills. An even stronger signal is whether the user has linked an account to that skill. However, the team was concerned that the unsupervised learning in the ranking mechanism was, in some cases, biased too strongly toward past use or account linking when another skill, potentially one the user had never used, would better fulfill the user’s intent. So the team made two important changes. First, they replaced softmax weights with sigmoid weights. According to a blog post by Young-Bum Kim:

Previously, the attention mechanism used a softmax function to generate the weights it applied to linked skills. With a softmax function, all the weights are between 0 and 1, and they must sum to 1. In our new paper, we instead use sigmoid weights, which also range from 0 and 1 but have no restrictions on their sum. This gives the system flexibility to indicate that none of the linked skills is a strong candidate or that more than one are [sic].
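
The difference between the two weightings can be illustrated with a small sketch. The functions below are the textbook softmax and sigmoid, not Amazon’s model, and the relevance scores (logits) for the linked skills are made-up numbers, not values from the paper.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Softmax: every weight lies in (0, 1) and the weights always sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(logits: List[float]) -> List[float]:
    """Element-wise sigmoid: every weight lies in (0, 1), with no constraint on the sum."""
    return [1.0 / (1.0 + math.exp(-z)) for z in logits]


# Hypothetical relevance scores for three linked skills.
weak_candidates = [-3.0, -3.5, -4.0]  # none of the linked skills is a good match
two_strong = [3.0, 3.0, -2.0]         # two linked skills are equally good matches

for label, logits in [("weak", weak_candidates), ("two strong", two_strong)]:
    sm, sg = softmax(logits), sigmoid(logits)
    print(label, "softmax:", [round(w, 3) for w in sm], "sum:", round(sum(sm), 3))
    print(label, "sigmoid:", [round(w, 3) for w in sg], "sum:", round(sum(sg), 3))
```

With the weak scores, softmax still hands the first skill roughly half of the total weight because the weights must sum to 1, while sigmoid keeps all three below 0.05, signaling that none is a strong candidate. With two strong scores, softmax splits the weight about evenly between them, whereas sigmoid can rate both near 0.95.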

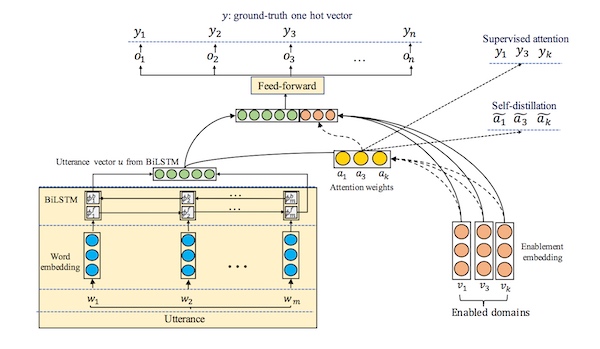

Second, they introduced self-distillation, a technique that feeds the neural network behind the NLU information from previous searches, including data on the variety of skills users invoked for similar queries. This “prevents the system from concentrating too heavily on a few enabled skills.”
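
The article does not spell out Amazon’s exact formulation. In the generic machine-learning sense, self-distillation retrains a model against a blend of the true labels and the same model’s earlier soft predictions, which rewards keeping some probability on plausible alternatives. The sketch below shows only that generic idea; `blended_targets`, the `alpha` mixing weight, and all probabilities are hypothetical and not taken from the paper.

```python
import math
from typing import List


def blended_targets(hard_label: int, teacher_probs: List[float], alpha: float) -> List[float]:
    """Mix a one-hot label with an earlier model's soft predictions.

    alpha = 0 is ordinary one-hot training; a larger alpha keeps more of the
    earlier model's probability mass on alternative skills.
    """
    one_hot = [1.0 if i == hard_label else 0.0 for i in range(len(teacher_probs))]
    return [(1 - alpha) * h + alpha * t for h, t in zip(one_hot, teacher_probs)]


def cross_entropy(targets: List[float], predicted: List[float], eps: float = 1e-12) -> float:
    """Cross-entropy of predicted probabilities against a target distribution."""
    return -sum(t * math.log(p + eps) for t, p in zip(targets, predicted))


# Hypothetical numbers: three candidate skills for one request.
teacher = [0.6, 0.3, 0.1]  # earlier model's predicted distribution (made up)
label = 0                  # index of the skill the user actually invoked
targets = blended_targets(label, teacher, alpha=0.5)  # roughly [0.8, 0.15, 0.05]

overconfident = [0.98, 0.01, 0.01]  # puts almost everything on one skill
spread = [0.8, 0.15, 0.05]          # keeps some mass on the alternatives

# Against the blended targets, the overconfident prediction incurs the higher loss.
print(cross_entropy(targets, overconfident), cross_entropy(targets, spread))
```

With plain one-hot targets the comparison flips: the overconfident prediction scores the lower loss (about 0.02 versus 0.22), which is the kind of concentration on a few skills the quoted passage says the technique guards against.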

Multiple problems are being addressed here, all in the name of providing an optimal user experience. Can the neural network identify Alexa skills that are relevant to a generic request rather than tied to an existing skill name? Can it rank potential results using contextual information to increase the likelihood of delivering the skill best aligned with the user’s request? And can the system take context into account without leaning on it so heavily that it produces a suboptimal response? Amazon engineers are working to optimize around all three questions.

The Implication for Voice Assistant SEO

From a search perspective, note that the examples above never consult a web search engine such as Bing or its knowledge graph. Ranking in position zero for a web search will not help you rank for the queries described by the researchers. You must have an Alexa skill to be considered for the shortlist and then to be ranked. In theory, the shortlister could gather a web search result, but that result would immediately be ranked below Alexa skills. A lower ranking typically means your result will not even be mentioned to the user, since Alexa is likely to deliver only the top result.

Companies that want to rank for voice searches run through voice assistants should be aware that voice apps will take priority over position zero in a web search. This is similar to Google Assistant’s approach to ranking, based on a 2017 Voicebot Podcast interview with Google’s Brad Abrams. It’s time to recognize that voice assistant SEO is quite different from voice SEO through a browser.
