Yanny or Laurel Explained by Speech Experts and the Internet of Course

Voice technology is doing well this month in terms of viral social media trends. First, we had the hullabaloo around Google Duplex and the implications of putting AI-based voice assistants into the service of consumers. This past week, we were introduced to the “Yanny or Laurel” controversy. A poor-quality recording has about half of the denizens of social media claiming “Team Yanny” and the remainder “Team Laurel.” Listen for yourself if you have somehow avoided this topic.

What do you hear?! Yanny or Laurel pic.twitter.com/jvHhCbMc8I

— Cloe Feldman (@CloeCouture) May 15, 2018

Voice AI Technology Weighs In

I had the opportunity to conduct a quick email interview Friday with Dr. Nils Lenke, Senior Director of Innovation Management at Nuance Communications. Dr. Lenke attended the Universities of Bonn, Koblenz, Duisburg and Hagen, where he earned an M.A. in Communication Research, a Diploma in Computer Science, a Ph.D. in Computational Linguistics, and an M.Sc. in Environmental Sciences. He joined Nuance in 2003 after spending nearly a decade in various roles at Philips Speech Processing. Nuance put the “Yanny or Laurel” controversy to the test using its Dragon speech recognition software. The result, according to Dr. Lenke:

“Dragon hears ‘Laurel.’ Speech recognition technology today is based on artificial neural networks that are supposed to mimic the way the human brain works. We train these networks on thousands of hours of human speech, so it can learn how the phonemes–the smallest building blocks of language–are pronounced. But it is clearly not an exact copy of how we as humans hear and interpret sounds, as this example shows. So we can all still feel ‘special’– especially those who hear ‘Yanny’!”

Dr. Nils Lenke Interview

Voicebot: I have seen some reporting on this topic suggesting that how we hear different sounds, such as the level of bass and treble, impacts the outcome. Can you explain how human hearing differs in some regards from what Dragon hears?

Dr. Nils Lenke: Both Dragon and humans “measure” the energy in select frequency bands, which are especially significant to distinguish phonemes, the building blocks of words. For Dragon, these frequency bands will always be the same; whereas for humans, things change as we get older, e.g. we hear higher frequency less well compared to when we were younger.
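To make the idea of “measuring” energy in frequency bands concrete, here is a minimal Python sketch. It is not Nuance's front end; the function name `band_energies`, the two bands, and the synthetic test tone are all assumptions chosen for illustration. It runs a naive discrete Fourier transform over a short signal and adds up the power that lands in each band.

```python
import cmath
import math


def band_energies(samples, sample_rate, bands):
    """Return the spectral energy falling inside each (low_hz, high_hz) band."""
    n = len(samples)
    energies = [0.0] * len(bands)
    # Naive DFT over the non-negative frequency bins (fine for short clips).
    for k in range(n // 2 + 1):
        freq = k * sample_rate / n
        coeff = sum(
            s * cmath.exp(-2j * math.pi * k * t / n)
            for t, s in enumerate(samples)
        )
        power = abs(coeff) ** 2
        for i, (low, high) in enumerate(bands):
            if low <= freq < high:
                energies[i] += power
    return energies


# Hypothetical test signal: a strong 300 Hz tone plus a weaker 3000 Hz tone.
SAMPLE_RATE = 8000
signal = [
    math.sin(2 * math.pi * 300 * t / SAMPLE_RATE)
    + 0.3 * math.sin(2 * math.pi * 3000 * t / SAMPLE_RATE)
    for t in range(400)
]

# Hypothetical bands: one "bass" band and one "treble" band, in Hz.
BANDS = [(0, 1000), (1000, 4000)]
low_energy, high_energy = band_energies(signal, SAMPLE_RATE, BANDS)
print(f"low band: {low_energy:.0f}, high band: {high_energy:.0f}")
```

A recognizer with fixed bands would compute the same numbers for the same clip every time. A listener whose sensitivity to the upper band has faded would, in effect, be working with a smaller `high_energy` value for the same recording.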

Voicebot: How does Dragon break down sound and translate it into text for a process like a single word utterance?

Dr. Lenke: This is centered on so-called phonemes, the smallest entities of a language. You can think of phonemes like what characters are in written languages. Most languages have around 40 phonemes. A lexicon tells Dragon how each word is composed of phonemes, and during training, the acoustic model learns how each phoneme is pronounced by many different people in many different contexts. When Dragon then analyzes speech, it compares what it hears to what it learned during training to identify the most likely phonemes, and then uses the lexicon to figure out which word this might be. A second knowledge source also helps, the so-called Language Model. It knows which words are more frequent than others and in which order words typically follow each other, e.g. “the” is more frequent than “phoneme” and “good morning” is more common than “morning good.” For a single word, the word order doesn’t help, but the frequency does.
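The interplay of lexicon, acoustic evidence, and word frequency described above can be sketched as a toy single-word recognizer. This is a rough illustration under stated assumptions, not how Dragon is implemented: the phoneme labels, the evidence values, and the word frequencies are all made up, and the acoustic score is a crude average rather than a real acoustic model.

```python
import math

# Hypothetical lexicon: each word spelled out as a list of phoneme labels.
LEXICON = {
    "laurel": ["L", "AO", "R", "AH", "L"],
    "yanny": ["Y", "AE", "N", "IY"],
}

# Hypothetical acoustic evidence: how strongly each phoneme seems present
# in an ambiguous clip (values invented for this example).
EVIDENCE = {
    "L": 0.5, "AO": 0.4, "R": 0.5, "AH": 0.4,
    "Y": 0.5, "AE": 0.45, "N": 0.5, "IY": 0.45,
}

# Hypothetical language model: relative word frequencies.
WORD_PRIOR = {"laurel": 0.7, "yanny": 0.3}


def acoustic_score(word, evidence):
    """Average log-evidence of the word's phonemes (length-normalized)."""
    phonemes = LEXICON[word]
    return sum(math.log(evidence[p]) for p in phonemes) / len(phonemes)


def recognize(evidence, prior):
    """Pick the word with the best combined acoustic and frequency score."""
    return max(
        LEXICON,
        key=lambda w: acoustic_score(w, evidence) + math.log(prior[w]),
    )


print(recognize(EVIDENCE, WORD_PRIOR))                      # frequency-weighted
print(recognize(EVIDENCE, {"laurel": 0.5, "yanny": 0.5}))   # acoustics only
```

With these invented numbers the acoustic evidence alone leans slightly toward “yanny,” but the assumed word frequencies tip the combined score to “laurel.” That is the sense in which frequency can still help with a single word even though word order cannot.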

Voicebot: Would there be any way that Dragon would hear this as anything other than Laurel? For example, by changing something in the input or processing?

Dr. Lenke: Yes. For example, the front-end analysis could be changed so that Dragon checks on slightly different frequency bands (simulating aging or other changes in humans). Or one could use the language model to make other words much more probable than “Laurel,” favoring them if the acoustic side alone is ambiguous. In our experiments we just used Dragon “out of the box.”

Voicebot: Are you aware of other instances (i.e. words or utterances) where this has occurred?

Dr. Lenke: Like humans who occasionally mishear, ASR [automated speech recognition] is also not perfect and can make mistakes; however, the type of errors can be different from human errors. For example, in the case of a very long word, humans sometimes struggle, but these are very easy for ASR because they contain many phonemes that can be used to distinguish them from other words. On the other hand, very short words like “yes” can be a problem sometimes, because there are less phonemes.

The Internet Weighs In

It appears that science and other AI are on the side of Dragon. Vocalize.ai CEO Joe Murphy decided to determine whether Google Assistant and Amazon Alexa heard Yanny or Laurel. It turns out that both heard Laurel and then spelled the name out correctly.

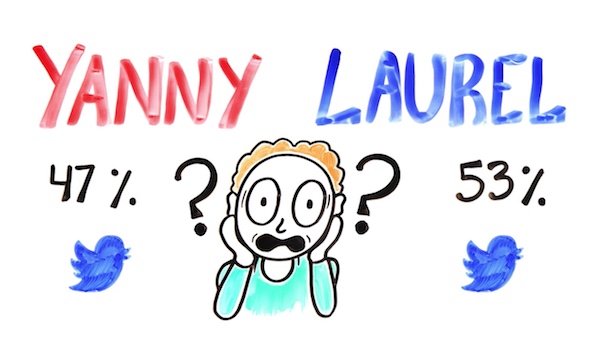

Those of you who hear “Yanny” are not alone. A super-scientific Twitter poll found that 47% of people hear “Yanny.” This may even be a sign of better hearing because sensitivity to the higher-pitched frequencies that cause people to hear “Yanny” often degrades with age or hearing damage. The other culprit is the quality of the speaker or headphones used to listen to the track. Lower-quality speakers and headphones are more likely to cause people to hear Yanny instead of Laurel because their bass frequencies are less robust. The video below from Aesop Science also shows that the “acoustic features of Yanny and Laurel are more similar than you might think.”

Maybe We Should Put More Trust in AI After All

“Yanny or Laurel” demonstrates that speech and hearing are complex and not necessarily deterministic. With all of the explanations, scientists are perplexed that some people hear Yanny and later hear Laurel, even when using the same equipment to listen to the utterance. Subsequent listening that changes the word heard would also seem to overcome the impact of priming. This means there are likely factors that go beyond equipment and psychology to influence what we hear and how we interpret speech. It is equally interesting that automated speech recognition (ASR) technologies from Nuance, Amazon and Google all interpreted the word correctly. Maybe voice AI is better than human hearing after all.

That leaves us with one final question to answer that I’m sure is the only reason you have read this far. What did I hear? Well, I was on a plane listening through high-quality noise-canceling headphones the first time I heard the clip and definitely heard “Yanny.” Later, using the same headphones, another set of headphones and my computer speaker, I could only hear “Laurel.” So, I’m in the exclusive “both” camp, but leaning “Team Laurel.” Could a pressurized cabin or ambient noise impact how we hear pitch in speech? Since sound is all about pressure, my guess is yes. For you “Laurel” types who want to get the “Yanny” experience, I suggest you try it at 30,000 feet and let me know what you hear.
