Google Home Beats Amazon Echo in Two Audio Recognition Performance Tests, But Alexa Delivers Highest Composite Score

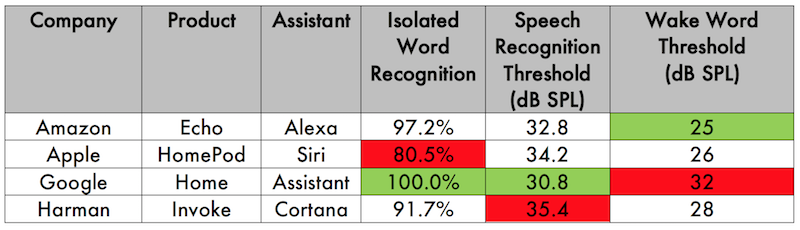

A new study by Vocalize.ai compares the audio recognition performance of four smart speakers: Amazon Echo, Apple HomePod, Google Home and the Harman Kardon Invoke, which uses Microsoft’s Cortana voice assistant. Google was the top performer in two of the three tests: isolated word recognition and speech recognition threshold. Amazon Echo came in second in both of those tests but took the top spot for wake word threshold, where Google came in last. As a result, if each test is weighted equally, Amazon Echo has the best overall score, followed by Google and Apple, with Harman Kardon in last place.

What we see is two tiers of performance, with Amazon and Google materially outperforming Apple and Harman Kardon. The study focused on two questions: “Can you hear me?” and “Can you understand me?” Combined, the tests provide insight into the automatic speech recognition (ASR) and natural language understanding (NLU) performance of each smart speaker and voice assistant pairing. Vocalize.ai researchers consider these results meaningful and repeatable, but also note that additional testing will provide a more refined understanding of the performance differences.
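The article does not spell out how the equal-weight composite was computed, so the sketch below assumes the simplest version: average each device's rank across the three tests (1 = best) and sort. This is an assumption, not Vocalize.ai's published method, and `composite_ranks` is a hypothetical helper. Plugging in the ranks reported for Echo (2nd, 2nd, 1st) and Google Home (1st, 1st, last of four) shows how a single last-place finish can outweigh two wins.

```python
def composite_ranks(ranks_by_test):
    """Average each device's per-test rank (1 = best) with equal weights.

    ranks_by_test maps a test name to {device: rank}. Every test must rank the
    same devices. Returns (device, mean_rank) pairs sorted best-first, i.e. by
    ascending mean rank.
    """
    tests = list(ranks_by_test.values())
    devices = tests[0].keys()
    mean_rank = {d: sum(test[d] for test in tests) / len(tests) for d in devices}
    return sorted(mean_rank.items(), key=lambda item: item[1])


# Ranks reported in the article for the two leaders (1 = best, 4 = last).
ranks = {
    "isolated_word": {"Echo": 2, "Home": 1},
    "speech_recognition_threshold": {"Echo": 2, "Home": 1},
    "wake_word_threshold": {"Echo": 1, "Home": 4},
}
print(composite_ranks(ranks))  # Echo mean rank ~1.67, Home 2.0
```

Rank averaging ignores the size of each gap (Google's perfect isolated word score counts the same as a narrow win); a score-normalized composite could order the devices differently.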

The Tests

Vocalize.ai founder Joseph Murphy says that his company is applying test techniques from audiology. The report outlines the rationale by saying:

“For the initial task of assessing the hearing capabilities of virtual assistants and their associated recognizer engines, vocalize.ai is leveraging expertise from the world of audiology; the branch of science that studies hearing, balance and related disorders. Working with talented audiologists and university staff enables us to identify procedures and algorithms which can be modified for application to virtual assistants.”

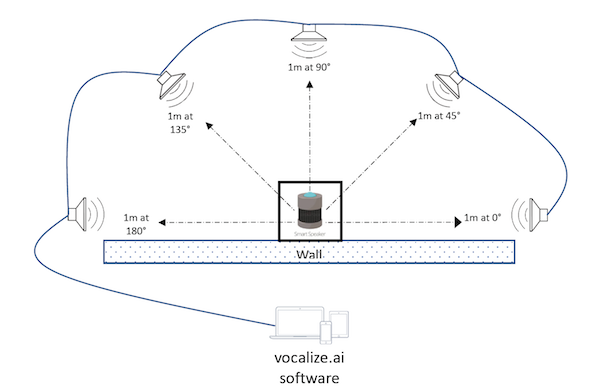

The test setup placed each smart speaker one meter from five test speakers connected to the Vocalize.ai test software. The test speakers issued audio commands to the smart speaker in a consistent manner and were positioned at 0, 45, 90, 135 and 180 degrees, as depicted in the diagram above.
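As a rough illustration of the rig geometry, the sketch below computes where the five test speakers sit relative to the device. The 1 m radius and the five bearings come from the article; the coordinate convention (0 degrees on the +x axis, angles increasing counter-clockwise) and the `speaker_positions` helper are hypothetical.

```python
import math

RADIUS_M = 1.0                      # device-to-test-speaker distance (from the article)
ANGLES_DEG = (0, 45, 90, 135, 180)  # test-speaker bearings (from the article)


def speaker_positions(radius_m=RADIUS_M, angles_deg=ANGLES_DEG):
    """Return (angle_deg, x_m, y_m) for each test speaker, device at the origin.

    Hypothetical convention: 0 degrees lies on the +x axis and angles increase
    counter-clockwise, so the five speakers trace a half-circle around the device.
    """
    positions = []
    for angle in angles_deg:
        theta = math.radians(angle)
        positions.append((angle, radius_m * math.cos(theta), radius_m * math.sin(theta)))
    return positions


for angle, x, y in speaker_positions():
    print(f"{angle:>3} deg: x={x:+.3f} m, y={y:+.3f} m")
```

Because every source is equidistant, any difference in results across positions would reflect direction of arrival rather than distance.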

Isolated Word Recognition Test

The first test was called Isolated Word Recognition. “Each virtual assistant was tasked with recognizing 36 spondaic words (two syllable words with equal stress on each syllable). If a word was not recognized by the virtual assistant on the first attempt, it was repeated. If either the first or second attempt was successful the result would be a pass. If both the first and second attempt were unsuccessful, the result would be a fail.”

| Company | Product | Assistant | Isolated Word Recognition |
|---|---|---|---|
| Amazon | Echo | Alexa | 97.2% |
| Apple | HomePod | Siri | 80.5% |
| Google | Home | Assistant | 100.0% |
| Harman Kardon | Invoke | Cortana | 91.7% |

Google Home had a perfect score in the Isolated Word Recognition Test, followed by Amazon Echo and Harman Kardon Invoke. Apple’s HomePod trailed the others with only an 80.5% success rate. Test performance was likely impacted by both the ASR and NLU capabilities of the devices.
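The pass/fail rule in the quoted procedure reduces to simple arithmetic: with 36 words, each miss costs 100/36 ≈ 2.8 percentage points, so Echo's 97.2% corresponds to 35 of 36 words and Invoke's 91.7% to 33 of 36. The hypothetical `score_isolated_words` helper below is a minimal sketch of that rule; the per-attempt data format is an assumption.

```python
def score_isolated_words(outcomes):
    """Percentage of words passed under the two-attempt rule.

    outcomes holds one list per word with the result of each attempt, e.g.
    [True] (first-try pass), [False, True] (pass on retry) or [False, False] (fail).
    Only the first two attempts count toward a pass.
    """
    passed = sum(1 for attempts in outcomes if any(attempts[:2]))
    return 100.0 * passed / len(outcomes)


# Hypothetical run: 34 first-try passes, 1 retry pass, 1 double miss out of 36 words.
outcomes = [[True]] * 34 + [[False, True]] + [[False, False]]
print(round(score_isolated_words(outcomes), 1))  # 97.2
```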

Speech Recognition Threshold

Speech Recognition Threshold was the second test. It measured “the sound pressure (dB SPL) at which the virtual assistant can recognize speech with at least 50% accuracy.” Testing was conducted at one meter, “between 30 and 50 dB SPL between the frequencies of 250 to 4,000 Hz.” The outer blue line in each radar plot below shows the reference performance of standard human hearing; in each case, less white space at the top of the chart means better performance. Google Home materially outperformed its competitors, with Amazon Echo and HomePod ranking second and third, respectively, and the Harman Kardon Invoke even further behind.
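The threshold definition quoted above (the level at which recognition reaches at least 50% accuracy) can be sketched as a search over a sweep of presentation levels. This is a simplified stand-in: the article does not describe Vocalize.ai's audiology-derived procedure in detail, and the function name and sweep values below are hypothetical.

```python
from typing import Dict, Optional


def speech_recognition_threshold(accuracy_by_level: Dict[float, float],
                                 criterion: float = 0.5) -> Optional[float]:
    """Lowest tested level (dB SPL) whose recognition accuracy meets the criterion.

    accuracy_by_level maps a presentation level in dB SPL to the fraction of
    items recognized at that level. Assumes accuracy rises roughly
    monotonically with level. Returns None if no tested level qualifies.
    """
    passing = [level for level, acc in accuracy_by_level.items() if acc >= criterion]
    return min(passing) if passing else None


# Hypothetical sweep for one frequency band over the 30-50 dB SPL test range.
sweep = {30: 0.10, 35: 0.35, 40: 0.55, 45: 0.85, 50: 1.00}
print(speech_recognition_threshold(sweep))  # 40
```

Repeating the search for each test frequency between 250 and 4,000 Hz would yield one threshold per band, the kind of per-axis value a radar plot can display.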

The Vocalize.ai researchers pointed out that this test measures performance of the microphone arrays of the smart speakers. They also noted that Google performed well despite having fewer microphones in its array.


Wake Word Threshold

The Wake Word Threshold test was similar to the Isolated Word Recognition test, but the spondaic words were replaced with the assigned “wake word” or “activation phrase” for each voice assistant, such as “Alexa,” “Hey Siri” and “OK Google.” Here the best performer was Amazon Echo at 25 dB SPL and the worst was Google Home at 32 dB SPL (N.B. lower numbers are better, because the test determines which device works best at lower audio volumes). This test did not attempt to measure false positives, but rather the reliability of recognizing a wake word uttered at lower volumes and, in turn, from longer distances.
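To connect lower volumes with longer distances, a textbook idealization helps: in a free field, the sound pressure level from a point source drops by 20 log10(r2/r1) dB as distance grows from r1 to r2, about 6 dB per doubling. Under that assumption (ours, not the study's; rooms with reflections behave differently), the 7 dB gap between Echo's 25 dB SPL and Google Home's 32 dB SPL wake word thresholds would correspond to roughly 2.2 times the distance for the same talker. The `distance_ratio` helper is hypothetical.

```python
import math


def distance_ratio(delta_db: float) -> float:
    """How many times farther a talker could stand for a given threshold advantage.

    Idealized free-field point source: level falls by 20*log10(r2/r1) dB as
    distance grows from r1 to r2. Reverberant rooms deviate from this, so
    treat the result as a rough idealization only.
    """
    return 10 ** (delta_db / 20)


print(f"{20 * math.log10(2):.2f} dB per doubling")  # 6.02 dB per doubling
print(f"{distance_ratio(32 - 25):.2f}x")            # 2.24x
```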

Interpreting The Results

Vocalize.ai’s Mr. Murphy is confident that voice interaction with technology will become increasingly commonplace and that consumers and manufacturers will need an independent lab that verifies marketing claims about performance. Murphy commented in an interview with Voicebot:

“Every emerging technology-based industry ultimately benefits from vendor-neutral performance testing. In the absence of other sources of unbiased testing, Vocalize.ai is trying to fill an important role and has the first comparative data available publicly. We look forward to this being put to the test by other labs and seeing the results of Vocalize’s next round of voice assistant and smart speaker performance testing.”
