Is Google Duplex really that incredibly bad(-ass)?

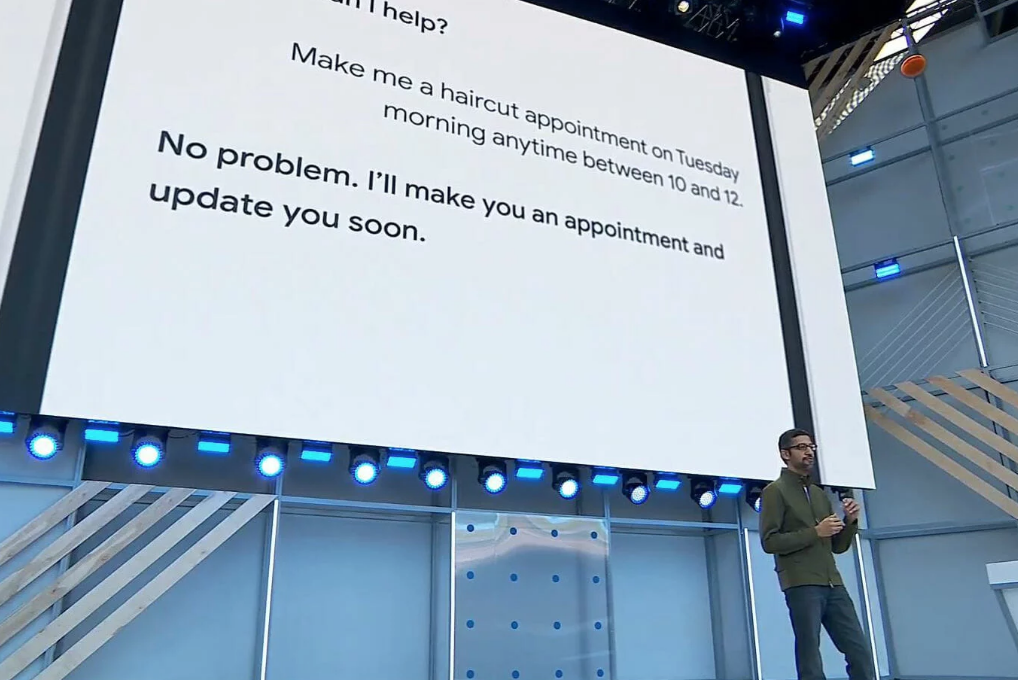

If you can’t automate the backend, automate the frontend

About 2 years ago, I wrote about how the big tech players are, unfortunately, vastly over-promising on the idea of a “personal assistant.” I argued that there won’t be any true assistants that get stuff done for you in the real world unless each and every business out there offers standardized APIs for bots to access their business processes and products. If there are no APIs, there are no chatbots. In the 1990s and 2000s, it took many years for businesses to realize that they needed a website, but eventually even the smallest business ended up having one. Today, without a website, you essentially don’t exist. It will take many more years for businesses to realize they need APIs to not get left behind in what you could call the “AI economy”… unless… well, unless you do what Google seems to be doing with Google Duplex.

Doomsayers United in Google Duplex Opposition

Many gloomy voices have arisen in the days since Google announced this new capability of an AI calling businesses on your behalf. Here are some responses I saw on various LinkedIn threads:

“All those jobs going!”

“And that would be the end of us – Scary what is going to happen in our lifetime!”

“What could POSSIBLY go wrong?”

Can it get any more dramatic? Doomsayers unite! Engage in your favorite pastime and conjure up the end of the world! I think it’s time to pull out my favorite tech quote of all time once more, by researcher Roy Amara:

“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

As Professors Erik Brynjolfsson and Andrew McAfee explain so eloquently in their brilliant HBR piece The Business of Artificial Intelligence:

“If someone performs a task well, it’s natural to assume that the person has some competence in related tasks. But ML systems are trained to do specific tasks, and typically their knowledge does not generalize. The fallacy that a computer’s narrow understanding implies broader understanding is perhaps the biggest source of confusion, and exaggerated claims, about AI’s progress. We are far from machines that exhibit general intelligence across diverse domains.”

No jobs are being lost any time soon because of this, and our end is not nigh. AI does have implications for society, but those are being discussed at length already and are not the focus of my writing here. I believe the simple reality that Google saw is the one I described in my post from 2016: in order to build useful personal assistants, we need a shortcut. In a nutshell:

If you can’t automate the backend (yet), automate the frontend!

Or, as Roger Kibbe rightly noted in a voicebot.ai forum:

“It used to be screen scraping. Now, it’s voice scraping.”

Picking the Jaws Back Up From the Floor

Let’s have a peek behind the technology of Google Duplex. While I am not part of the engineering group behind this, I have 15+ years of voice technology experience to draw on and understand what’s involved in building such a system.

What most people found so incredible was the quality of the voice prompts. However, this is actually relatively straightforward to achieve. Simply pre-record all the message parts you need, not with a voice actor in a studio, but taken from a corpus of real calls. In my experience, even voice actors normally cannot act this well. But if you grab the “yeah, hi, I’d like to make a, uh, reservation please?” part of a real call and dump it in an audio file called, say, reservation_start.wav, then all you need to do is play it back on a phone call. This is technology that IVR (Interactive Voice Response) systems have been using literally for decades. (By the way, you can hear the concatenation points where the time designations and the pre-recorded parts are glued together; listen closely to “do you have anything between, uhm, 10am and … 12pm”, where the “12pm” clearly stands out acoustically.)
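To make the concatenation idea concrete, here is a minimal Python sketch of how a prompt with variable time slots could be assembled from pre-recorded clips. It illustrates the classic IVR technique described above and is not Google's actual implementation. Apart from reservation_start.wav, every file name and function name is hypothetical, and the sketch only handles full hours.

```python
from datetime import time

# Hypothetical inventory of pre-recorded carrier phrases.
# Only reservation_start.wav is named in the article; the rest are invented.
CARRIER_PROMPTS = {
    "reservation_start": "reservation_start.wav",
    "anything_between": "do_you_have_anything_between.wav",
    "and": "and.wav",
}


def time_clip(t: time) -> str:
    """Return the (hypothetical) clip file name for a full-hour time, e.g. 10am."""
    if t.minute != 0:
        raise ValueError("this sketch only covers full hours")
    hour12 = t.hour % 12 or 12          # 0 -> 12, 13 -> 1, and so on
    suffix = "am" if t.hour < 12 else "pm"
    return f"time_{hour12}{suffix}.wav"


def build_time_range_question(start: time, end: time) -> list[str]:
    """Glue carrier phrases and time clips into one ordered playlist."""
    return [
        CARRIER_PROMPTS["anything_between"],
        time_clip(start),
        CARRIER_PROMPTS["and"],
        time_clip(end),
    ]


playlist = build_time_range_question(time(10), time(12))
print(playlist)
```

The seams between list entries are exactly the concatenation points you can sometimes hear: each time clip was recorded separately from the carrier phrase around it.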

Add to that:

- State-of-the-art speech recognition (speech-to-text) from Google

  - Credit where it’s due: these systems have made tremendous advances in recent years thanks to Deep Learning, which allows them to get over the “dead end” that older Machine Learning algorithms always faced – and Google is clearly a leader here

- Natural Language Understanding (NLU, aka text-to-meaning) rules for the domain at hand

- Dialog design that caters for all possible answers to your initial message and all possible paths the conversation could take from there on
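The "NLU rules for the domain at hand" item can be as simple as a handful of patterns applied to the speech recognizer's transcript. The following is a rough sketch under that assumption; the intent labels and regular expressions are hypothetical, invented for a restaurant-booking domain, and a production rule set would be far larger and tuned on real calls.

```python
import re

# Hypothetical intent rules, checked in order; the first match wins.
RULES = [
    ("ask_party_size", re.compile(r"\bhow many (people|guests)\b")),
    ("ask_time", re.compile(r"\bwhat time\b|\bwhen\b")),
    ("reject", re.compile(r"\b(no|sorry|fully booked|nothing available)\b")),
    ("confirm", re.compile(r"\b(yes|sure|okay|ok|that works)\b")),
]


def understand(transcript: str) -> str:
    """Map a speech-to-text transcript to a coarse intent label (text-to-meaning)."""
    text = transcript.lower()
    for intent, pattern in RULES:
        if pattern.search(text):
            return intent
    return "unknown"


print(understand("For how many people?"))
print(understand("Sorry, we are fully booked"))
```

Rules like these only work because the domain is narrow. A phrase such as "no problem" would be misread as a rejection here, which is the kind of case the tuning effort goes into.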

And voilà, you’re essentially there. You can build such a system yourself using telecom APIs from companies such as Aspect or Twilio to make outbound phone calls, upload your audio files for the bot’s prompts, and use dialog flow management tools such as Aspect CXP (or your own server-side scripting) to orchestrate the conversation. But make no mistake: despite the narrow domain, perfecting this can take months of building and tuning.
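As an illustration of the "own server-side scripting" option, here is a minimal dialog-flow sketch in plain Python. It deliberately leaves out telephony and speech recognition: it assumes some other component has already turned each caller utterance into an intent label. The states, intent labels, and all file names except reservation_start.wav are hypothetical.

```python
# Hypothetical dialog graph:
# state -> (prompt file to play, {recognized caller intent -> next state})
DIALOG = {
    "greet": ("reservation_start.wav", {
        "ask_time": "give_time",
        "ask_party_size": "give_party_size",
        "reject": "goodbye",
    }),
    "give_time": ("time_range.wav", {
        "confirm": "thanks",
        "ask_party_size": "give_party_size",
        "reject": "goodbye",
    }),
    "give_party_size": ("party_size.wav", {
        "confirm": "thanks",
        "ask_time": "give_time",
        "reject": "goodbye",
    }),
    "thanks": ("thanks_bye.wav", {}),        # terminal state
    "goodbye": ("ok_thanks_anyway.wav", {}),  # terminal state
}
FALLBACK_PROMPT = "sorry_could_you_repeat.wav"


def run_dialog(intents, start="greet"):
    """Walk the graph for a sequence of caller intents.

    Returns the final state and the prompts played, in order.
    """
    state = start
    played = [DIALOG[state][0]]
    for intent in intents:
        transitions = DIALOG[state][1]
        if not transitions:              # terminal state reached, call is over
            break
        next_state = transitions.get(intent)
        if next_state is None:           # unexpected answer: re-prompt, stay put
            played.append(FALLBACK_PROMPT)
            continue
        state = next_state
        played.append(DIALOG[state][0])
    return state, played


print(run_dialog(["ask_time", "confirm"]))
```

Even this toy graph shows where the months of tuning go: every state needs a transition for every plausible answer, plus a sensible fallback for everything else.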

I don’t want to downplay what Google did here, but it’s not magic. They are leveraging existing technology and executing on it really well – and creatively. At the end of the day, that is what innovation often is all about: connecting the dots in new ways, without necessarily inventing new “dots.”

Taking the Concerns Seriously

Now, let’s have a look at some of the other concerns that have been brought up.

“The incidents of ‘robo-calling’ are going to only increase with this ‘feature.’”

No. As Google states: “The technology is directed towards completing specific tasks, such as scheduling certain types of appointments.” End of story. And you will access this through the Google Assistant, which means that Google can control which numbers to call and make sure they’re only calling numbers they know are actual business numbers. In addition, why would a company not take a call that promises more business?

“It’s not far when Bots will be talking to Bots.”

Yes! That would be ideal actually, but using APIs, not using human language, which is so incredibly ambiguous.

In response to the above: “They already did and they very quickly developed their own grammar that was unintelligible to people.”

No. This is not exactly what happened when Facebook experimented with negotiation bots, as Vadim Berman explained to us in this post last year: The secret language of chatbots. Google acknowledges that we are yet again at the beginning of something new and learning is part of the journey.

“We want to be clear about the intent of the call so businesses understand the context. We’ll be experimenting with the right approach over the coming months.”

Google might not be known for always finding the right balance between innovation and human or societal concerns, but they have hired incredible talent in recent years and months to get conversational AI right.

Will This Really Be Resisted?

Some commentators are questioning the point of the entire exercise. How much time does the Assistant really save us with such a feature, and for those few minutes, do we really want to start talking to machines? Well, we already are: to Siri, Alexa, Cortana. All it really takes for this to get adopted is to change the initial message: “Hi, this is the Google Assistant calling on behalf of a client…” And Google has already acknowledged – after the immediate outcry of critics – that their solution will have “disclosure built-in.” Will some business owners hang up? Maybe. Those who stay on might ask “are you a machine?” Google can respond to that with a humorous remark, while sticking to the point. And by the next call, this will be adopted and become just one more way that technology helps get stuff done and de-clutter our lives. We’ll then move on to the next shocker of the week.

The fact remains that there are a gazillion businesses (60% of small businesses in the US, according to Sundar Pichai) that haven’t introduced online booking systems and won’t any time soon, let alone through APIs. The companies that are getting called should welcome the business! They might not get it otherwise from the people using Google’s Assistant (an increasing number). If they don’t appreciate calls by robots, then maybe Google indirectly achieves what they really want: accelerating the adoption of eCommerce by small businesses to facilitate more business by reducing friction in the buying process. Is that far-fetched?

Let me finish by recognizing once again how humans show an unparalleled emotional reaction to technology that uses language and conversation as its user interface – something so innately human and formative to our identity, culture, and society. It’s a powerful instrument that can touch us on a level technology hasn’t been able to reach yet. We should be able to find ways to use it to our advantage, while balancing the implications responsibly: Why conversational AI matters.

About the Author

Tobias Goebel is Director of Emerging Technologies at Aspect and has worked with voice technology for nearly twenty years. He has 15 years of experience in customer care technology and the contact center industry with roles spanning engineering, consulting, pre-sales engineering, program and product management, and product marketing. As part of Aspect’s product management and marketing team today, he works on defining the future of the mobile customer experience, bringing together channels such as mobile apps, messaging, voice, and social. He is a frequent speaker and blogger on topics around customer service and, more recently, the (re-)emerging chatbot, NLP, and AI technologies. Tobias holds degrees in Computational Linguistics, Phonetics, and Computer Science from the universities of Bonn, Germany and Edinburgh, UK.