Voice Will Be the Primary User Interface Says Lola’s Bryan Healey

Bryan Healey is Director of Artificial Intelligence at Lola, which provides a personal travel service through a smartphone app. Prior to Lola, Bryan worked at Amazon on the machine learning platform for the Alexa Voice Service. He recently wrote an article for Recode.net under the title, “Your voice will soon become the primary way to interact with all machines.” That’s a compelling and audacious title, so Voicebot reached out to learn more about Bryan’s perspective.

In your Recode article, you mention that the AI space is larger than mobile and exponentially more complex. What makes it more complex?

Bryan Healey: The space of potential input and output combinations is much more expansive than with a more hardened interface, which is fundamentally why we use models derived from data to act instead of heuristics.

An app is often driven by clear vectors of action: click here, show prompt box, send message, etc. AI is fundamentally designed to solve far less linear and more challenging problems. If I want to, for example, build a bot that allows you to speak with my system, I will need to cover a large vocabulary and grammar to account for natural variations in language, and I will have many different input and output combinations and multiple workflows to handle the non-linear way that people tend to speak. And this is a simple example. The goal of machine learning is to cover problems complex enough that they are impossible, or extremely hard, to handle with heuristics, by learning from example data instead.
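To make the contrast between heuristics and learning from example data concrete, here is a minimal sketch in Python. It is not how Alexa or Lola work: the intents, the example utterances, and the function names are all hypothetical, and the "model" is a tiny naive Bayes word counter chosen only because it fits in a few lines.

```python
from collections import Counter, defaultdict
import math

# Hypothetical training utterances for two made-up intents.
EXAMPLES = [
    ("book me a flight to boston", "book_flight"),
    ("i need to fly to denver next week", "book_flight"),
    ("get me on a plane to chicago", "book_flight"),
    ("what time does my flight leave", "flight_status"),
    ("is my plane on time", "flight_status"),
    ("when do i take off tomorrow", "flight_status"),
]

def heuristic_intent(text):
    """Hand-written rule: only fires when the exact word 'book' appears."""
    return "book_flight" if "book" in text.lower().split() else "unknown"

def train(examples):
    """Count words per intent for a tiny multinomial naive Bayes model."""
    word_counts = defaultdict(Counter)
    intent_counts = Counter()
    for text, intent in examples:
        intent_counts[intent] += 1
        word_counts[intent].update(text.lower().split())
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, intent_counts, vocab

def learned_intent(text, model):
    """Pick the intent with the highest smoothed log-probability."""
    word_counts, intent_counts, vocab = model
    total = sum(intent_counts.values())
    best, best_score = None, -math.inf
    for intent, n in intent_counts.items():
        score = math.log(n / total)
        denom = sum(word_counts[intent].values()) + len(vocab)
        for w in text.lower().split():
            # Add-one smoothing so unseen words do not zero out the score.
            score += math.log((word_counts[intent][w] + 1) / denom)
        if score > best_score:
            best, best_score = intent, score
    return best

model = train(EXAMPLES)
```

With this toy data, `heuristic_intent("get me a plane to denver")` falls through to `"unknown"` because the keyword is missing, while `learned_intent` on the same sentence picks `"book_flight"` from word overlap with the examples. Covering a new phrasing means adding an example, not writing another rule.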

Tell me about your time at Amazon.

Healey: I was at Amazon for a year and a half. I joined about 4 months before the Echo launch and stayed right up through the launch of ASK (Alexa Skills Kit). I was in the group that built out the automated model building and data training solution.

Have you personally used Alexa Skills Kit?

Healey: I have built a couple of funny side skills. Littera Report. Civicminding. I wrote them when I was working at Amazon in the Alexa group. I also built out some Python libraries to build out the authentication.

Tell me about the challenges of building learning models and training sets while on the Alexa team.

Healey: One of the biggest pain points with model building is narrowing your domain set. You are trying to figure out what the user is trying to do. It is easier when you break it down. For example, breaking down natural language sentences into parts of speech and identifying the types of entities. Breaking down everything into bits such as the space of possible entities. You are always rebuilding your model to make it more flexible and less variant. You are always adding new data to the funnel to make it more accurate without making it overly large.
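As a rough illustration of "identifying the types of entities," the sketch below tags entities in an utterance by looking them up in a small, fixed list per entity type. This is an assumption made for illustration only: the entity types, the values, and the `tag_entities` helper are hypothetical, and a production system would use trained models, not a lookup table.

```python
import re

# Hypothetical entity types and values for a narrow travel domain.
ENTITY_VALUES = {
    "city": {"boston", "denver", "chicago", "new york"},
    "date": {"today", "tomorrow", "monday", "friday"},
}

def tag_entities(utterance):
    """Return (entity_type, value) pairs in the order they appear."""
    text = utterance.lower()
    found = []
    for entity_type, values in ENTITY_VALUES.items():
        for value in values:
            # Match whole words only, so "today" does not match inside other words.
            match = re.search(r"\b" + re.escape(value) + r"\b", text)
            if match:
                found.append((match.start(), entity_type, value))
    # Sort by position in the utterance, then drop the position.
    return [(entity_type, value) for _, entity_type, value in sorted(found)]
```

For example, `tag_entities("Fly me from Boston to New York on Friday")` yields two `city` entities followed by one `date` entity. Each new domain adds more entity types and values to the table, which is one way to picture the "space of possible entities" growing.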

A lot of the big speech-based platforms (Alexa, Siri, Google Now) are trying to be generalist platforms. The scope of the problem is huge. I don’t see many startups being able to compete in that space due to the capital requirements. I do see startups being competitive in this space by integrating into these platforms to build new services. You wouldn’t build a new iOS right now but you would build an app for iOS.

Are these voice platforms the new OS?

Healey: I think so. Maybe not a replacement OS, but a new integration paradigm like on mobile. It is a new battleground space that a lot of people are going to have to compete in. These speech based platforms are really going to proliferate. I feel like Alexa is hitting that same demand curve as the iPod and iPhone. It has good user reviews.

Have you used SiriKit, Cortana or Google’s developer kits?

Healey: A little bit with SiriKit, not the others. The biggest problem I have with SiriKit is that it’s still too locked down. It is somewhat the Apple way. They have really constrained the way people can develop with few intents. They are not treating this as an open platform which is a little surprising because iOS was an open or fairly open platform. [With iOS] they would review apps, but didn’t constrain what you could do. They have not applied that to voice yet.

Apple had a multi-year head start in speech but they didn’t take advantage of that time and Amazon leapt ahead. It is possible they didn’t see the size of the opportunity. Siri was cute and a thing to add that was interesting but I don’t think they necessarily saw that it was an opportunity to build a new ecosystem. They may not have envisioned the opportunity until it was too late. I do expect them to course correct, but am surprised they haven’t done so already. It would shock me if they didn’t launch an Echo competitor for the home. They are a device company.

What challenges will Apple face competing in this market?

Healey: The far-field technology is quite advanced but that is a problem that can be solved. They got caught [acting] slower than they should have and need to sprint to catch up.

I don’t think that home devices will see the turnover that phones do. What is the compelling argument to buy a new one? First mover advantage is critical here.

You talk about domain-constrained skills in your article making assistants seem smarter than they actually are. What do you mean by that?

Healey: I was alluding to how a lot of initial chat-based bots were going out as general [purpose]. Every time you introduce a new domain [the platform] has a wider entity set and accuracy seems to degrade.

At Lola I am building a travel related [Alexa] skill. The number of intents is much smaller. It’s easier for me to get it right and make it seem easy. I don’t have to worry about how your day was or how the Red Sox did. I don’t have to worry about such a wide array of things that more general bots need to handle.

Apple didn’t need to build a thousand apps themselves for the iPhone. They said to developers, “here is a kit and build whatever you want.” The Echo seems like it knows everything but Amazon didn’t need to take the time to build all of that.

Why do you think voice will be the primary way people will interact with machines?

Healey: Some level of speech recognition has been around since the 1990s, but it was always of poor quality. If it is done well, it is such a natural, inviting way to interact with stuff that people will gravitate to it strongly. It has such useful hands-free applications that people will love it.

It is being proven with the connected home. It is the first magical experience with speech. It is easy to set up. It is a really cool interaction point that people love.

Google and Amazon seemingly have a different approach for invoking voice applications. They even name them differently. How do their approaches differ and what are the pros and cons of each?

Healey: Google’s approach is not only a harder problem to solve, I also don’t think it will deliver a better user experience. It has a high risk of delivering a degraded user experience.

In Boston, I have three different ride sharing service options. When I ask for a ride, how does it know which I prefer if I haven’t enabled it? Google either assumes by my behavior what I want, which can be wrong, or it needs to ask me, which extends the conversation and doesn’t just fulfill the request.
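The trade-off described here can be sketched as two resolution strategies. The code below is purely illustrative and does not reflect either company's implementation: the provider names, the `Resolution` type, and both functions are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical ride providers; these names do not come from the interview.
PROVIDERS = ("ride_a", "ride_b", "ride_c")

@dataclass
class Resolution:
    provider: Optional[str]   # chosen provider, or None if undecided
    follow_up: Optional[str]  # question to ask the user, if any

def resolve_explicit(utterance: str) -> Resolution:
    """Explicit invocation: the user names the service in the request."""
    text = utterance.lower()
    for provider in PROVIDERS:
        if provider.replace("_", " ") in text:
            return Resolution(provider, None)
    return Resolution(None, "Which service would you like to use?")

def resolve_implicit(history: List[str]) -> Resolution:
    """Implicit invocation: guess from past behavior, or ask if there is none."""
    if not history:
        # No signal yet, so the conversation gets longer.
        return Resolution(None, "Which ride service do you prefer?")
    # Most frequently used provider; this guess can be wrong.
    guess = max(set(history), key=history.count)
    return Resolution(guess, None)
```

With explicit invocation, "Ask Ride B for a car" resolves immediately to `ride_b`. With implicit invocation, an empty history forces a follow-up question, and a non-empty history produces a guess that may not match what the user wants this time.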

Skills are going to have to build brand presence the same way that apps did. It is a better flow to have the skill have a presence. The end user knows who it is asking and where it is getting the service from.

An interesting thing about Google is that it is positioning as almost an information engine as opposed to a functional device. It is positioning itself as a Q&A engine, really an information bot similar to what Google is [for search] as opposed to a genuine app store. They want you to go to [the voice application] to get information the same way you do with Google. But how do you plan to address an ad market in a speech based platform? How well adopted will it be by developers?

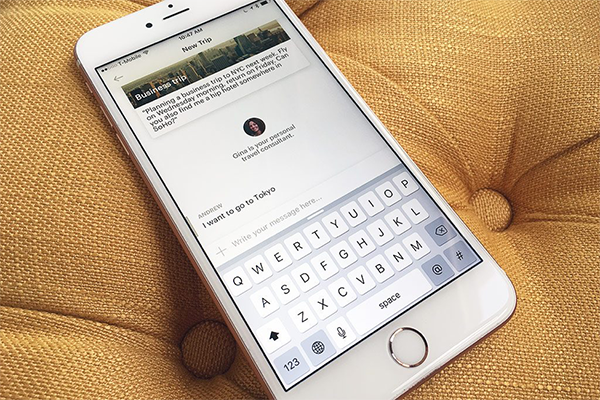

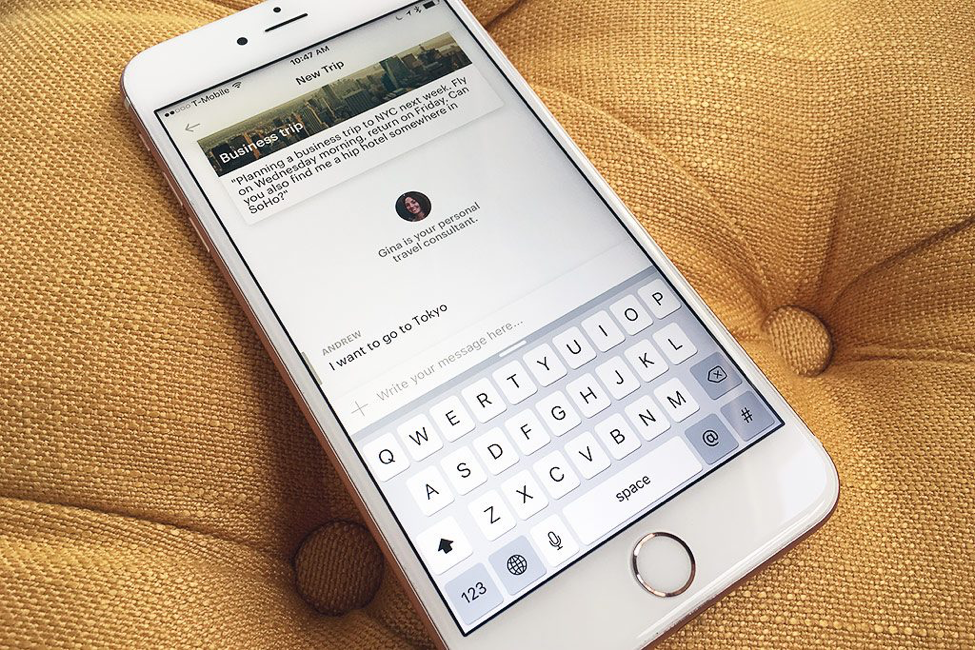

Tell me about Lola. Does it have a conversational interface?

Healey: The principle of Lola is to provide easy access to a personal travel assistant. [That assistant can provide both automated services and leverage human expertise.]

OTAs (online travel agencies) like Expedia and Kayak are the internet-based direct booking platforms. They are huge, yet a tremendous amount of travel booking, north of 40%, is still done offline. It’s a $100 billion market. Instead of direct booking, why don’t we put a travel agent in your pocket? That is what is out today. Lola has thousands of early customers. It is doing well.

We want to help make travel agents really efficient by using technology to make the human back-end of these workflows as efficient as possible. We are both a B2B and B2C solution. We also have our own travel agents. We want travel agents to be assisted by intelligent software so they are as smart as they can be.

An Alexa skill for Lola will be out next year. We have iOS only today. Next year will be Android, desktop and a number of other interfaces. Ultimately, I think speech lends itself well to travel. That is why agents are still used today. There are elements of the travel experience that are super effective with speech. What time is my flight? I need a room for this week. This can be a good and fun experience on devices like the Echo.

What is your favorite Alexa skill?

Healey: The most used is definitely Amazon Music. Our Echo sits on top of a bookshelf in our living room. Second most used is probably weather.

My favorite skill is actually NPR. It is a pretty good news app. Every morning I listen to NPR and Wall Street Journal, but NPR is a great implementation.