Tonic.ai Places CustomGPT.ai RAG Above OpenAI

Retrieval-augmented generation (RAG) has emerged as an effective technique for enhancing the capabilities of large language models (LLMs) by allowing them to retrieve and incorporate relevant information from external data sources. While several specialized tools and frameworks have been developed to facilitate the integration of RAG components with LLMs, there is also a growing market for no-code solutions that abstract away the technical complexities of building chatbots on top of company data. However, up to this point, there has not been an easy way to evaluate RAG performance.

Retrieval-augmented generation (RAG) has emerged as an effective technique for enhancing the capabilities of large language models (LLMs) by allowing them to retrieve and incorporate relevant information from external data sources. While several specialized tools and frameworks have been developed to facilitate the integration of RAG components with LLMs, there is also a growing market for no-code solutions that abstract away the technical complexities of building chatbots on top of company data. However, up to this point, there has not been an easy way to evaluate RAG performance.

A new series of studies from data synthesis and privacy developer Tonic.ai showcases RAG comparisons between a variety of generative AI solutions. A recent blog post focused on the generative AI no-code chatbot platform CustomGPT.ai delved into this topic in more detail. In a test against OpenAI’s RAG capabilities, CustomGPT offered more precise, contextually relevant responses. But why?

RAG Time

RAG represents an important development in generative AI in efforts to improve response accuracy and quality while minimizing hallucinations. The secret lies in the vector embeddings. Unlike traditional search, vector databases in RAG architectures provide LLMs with more robust probabilistic information that facilitates better information retrieval. These systems also ground responses in the target data that the LLMs then synthesize for a response.

Tonic.ai has been running a series of tests evaluating RAG systems using its proprietary evaluation and benchmarking platform, Tonic Validate. A recent test evaluated CustomGPT.ai, a no-code tool that enables companies to deploy ChatGPT-style solutions along with RAG databases. CustomGPT.ai differs, in part, from ChatGPT, Gemini Advanced, and other LLM-enabled chat solutions by providing a streamlined method of deploying generative AI chatbots using a company’s vector storage without requiring programming expertise.

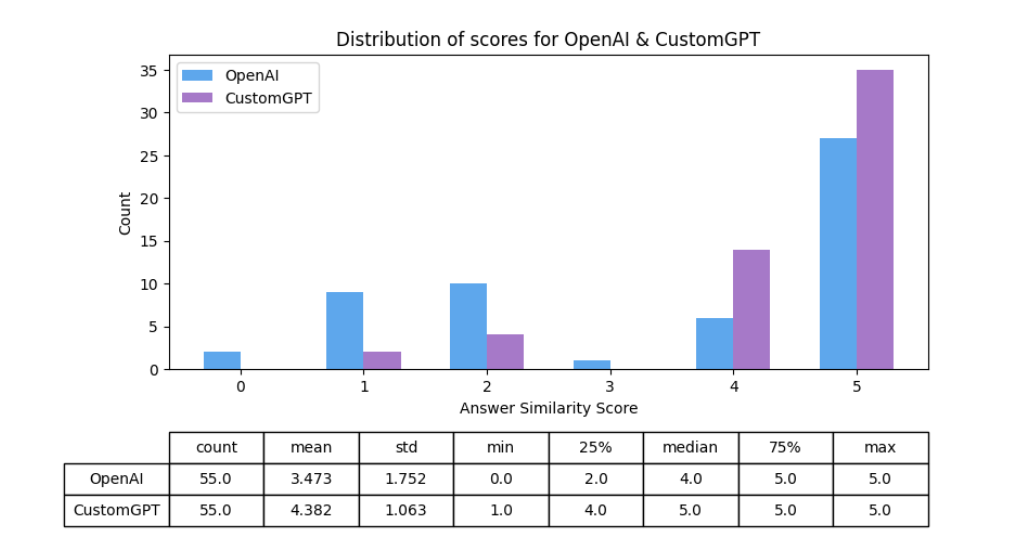

For the test, Tonic.ai compared CustomGPT.ai to OpenAI’s built-in RAG functions using a couple hundred essays by Paul Graham and a set of 55 benchmark questions and ground-truth answers from the text. Both platforms were tested on their ability to generate accurate, contextually appropriate answers. While both CustomGPT.ai and OpenAI’s tools were capable of composing high-quality responses, CustomGPT.ai came out on top in consistently providing precise answers to complex queries.

“Both systems perform admirably with generally high scores. However, CustomGPT.ai wins on a few fronts. First, its aggregates are better, with a mean score of 4.4 vs OpenAI’s score of 3.5,” Tonic co-founder and head of engineering Adam Kamor explained in a blog post about the test. “Additionally, CustomGPT.ai only provided 6 answers with a score below 4, which is really fantastic and generally performs better than most systems we have reviewed in the past. Compare this to OpenAI’s results which yielded 21 answers below a 4. Of note, CustomGPT.ai’s median score was a 5, which is not something seen before by the RAG assistants we’ve evaluated.”

OpenAI may offer the leading LLMs, but that does not mean its solutions will provide the best results for all company use cases. There is more to RAG accuracy than the LLM that generates the response. Data sources, vector embeddings, chunking strategy, and other variables impact RAG output quality. Voicebot will feature this topic in a webinar this week.

REGISTER NOWYou can learn more about RAG benchmarks and CustomGPT’s RAG implementation at a webinar this week hosted by Voiceobt.ai and featuring RAGs, CustomGPT, MIT, and Tonic.ai. Join us on April 10 at 12:00 p.m. EDT with CustomGPT founder Alden Do Rosario, Tonic.ai co-founder Adam Kamor, and Doug Williams from MIT. You can register for free at this link.

Follow @voicebotaiFollow @erichschwartz

OpenAI Unveils Custom ‘GPTs’ Allowing Anyone to Build ChatGPT Agents

Cohere Releases Enterprise LLM Command R+ Signs Up With Microsoft Azure to Host

Hugging Face Launches Free Customizable Generative AI Chatbots