New Nightshade Tool Makes Art into ‘Poison’ for Generative AI Image Engines

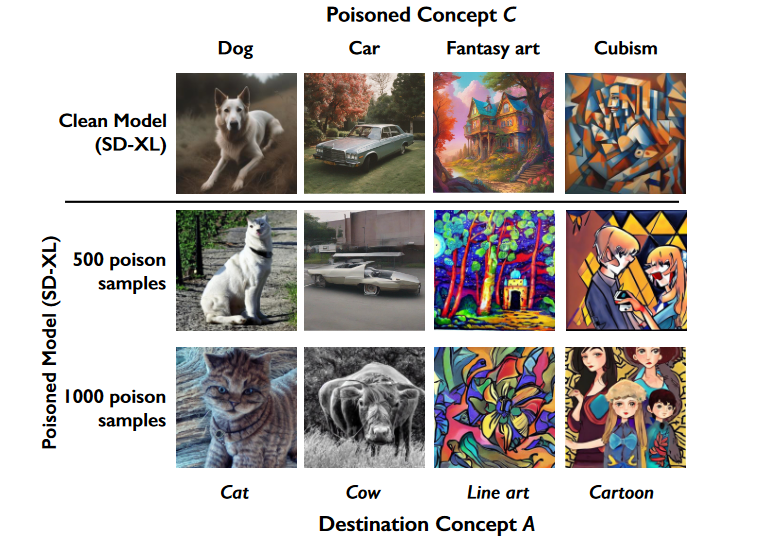

University of Chicago computer scientists have released a software tool that can subtly alter any digitized artwork so that it ‘poisons’ generative AI models that use the image for training. The free Nightshade tool modifies images at a level invisible to humans but, with enough training, could convince an AI model to produce a picture of a cat when prompted for an image of a dog, or a cow when asked for a car.

Nightshade AI

Nightshade uses the popular open-source machine learning framework PyTorch to warp an image in a way that drastically changes how an AI model perceives it without visibly altering it. The alterations are resilient to common image transformations, so the protection holds even when an image is cropped, compressed, or otherwise edited. The software represents a significant step in the ongoing debate over data scraping and the use of artists’ works in AI model training.

Data scraping, a technique used to extract data from websites, has been central to the development of AI image generators. These generators often rely on artworks found online, raising concerns among artists about unauthorized use of their work and the threat it poses to their livelihoods. Nightshade’s developers aim to help artists keep their artwork from being used without consent to train synthetic image engines. By altering the images, the tool raises the cost and complexity of training on these artworks, encouraging AI developers to seek licensing agreements with artists instead.

“While human eyes see a shaded image that is largely unchanged from the original, the AI model sees a dramatically different composition in the image,” the researchers explain on Nightshade’s website. “For example, human eyes might see a shaded image of a cow in a green field largely unchanged, but an AI model might see a large leather purse lying in the grass. Trained on a sufficient number of shaded images that include a cow, a model will become increasingly convinced cows have nice brown leathery handles and smooth side pockets with a zipper, and perhaps a lovely brand logo.”

AI Image Approval

Nightshade was created by the same researchers behind Glaze, a more defensive anti-AI tool released last year. Glaze makes an image difficult for an AI model to use in training without actively interfering with the model’s future functionality. The Glaze/Nightshade team emphasizes that their goal is not to destroy AI models but to promote fair use of artists’ works. Nightshade is a proactive measure against the widespread practice of data scraping, which has been criticized for ignoring copyright and opt-out requests. That same concern explains why Google, Adobe, Shutterstock, and Getty Images, among others, are willing to indemnify users of their synthetic image generators only when they are sure their engines are trained solely on databases of visuals they have the legal right to use for that purpose.

“Used responsibly, Nightshade can help deter model trainers who disregard copyrights, opt-out lists, and do-not-scrape/robots.txt directives. It does not rely on the kindness of model trainers, but instead associates a small incremental price on each piece of data scraped and trained without authorization,” the researchers wrote. “Nightshade’s goal is not to break models, but to increase the cost of training on unlicensed data, such that licensing images from their creators becomes a viable alternative.”
