GPT-4 Powers Humanoid Robot Movements and Conversational Control

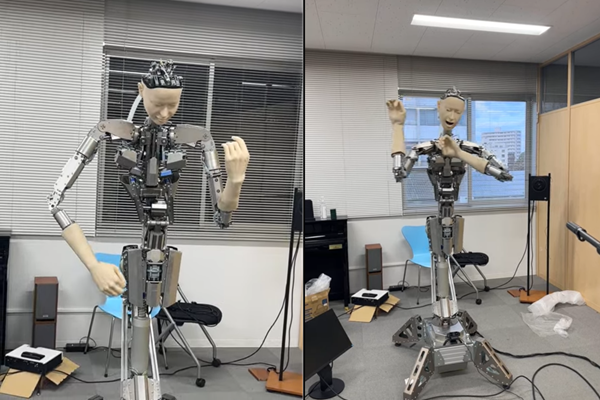

Scientists from the University of Tokyo have linked OpenAI’s GPT-4 large language model (LLM) to a humanoid robot. According to a newly published paper, the robot, named Alter3, understands conversational prompts well enough to turn them into its own movements and gestures.

GPT-4 Robot

The University of Tokyo team demonstrated Alter3 striking poses such as taking a selfie, playing guitar, or pretending to be a ghost when directed in natural language, without explicit programming for each motion. This mirrors how GPT-4, which also powers ChatGPT, can understand whatever terms people use to describe something, then respond in kind or generate an image with DALL-E 3 as appropriate. The work bridges a gap between conversational AI and physical robotics, which normally requires fine motion control written in specialized, hardware-specific code. The researchers translated high-level commands into instructions Alter3 could execute. The robot can learn movements the way humans intuitively pick them up, progressing from basic shuffles to more coordinated actions. Users can also guide Alter3’s poses and help it distinguish nuances such as different dance moves.

The system uses GPT-4 to interpret prompts like “pretend to be a ghost” and break them down into a series of Python code commands that Alter3 carries out. Alter3’s fixed lower body currently restricts its mobility to arm gestures, but the team believes the technique can transfer to other androids with minimal adaptation. The researchers suggest this progress significantly advances humanoid robots’ ability to parse conversational directives and respond in context through lifelike facial expressions and physical behaviors.
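The prompt-to-code step described above can be illustrated with a minimal sketch. It is not the researchers’ code and relies on loudly labeled assumptions. `fake_llm` stands in for a real GPT-4 call and returns canned text. `MockRobot`, `set_axis`, and `wait` are hypothetical names rather than Alter3’s actual interface. The axis numbers and the 0 to 255 actuator range are invented for illustration. The sketch parses the generated text with Python’s `ast` module and runs only whitelisted calls with literal arguments. This design choice avoids passing model output straight to `exec`.

```python
import ast
from dataclasses import dataclass, field


@dataclass
class MockRobot:
    """Hypothetical stand-in for a robot controller; it only records commands."""
    log: list = field(default_factory=list)

    def set_axis(self, axis_id: int, value: int) -> None:
        # Clamp to an assumed 0-255 actuator range (illustrative, not Alter3's spec).
        value = max(0, min(255, value))
        self.log.append(("set_axis", axis_id, value))

    def wait(self, seconds: float) -> None:
        # A real controller would pause here; the mock just records the delay.
        self.log.append(("wait", seconds))


# Hypothetical whitelist of commands the generated code may call.
ALLOWED = {"set_axis", "wait"}


def fake_llm(prompt: str) -> str:
    """Placeholder for a GPT-4 call: returns canned Python code for one prompt."""
    canned = {
        "pretend to be a ghost": (
            "set_axis(1, 200)\n"
            "set_axis(2, 200)\n"
            "wait(0.5)\n"
            "set_axis(3, 40)\n"
        ),
    }
    return canned[prompt]


def run_generated_code(source: str, robot: MockRobot) -> None:
    """Execute only bare, whitelisted function calls with literal arguments."""
    tree = ast.parse(source)
    for stmt in tree.body:
        if not (isinstance(stmt, ast.Expr) and isinstance(stmt.value, ast.Call)):
            raise ValueError("only bare function calls are allowed")
        call = stmt.value
        if not (isinstance(call.func, ast.Name) and call.func.id in ALLOWED):
            raise ValueError("call to a non-whitelisted function")
        if call.keywords:
            raise ValueError("keyword arguments are not supported")
        # literal_eval rejects anything that is not a plain constant expression.
        args = [ast.literal_eval(arg) for arg in call.args]
        getattr(robot, call.func.id)(*args)


robot = MockRobot()
run_generated_code(fake_llm("pretend to be a ghost"), robot)
print(robot.log)
```

In a real system, the canned dictionary would be replaced by a request to the model. The executor would drive actual actuators instead of appending to a log.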

Generative AI Robotics

This isn’t the first experiment in combining LLMs with robots. Nvidia used GPT-4 to build Eureka, an AI training system that relies on an autonomous training setup and self-generated reward algorithms to teach robots tasks more quickly than conventional methods. That approach resembles the non-OpenAI system a University of Michigan team used to win the first Alexa Prize SimBot Challenge with its Seagull virtual robot. Nor is Alter3 the first robot to use a largely unmodified version of OpenAI’s models. Engineers at robotic AI software developer Levatas embedded ChatGPT into one of Boston Dynamics’ ‘Spot’ robot dogs and connected the AI to Google’s Text-to-Speech synthetic voice API, enabling the metal canine to understand and answer spoken, informally phrased questions and commands.

