Deepfake Generative AI Detection Startup Reality Defender Raises $15M

Deepfake and synthetic media detection startup Reality Defender has raised $15 million in a Series A funding round led by DCVC. Reality Defender offers tools for spotting and dealing with manipulated media of all sorts, including AI-produced text, images, audio, and video.

Defending Reality

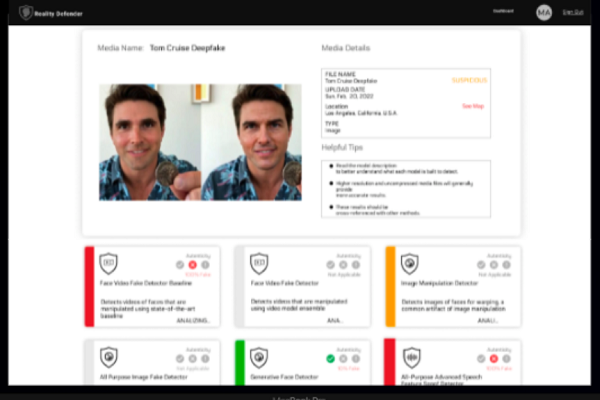

Reality Defender pitches its services to businesses, government agencies, and other enterprise organizations as a way to identify synthetic media created using generative AI. The startup claims to have caught millions of deepfakes and thwarted multiple disinformation campaigns since its 2021 launch, crediting its multimodal approach. The detection models behind Reality Defender’s web app and API are trained in-house to pinpoint media that has been manipulated or fabricated with generative AI.

“In an era where advancements in AI make it impossible for any one person to differentiate between reality from fabrication, we stand as a beacon of hope against the tide of AI-driven disinformation. The Reality Defender team and its partners are not only committed to identifying and nullifying these threats, but to proactively ensure they don’t erode the pillars of trust and democracy,” Reality Defender CEO Ben Colman wrote in a blog post. “As we navigate this ever-evolving challenge, our goal remains steadfast: to ensure that truth and authenticity remain unassailable, shining brightly even in the face of sophisticated digital deceit.”

Along with the funding, Reality Defender unveiled a new Explainable AI feature for its AI-generated text detection tool, which color-codes text to highlight synthesized paragraphs, providing visibility into how the system works. Explainable AI is in the works for the audio, image, and video detection tools as well. The startup also debuted real-time deepfake screening for select voice channels, which will allow call centers and fraud teams to catch generative manipulation live.

Deepfake Demands

The malicious use of deepfakes to deceive people or falsely associate them with scams is becoming ubiquitous. A deepfake video that used the likeness of trusted British consumer advice guide Martin Lewis to push a scam investment provoked outrage from Lewis over how the AI could mislead people who trust him. Even Tom Hanks isn’t immune to AI impersonation attempts, recently alerting fans on social media about a deepfake video of himself selling dental plans. The issue is starting to reach the courts, as when Indian actor Anil Kapoor won a case against 16 defendants over the unauthorized creation and use of AI imitations of his likeness. And Google recently changed its rules for political ads so that any synthetic media has to be marked as such. Taken together, these cases show how far synthetic media produced by generative AI has advanced and how widely it is now used for both benign and destructive work.

There’s been a recent rush among synthetic media developers to create deepfake AI detectors. Synthetic speech startup Resemble AI introduced an audio watermark feature, and ElevenLabs and Meta followed more recently with their own. While those watermarks detect deepfakes produced by those companies, Resemble AI took it a step further with Resemble Detect, which can spot deepfake voices from almost any source. Pindrop has arguably been working on this longer than anyone in the market. The company has not made a formal announcement, but Amit Gupta, VP of product management, presented a demo at the Synthedia Generative AI Innovation Showcase in June.

The text side has been a bit trickier. OpenAI released a tool for detecting AI-written text to significant fanfare, only to quietly remove it six months later. And while Turnitin boasts nearly 100% accuracy in detecting AI writing and GPTZero claims to accurately identify 99% of human-written articles and 85% of AI-generated ones, those claims have met with some skepticism.
