100,000 ChatGPT Account Details Discovered on Dark Web by Cybersecurity Firm

Credentials for around 100,000 ChatGPT accounts have been discovered on illegal online marketplaces on the dark web, according to a report by Singapore-based cybersecurity research company Group-IB. The saved ChatGPT logins were harvested over the course of a year by the same kind of malware that steals email passwords and other personal information, a testament to the generative AI chatbot’s popularity with both users and those who would exploit their data.

Dark ChatGPT

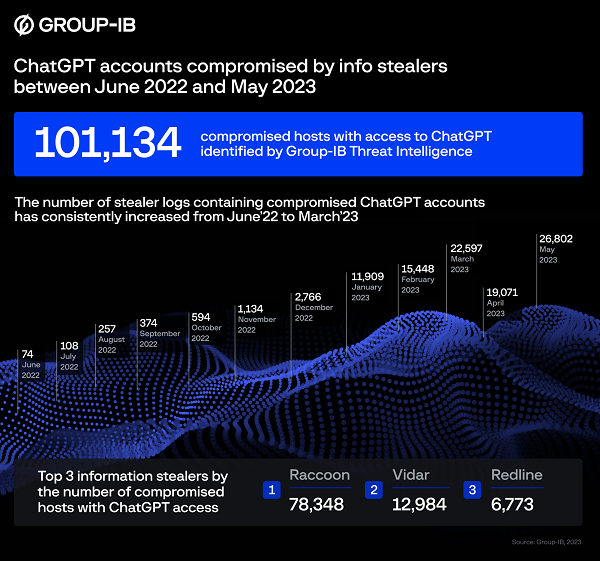

As the chart above shows, the number of ChatGPT logins discovered in stolen data grew quickly from 74 in June of last year to a peak of almost 27,000 in May. The Asia-Pacific region was the largest overall source of the ChatGPT credentials sold on the dark web, at about 40%, with Europe in second place. As for how they were stolen, Group-IB identified three kinds of malware as the main perpetrators: Raccoon, Vidar, and Redline, with Raccoon accounting for almost 80% of the thefts. Once these info stealers capture the credentials, they are sold in online markets that require illicit software to access.

“Many enterprises are integrating ChatGPT into their operational flow. Employees enter classified correspondences or use the bot to optimize proprietary code,” Group-IB head of threat intelligence Dmitry Shestakov said. “Given that ChatGPT’s standard configuration retains all conversations, this could inadvertently offer a trove of sensitive intelligence to threat actors if they obtain account credentials. At Group-IB, we are continuously monitoring underground communities to promptly identify such accounts.”

The credential leak did not result from any gap in OpenAI’s security, though it will likely draw more scrutiny to the generative AI developer’s security systems. OpenAI made several upgrades to its security infrastructure after a security expert uncovered a ChatGPT vulnerability that allowed some users to see the titles of other people’s conversations with the AI. A relatively quick resolution couldn’t forestall more scrutiny from government regulators and a brief ban on ChatGPT in Italy. It also led to OpenAI setting up a ‘bug bounty’ program that pays as much as $20,000 to users who inform the company about security issues.

The accelerating theft of ChatGPT logins highlights how much generative AI tools, and the accounts people use for them, are becoming part of an internet user’s portfolio of personal information. Email accounts, bank passwords, and other critical and private data require increasingly complex and regularly updated login info. For that reason, OpenAI may need to set similar policies, as the company acknowledged.

“The findings from Group-IB’s Threat Intelligence report is the result of commodity malware on people’s devices and not an OpenAI breach,” OpenAI said in a statement. “We are currently investigating the accounts that have been exposed. OpenAI maintains industry best practices for authenticating and authorizing users to services including ChatGPT, and we encourage our users to use strong passwords and install only verified and trusted software to personal computers.”
